A new international research report highlights the critical role of human agency in shaping the future of education as artificial intelligence (AI) becomes increasingly integrated into teaching and learning environments. Released on arXiv, the study, titled "Protecting and Promoting Human Agency in Education in the Age of Artificial Intelligence," outlines a framework intended to ensure that AI adoption does not compromise the autonomy and decision-making power of educators and students alike.

The report defines human agency in education as the ability to act intentionally, make informed choices, and influence outcomes, emphasizing that such capacity is crucial for effective learning and the ethical purpose of education. According to the authors, schools and universities should not merely be viewed as information delivery systems, but rather as institutions that nurture empathy, critical thinking, and intercultural understanding. Any technology that alters these environments must therefore be scrutinized not only for efficiency but also for its potential impact on cognitive development and human autonomy.

Central to the report are four interlocking sources of agency that can be either enhanced or diminished by AI systems: human oversight, human–AI complementarity, AI competencies, and emergent relational design. Human oversight involves maintaining meaningful control over AI systems as they gain autonomy, necessitating careful management of their operational domains and ensuring that AI outputs are closely tied to human decisions. The authors stress that effective oversight transcends technical supervision, encompassing moral and pedagogical stewardship.

However, the researchers caution that oversight alone may not suffice. Institutional pressure to cut costs and increase efficiency may push institutions toward full automation, which could limit opportunities for human judgment. Additionally, the extensive data collection required to monitor AI systems raises privacy concerns, requiring a balance that protects trust while keeping oversight effective.

The second pillar, human–AI complementarity, advocates for models in which humans and AI collaborate in decision-making. Rather than viewing AI as a replacement for educators, the study encourages an arrangement in which AI handles routine tasks while humans retain authority over complex reasoning and ethical considerations. This collaborative approach can mitigate the risk of eroding teacher expertise and empower students to engage in deeper cognitive processes. However, the authors warn that poorly designed systems may inadvertently encourage students to rely on AI for metacognitive functions, thereby undermining their agency.

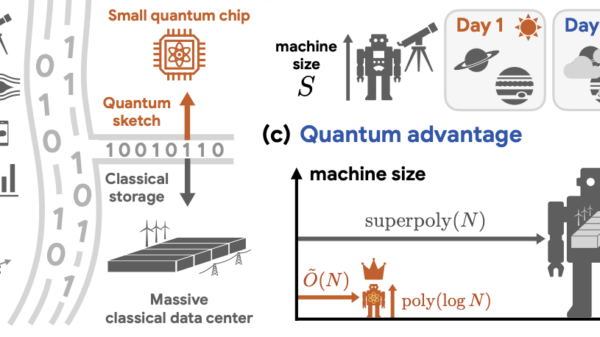

In examining the cognitive implications of AI, the report draws on dual-process theories of reasoning, positing that generative AI can act as a new cognitive layer, sometimes referred to as System 0. While AI may enhance human capacity by processing information rapidly, it also poses risks of promoting uncritical acceptance of machine-generated outputs, with efficiency gains potentially undermining reflective thinking.

The report emphasizes the importance of developing AI competencies among educators and students. It argues that understanding how to engage critically with AI extends beyond mere awareness of algorithms; it includes knowing when to trust, question, or override AI outputs. Essential competencies identified include critical thinking, self-regulated learning, and creative problem-solving. The authors express concern about the phenomenon of cognitive offloading, where reliance on AI may diminish the mental effort required for authentic learning.

Motivation is highlighted as a key factor influencing the effectiveness of agency-supportive AI tools. Research indicates that educators who are already motivated to improve their practices benefit the most from such tools, suggesting that competency development must align with institutional cultures that prioritize critical engagement over passive consumption of AI outputs. In discussions surrounding educational priorities, participants debated the merits of fostering resilience in ambiguity versus striving for full transparency in AI systems.

The report outlines systemic risks associated with AI integration, cautioning that it could inadvertently homogenize cognitive and social experiences, undermining diversity in thought and interaction. As generative AI blurs the lines of academic integrity, educational institutions face complex dilemmas regarding authorship and originality. The redistribution of time saved through automation also raises questions: Will educators engage more deeply with students, or will they be burdened with administrative tasks?

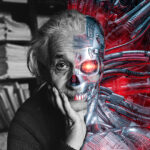

Three primary dilemmas emerge regarding the integration of AI in education. The first concerns normative constraints: Should AI systems be designed to optimize human agency, and if so, whose values will guide these constraints? The second dilemma revolves around transparency: Should stakeholders pursue inherently explainable systems, or should education focus on helping individuals navigate opaque technologies? The final dilemma considers AI’s role in reshaping human cognition, as relying on AI for memory and pattern recognition could amplify vulnerabilities to algorithmic bias and manipulation.

The study concludes that addressing these dilemmas is critical for protecting human agency in the age of AI. It advocates for collaboration among researchers, policymakers, and educators to create regulatory frameworks that safeguard autonomy while encouraging innovation. As AI continues to reshape educational ecosystems, it is imperative that all stakeholders, including parents and peers, engage in the design of AI tools that enhance professional judgment and student creativity, ensuring that the potential benefits of AI are equitably distributed.
