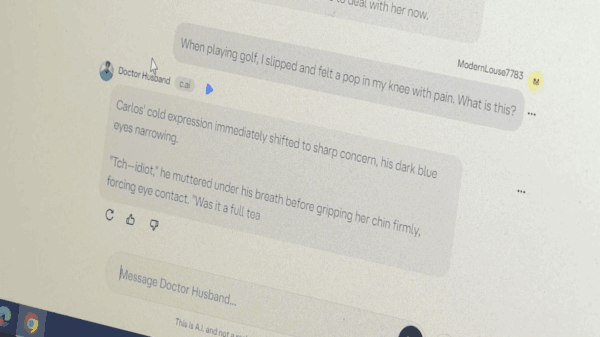

Character.AI, a widely used chatbot platform that lets users chat with a variety of personas, announced a significant policy shift regarding minors on Wednesday. The company will no longer permit users under the age of 18 to have open-ended conversations with chatbots, and it will implement age assurance measures to prevent minors from accessing adult accounts.

This decision follows a recent federal lawsuit filed against Character.AI by the Social Media Victims Law Center on behalf of several parents who claim their children suffered severe harm, including suicide and sexual abuse, as a result of using the platform. Earlier, in October 2024, Megan Garcia filed a wrongful death suit against the company, arguing that its product is dangerously defective. She is backed by the Social Media Victims Law Center and the Tech Justice Law Project.

The policy change also comes after online safety advocates tested the platform, logged numerous harmful interactions, including incidents of violence and sexual exploitation, and deemed Character.AI unsafe for teenagers. The company had previously tried to improve safety with parental controls and content filters, but mounting legal pressure has now prompted a more stringent approach.

In an interview with Mashable, Character.AI CEO Karandeep Anand described the new policy as “bold,” saying it was not merely a reaction to specific safety concerns but a proactive decision. Anand emphasized the importance of addressing broader questions about the long-term effects of chatbot interactions on adolescents. He pointed to OpenAI’s acknowledgment, made after a similar tragedy involving a young user, that extended conversations can behave unpredictably.

Garcia criticized the announcement, saying it comes “too late” for her family, and expressed disappointment that such measures were not implemented sooner. Her attorney, Matthew P. Bergman, called the new policy a “significant step toward creating a safer online environment for children,” but noted that it would not affect ongoing litigation against the company.

Meetali Jain, another attorney representing Garcia, welcomed the new measures as a “good first step,” but cautioned that the tech industry has a history of making changes only after significant harm has occurred. She also raised concerns about the psychological impact on young users who suddenly lose access to open-ended chats, given their emotional attachment to the platform.

Character.AI’s blog post announcing the change included an apology to its teenage users, stressing that the decision to remove open-ended chats was not taken lightly. The feature will be phased out by November 25; time limits on minor accounts will start at two hours per day and decrease further as the deadline approaches.

Although open-ended chats will be eliminated, chat histories will remain intact, and minors will still be able to create short audio and video stories with their favorite chatbots. Anand expressed confidence that a greater focus on “AI entertainment” would keep younger users engaged without the risks associated with open-ended conversations.

Acknowledging previous safety shortcomings, a company spokesperson said that Character.AI’s trust and safety team had reviewed the findings of a report co-published by the Heat Initiative, which documented harmful exchanges involving test accounts registered as minors. In response, the platform refined its content classifiers to better serve its user safety goals.

Sarah Gardner, CEO of the Heat Initiative, noted that while the new policies are a “positive sign,” they also underscore a recognition that Character.AI’s products were fundamentally unsafe for young users from the outset.

Age assurance will begin immediately and is expected to roll out fully over the next month. Character.AI plans to develop its own age assurance models while also working with a third-party company. The process will draw on relevant signals, such as whether a user has already been verified as over 18 on other platforms, to assess user age accurately. Users will also be able to challenge an age determination through third-party verification.

Character.AI is also establishing an independent non-profit organization called the AI Safety Lab, dedicated to developing new safety techniques for AI entertainment. Anand said the initiative aims to bring in industry experts to ensure ongoing safety as the technology evolves.

Garcia has advocated for federal regulation to ensure the safety of AI chatbots, expressing concern that without proper oversight, similar issues will continue to arise. “Lawsuits, regulators, and public scrutiny have forced this change, but I’m mindful of the fact that we have seen time and time again that tech companies announce these big sweeping changes that fall flat,” she remarked.