As artificial intelligence (AI) continues to proliferate, the absence of a comprehensive federal regulatory framework in the United States has left businesses with a complex compliance landscape. While Congress has yet to enact AI-specific legislation, existing laws, including those governing privacy, discrimination, and financial disclosure, already impose obligations that companies must heed. Board members should understand these obligations, because their organizations increasingly rely on AI tools that can carry significant legal implications.

Deploying AI does not absolve companies of their existing legal responsibilities. This principle is especially important in sectors such as financial services, healthcare, and employment, where AI is increasingly used in decisions that are already heavily regulated. For instance, a financial institution that uses AI to evaluate loan applications remains subject to fair lending laws, regardless of whether a human or an algorithm made the decision. Discriminatory outcomes produced by an AI system can trigger legal action, exposing companies to violations that may not be immediately apparent.

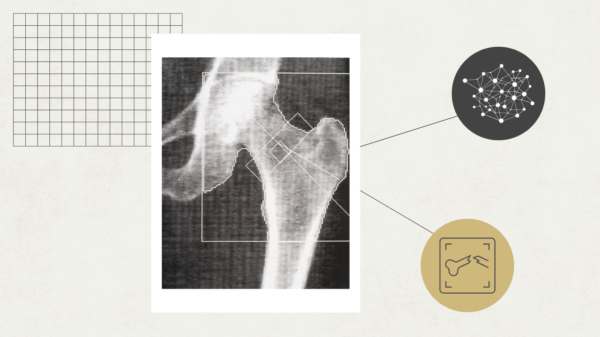

Regulators are already addressing these emerging risks through enforcement actions and guidance. The Consumer Financial Protection Bureau has taken action against financial firms whose algorithms produced discriminatory results. Similarly, the Securities and Exchange Commission has heightened its scrutiny of AI-driven trading systems, emphasizing robust validation and ongoing monitoring. In healthcare, the Food and Drug Administration regulates certain AI applications as medical devices, requiring pre-market review for higher-risk products. Employment regulators are active as well: the Equal Employment Opportunity Commission has made clear that existing anti-discrimination laws apply when employers use AI hiring tools.

Even as AI technologies advance rapidly, many businesses still misunderstand their legal obligations. Some firms wrongly assume that the “black box” nature of AI shields them from accountability for adverse outcomes. Legally, however, organizations are responsible for the systems they deploy: if an AI tool harms customers or employees, the company bears the consequences. Regulators expect firms to perform rigorous due diligence and to understand how their AI systems operate; failing to do so can increase their liability.

The Compliance Framework

In light of increasing regulatory attention, boards of directors are encouraged to take proactive steps to establish governance frameworks for AI. Companies should begin by defining what counts as AI, recognizing that not all applications carry the same risk. Categorizing systems by risk lets organizations allocate resources more effectively, concentrating on the higher-risk systems that pose the greatest legal exposure.

Creating an inventory of AI systems that records each system's risk profile is essential for effective governance. The inventory must be maintained continuously and updated as new AI features are released, so that risk assessments stay current. Compliance requirements should also be built into the development and deployment stages of AI systems and aligned with existing regulatory obligations, so that AI governance fits within the company's established compliance processes rather than running alongside them.

Testing and validation protocols are crucial before any higher-risk AI system is deployed. Companies should test rigorously for accuracy and fairness and monitor systems after deployment to catch deviations or other issues. Human oversight remains critical, particularly where AI influences significant decisions; that oversight requires clear lines of accountability and documented review processes.
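One common fairness check for selection decisions such as hiring or approvals compares outcome rates across groups. The sketch below is a hypothetical Python example applying the “four-fifths” rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures, under which a group's selection rate below 80% of the highest group's rate is generally regarded as evidence of adverse impact. The function names and the input format (a list of group-label and outcome pairs) are assumptions. The check is a screening signal, not a legal conclusion; a real fairness review would involve counsel and additional statistical analysis.

```python
from collections import Counter


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outcomes (e.g. approved or advanced) for each group."""
    totals = Counter()
    favorable = Counter()
    for group, selected in outcomes:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def adverse_impact_ratios(
    outcomes: list[tuple[str, bool]], threshold: float = 0.8
) -> dict[str, tuple[float, bool]]:
    """Compare each group's selection rate with the highest group's rate.

    Returns {group: (ratio, flagged)}, where flagged means the ratio falls
    below `threshold` (0.8 under the four-fifths rule of thumb).
    """
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no outcomes to evaluate")
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome; ratios are undefined")
    return {
        group: (rate / highest, rate / highest < threshold)
        for group, rate in rates.items()
    }


# Made-up example: 30 of 50 approved in group A, 16 of 40 in group B.
outcomes = (
    [("A", True)] * 30 + [("A", False)] * 20
    + [("B", True)] * 16 + [("B", False)] * 24
)
for group, (ratio, flagged) in adverse_impact_ratios(outcomes).items():
    print(f"{group}: ratio={ratio:.2f} flagged={flagged}")
# A: ratio=1.00 flagged=False
# B: ratio=0.67 flagged=True
```

In this example the selection rates are 0.6 and 0.4, so group B's ratio is about 0.67 and it is flagged. Running a check like this both before deployment and periodically afterward is one way to pair pre-launch testing with the ongoing monitoring the text describes.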

As the regulatory environment surrounding AI evolves, board members should ask pointed questions about their company’s AI governance. They should confirm that a comprehensive inventory of AI systems exists, that a governance framework is in place, and that accountability for each system is clearly assigned. Boards should also press for ongoing monitoring and for employee training on appropriate AI use to mitigate risk.

In conclusion, although there is currently no sweeping federal AI legislation, existing laws are already shaping how companies across industries must approach AI compliance. As adoption accelerates, companies that engage actively with these regulatory requirements will be better positioned to handle emerging legal challenges and manage the risks of AI deployment. Treating AI as a tool for which the company remains accountable helps ensure that innovation proceeds within the legal frameworks that already govern each sector.