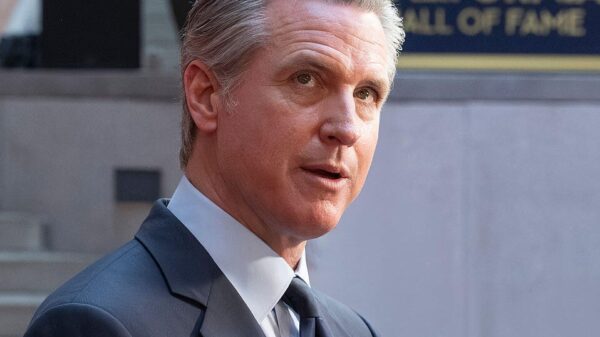

California Governor Gavin Newsom signed an executive order on Monday aimed at establishing safety and privacy guidelines for artificial intelligence (AI) companies, marking a pivotal step in state-level regulation. Billed as “first-of-its-kind,” the order seeks to ensure that innovative technologies do not compromise public safety or civil rights.

In a statement following the signing, Newsom emphasized, “California’s always been the birthplace of innovation. But we also understand the flip side: in the wrong hands innovation can be misused in ways that put people at risk.” The order comes in response to the Trump administration’s earlier efforts to limit state regulation of AI in favor of a uniform nationwide approach, a position often influenced by lobbying from major tech firms.

“While others in Washington are designing policy and creating contracts in the shadow of misuse, we’re focused on doing this the right way,” Newsom added, highlighting the state’s proactive stance in crafting its regulatory framework. Under the new order, companies that wish to engage in business with California state agencies will be required to certify that their AI systems include safeguards against illegal content, harmful biases, and potential civil rights violations.

The order also instructs state agencies to expand their use of vetted AI tools in order to improve public services. Newsom asserted, “California leads in AI, and we’re going to use every tool we have to ensure companies protect people’s rights, not exploit them or put them in harm’s way.”

This move places California at the forefront of a growing conversation around AI governance, particularly as the White House recently unveiled a national framework outlining Congress’s approach to AI. The federal guidelines favor a “light-touch” regulatory stance, which has raised concerns among advocates for stronger oversight.

As AI technologies continue to evolve rapidly, the contrast between state and federal approaches may become a significant point of contention. California’s stringent requirements may not only set a precedent for other states but also influence the national dialogue on how best to address the complexities of AI safety and ethics.

The implications of California’s executive order extend beyond state borders, potentially serving as a blueprint for other regions grappling with similar issues. As technology increasingly intersects with daily life, the responsibility to protect individuals from the risks of AI misuse is becoming paramount.

In the coming months, all eyes will be on how California implements these regulations and whether they lead to enhanced protections or provoke pushback from the tech industry. The balance between fostering innovation and safeguarding public welfare remains a critical challenge as the state moves forward.
