In a move aimed at democratizing access to artificial intelligence, programmer Arman Hussein has introduced GuppyLM, a small-scale language model that lets users build their own AI in just five minutes using Google Colaboratory. Released on April 7, 2026, GuppyLM is designed to demystify how language models work by letting people without specialized training build one themselves.

Many people today interact with large-scale language models such as OpenAI’s GPT, Google’s Gemini, and Anthropic’s Claude, often without fully understanding how they work. These models typically contain billions of parameters and require substantial computational resources and expertise to develop. Hussein wants to dispel the notion that building one is akin to magic: “This project exists to show that training your own language model isn’t magic.”

GuppyLM has approximately 8.7 million parameters and uses the Transformer architecture, which Google researchers introduced in 2017. That makes it tiny next to models such as Trinity-Large-Thinking, which American startup Arcee AI announced with 399 billion parameters, or OpenAI’s GPT-3, which has 175 billion parameters, roughly 20,000 times as many as GuppyLM. Small as it is, GuppyLM serves as a practical demonstration of how accessible language modeling can be.
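Parameter counts for a GPT-style Transformer follow directly from a handful of hyperparameters. The Python sketch below tallies them for a hypothetical configuration: the vocabulary size, width, depth, feed-forward size, learned positional embeddings, biased linear layers and tied input/output embeddings are all assumptions for illustration, not GuppyLM's published settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TinyConfig:
    """Hypothetical hyperparameters for a small GPT-style decoder (NOT GuppyLM's documented config)."""
    vocab_size: int = 4096
    d_model: int = 384
    n_layers: int = 6
    d_ff: int = 768
    max_seq_len: int = 128
    tie_embeddings: bool = True  # assumption: output layer reuses the token embedding matrix


def count_parameters(cfg: TinyConfig) -> int:
    d, f = cfg.d_model, cfg.d_ff
    # Attention: Q, K, V projections plus the output projection, each a d x d matrix with a bias.
    attn = 3 * (d * d + d) + (d * d + d)
    # Feed-forward: expand to d_ff, then project back to d_model, both with biases.
    ffn = (d * f + f) + (f * d + d)
    # Two LayerNorms per block, each with a scale and a shift vector.
    norms = 2 * (2 * d)
    per_layer = attn + ffn + norms

    token_emb = cfg.vocab_size * d
    pos_emb = cfg.max_seq_len * d  # assumption: learned positional embeddings
    final_norm = 2 * d
    # An untied model adds a separate projection from d_model back to the vocabulary.
    lm_head = 0 if cfg.tie_embeddings else cfg.vocab_size * d

    return cfg.n_layers * per_layer + token_emb + pos_emb + final_norm + lm_head


print(f"{count_parameters(TinyConfig()):,}")  # prints 8,726,016
```

With these assumed values the total comes to 8,726,016, close to the reported 8.7 million, though other combinations of width, depth and vocabulary land in the same range and GuppyLM's real configuration may differ. Most of the budget sits in the six Transformer blocks; the token embedding table is the next-largest piece.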

The model and its datasets are publicly available on Hugging Face, a popular platform for sharing machine learning resources. Hussein emphasizes that building GuppyLM requires neither an advanced degree nor expensive hardware, which makes it an ideal educational tool. Users can train the model on readily available text data and experiment with the technology at their own pace.

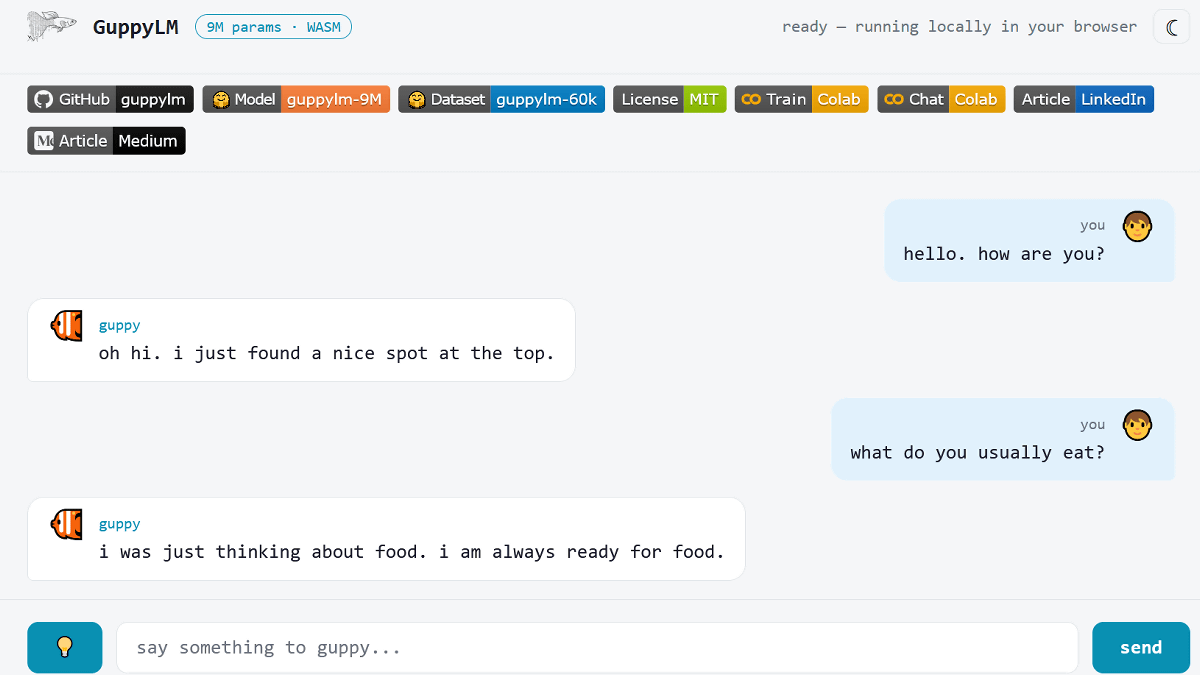

As its playful name suggests, GuppyLM simulates the responses one might expect from a small fish. It converses only about a narrow set of topics such as water, food, light, and other aquarium-related subjects, and it struggles with abstract concepts like money or politics. Its responses are short and lowercase, with a maximum sequence length of 128 tokens. For instance, when asked, “What do you usually eat?”, GuppyLM might respond, “i was just thinking about food. i am always ready for food.”

The project’s dataset reflects this character: it consists of sentences written from a guppy’s point of view, so the model’s replies come out simple and direct. Users can also chat with GuppyLM through a web interface powered by WebAssembly, which makes it even more accessible.
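Training on guppy-style sentences comes down to next-token prediction: the model sees a window of tokens and learns to predict each token that follows. As a minimal sketch, assuming a character-level tokenizer and a few invented sentences (neither is taken from GuppyLM's code or dataset), the Python below turns text into (input, target) pairs no longer than the 128-token sequence limit.

```python
from typing import Dict, List, Tuple

# Invented guppy-style sentences for illustration; these are NOT taken from the GuppyLM dataset.
CORPUS = [
    "the water feels warm today.",
    "i see a light. is it food?",
    "i will swim to the top and wait.",
]


def build_vocab(text: str) -> Dict[str, int]:
    # Character-level vocabulary: a simplifying assumption, GuppyLM's real tokenizer may differ.
    return {ch: i for i, ch in enumerate(sorted(set(text)))}


def make_examples(
    text: str, vocab: Dict[str, int], block_size: int = 128
) -> List[Tuple[List[int], List[int]]]:
    """Cut the encoded text into (input, target) windows for next-token prediction.

    Each target is its input shifted left by one token, so every position learns to
    predict the token that follows it. No window is longer than block_size tokens.
    """
    ids = [vocab[ch] for ch in text]
    examples = []
    # Step by block_size; each slice takes one extra token so the shifted target stays aligned.
    for start in range(0, len(ids) - 1, block_size):
        chunk = ids[start : start + block_size + 1]
        examples.append((chunk[:-1], chunk[1:]))
    return examples


text = "\n".join(CORPUS)
vocab = build_vocab(text)
examples = make_examples(text, vocab, block_size=128)
```

Each target is the input shifted by one position. Large models like GPT-3 are trained on the same next-token objective, just with vastly more data and parameters.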

To see these capabilities firsthand, users can visit the dedicated website and chat with GuppyLM. Asked how it is doing, the model replies with endearing simplicity, such as “oh hi. i just found a nice spot at the top.” This illustrates how the model is built to provide engaging, albeit niche, interactions.

Hussein’s initiative not only highlights the potential of smaller-scale language models but also serves as a reminder that artificial intelligence can be demystified and made more approachable. As AI technology continues to evolve, projects like GuppyLM may pave the way for broader participation in the field, encouraging individuals to explore the principles of machine learning without the barriers typically associated with large-scale models.
