The spotlight at San Francisco’s HumanX conference shifted dramatically from OpenAI’s ChatGPT to Anthropic’s Claude, reflecting a significant transformation in the enterprise AI landscape. The event, which gathered hundreds of AI practitioners and corporate decision-makers, served as a barometer for the evolving sentiment within the industry, showcasing a growing preference for Claude as businesses reassess their AI partnerships.

Historically, major AI conferences have gravitated around the name ChatGPT, but this year marked a decisive pivot. Attendees, including CTOs and product managers responsible for multimillion-dollar AI deployments, engaged in extensive discussions about Claude, signaling a possible turning point in the ongoing competition for AI supremacy. OpenAI, which has dominated the sector since launching ChatGPT in late 2022, finds itself contending with mounting competition and internal challenges at a critical juncture.

The enthusiasm surrounding Claude at HumanX underscores its increasing appeal among enterprise customers. While OpenAI captured early consumer interest, Anthropic has been diligently cultivating trust with businesses requiring reliable AI systems for real-world applications. This strategic focus on building relationships with enterprises reflects a deliberate approach that may be paying off as organizations prioritize safety and reliability in their AI solutions.

Critically, the makeup of the HumanX audience amplifies the significance of Claude’s reception. Unlike consumer-focused events, HumanX attracted practitioners who are actively implementing AI technologies, which gives their conversations about alternatives to established solutions particular weight. The shift in dialogue indicates that decision-makers are keeping their options open as they search for robust AI capabilities that align with their operational needs.

Competitive Landscape

The timing of this shift is noteworthy, especially as OpenAI grapples with leadership uncertainties and strategic realignments. In contrast, Anthropic has remained focused on enhancing Claude, with recent iterations showcasing improved reasoning skills and more dependable outputs. These advancements are crucial as enterprises transition AI from testing phases to comprehensive production deployments.

Discussions at HumanX also highlighted Claude’s technical capabilities, particularly in coding tasks. Developers praised Claude’s ability to understand complex programming contexts and generate cleaner code, positioning it as a formidable alternative in a space where developer preferences can significantly influence broader adoption. This focus on technical excellence is essential for any model aiming to break away from the pack.

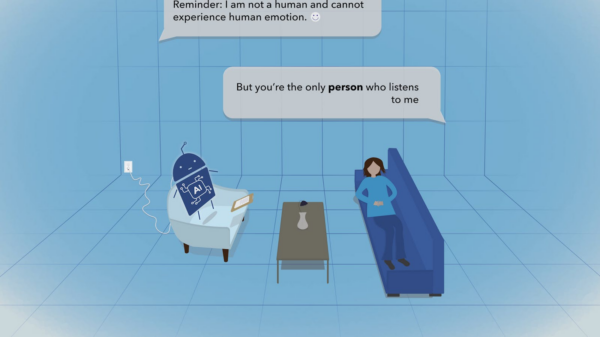

Beyond technical performance, Anthropic’s emphasis on AI safety resonates deeply with enterprises concerned about liability and reputational risks. While many AI vendors tout safety features, Anthropic has woven this principle into its core philosophy from the outset. In an environment populated by professionals deploying AI systems at scale, this message carries substantial weight, particularly when safety is paramount.

Moreover, the speed of this shift in attention underscores how rapidly the AI industry is evolving. Just six months prior, conversations were predominantly centered around OpenAI’s advancements. The fast-paced nature of the sector demands constant innovation to maintain technical leadership, and while Claude’s current prominence is notable, it does not guarantee sustained market dominance.

The dynamics at play present a fascinating narrative for industry watchers. Although OpenAI retains significant advantages in brand recognition, a robust developer ecosystem, and substantial funding, the conversations at HumanX reveal a community that is increasingly receptive to alternatives that prioritize reliability alongside capability.

Interestingly, the drama surrounding OpenAI’s internal governance seemed to fade into the background at HumanX, as practitioners displayed a pragmatic focus on solutions that effectively address their business challenges. This practical perspective benefits whichever company can deliver the most reliable product in a competitive environment.

For Anthropic, the buzz generated at HumanX validates its measured approach to market engagement. By prioritizing enterprise relationships over flashy demonstrations aimed at capturing consumer attention, the company has fostered a reputation built on trust and quality. That strategy appears to be paying dividends at a time when attention is shifting rapidly across the AI landscape.

The enthusiasm surrounding Claude at the HumanX conference signals more than a brief moment of recognition for Anthropic; it shows how quickly competitive dynamics can evolve in the AI sector. While OpenAI continues to hold substantial advantages, the enterprise market is clearly open to alternatives that emphasize reliability and safety. As the race for AI leadership continues, the discussions in San Francisco indicate that practitioners are actively exploring their options, setting the stage for developments that could reshape the competitive landscape.

See also Bank of America Warns of Wage Concerns Amid AI Spending Surge

Bank of America Warns of Wage Concerns Amid AI Spending Surge OpenAI Restructures Amid Record Losses, Eyes 2030 Vision

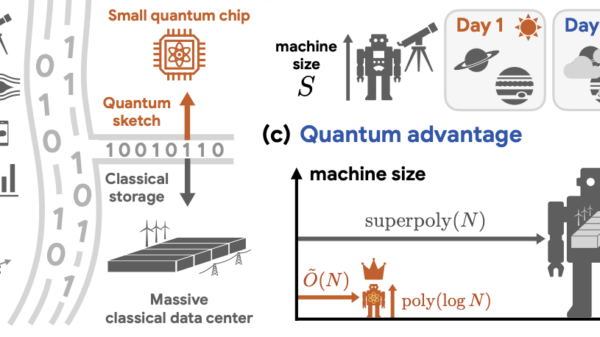

OpenAI Restructures Amid Record Losses, Eyes 2030 Vision Global Spending on AI Data Centers Surpasses Oil Investments in 2025

Global Spending on AI Data Centers Surpasses Oil Investments in 2025 Rigetti CEO Signals Caution with $11 Million Stock Sale Amid Quantum Surge

Rigetti CEO Signals Caution with $11 Million Stock Sale Amid Quantum Surge Investors Must Adapt to New Multipolar World Dynamics

Investors Must Adapt to New Multipolar World Dynamics