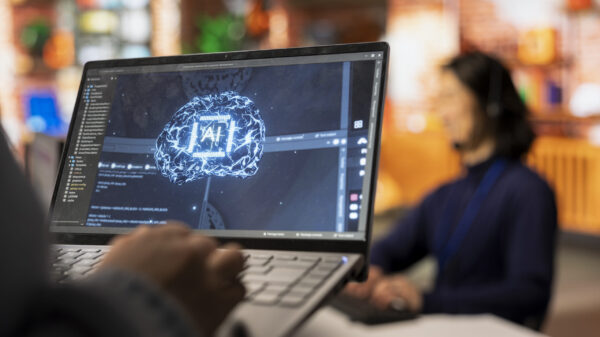

African organizations are experiencing an alarming surge in cyberattacks. Each organization faces an average of more than 3,000 attacks per week, the highest rate globally. According to a recent report from Check Point Software Technologies, the trend is driven by the rapid integration of artificial intelligence (AI) into business operations, which has outpaced the security measures needed to safeguard these systems.

The findings come from the firm’s AI Threat Landscape Report, which analyzed data from January and February 2026. As businesses across Africa increasingly adopt generative and agentic AI, many do so without sufficient visibility, governance, or protective measures in place.

Check Point researchers describe the current phase as the onset of the “Agentic Era”: the transition of AI from a productivity tool to an autonomous operational system that can execute tasks independently across enterprise environments. The implications for cybersecurity are profound.

In one documented case, a single developer used an AI-powered development environment to build a sophisticated Linux-based malware framework that included a modular command-and-control architecture, rootkits, and more than 30 post-exploitation plugins. Check Point Research (CPR) initially assessed that the framework was likely the product of a coordinated multi-person effort spanning several months. Operational security failures, however, revealed that it had been built by one individual leveraging AI tools.

This case underscores a critical concern noted in the report: AI is condensing the time and expertise necessary to develop complex cyberattacks, enabling lone actors to operate at scales previously associated with well-resourced criminal organizations or state-sponsored entities.

Further complicating matters, Check Point’s analysis of generative AI usage across enterprise networks during the same period revealed that approximately 3.2 percent of user prompts posed a significant risk of sensitive data leakage. This includes potential exposure of confidential business information, regulated data, and source code to external AI services. Alarmingly, high-risk activity was detected in 90 percent of organizations regularly employing generative AI tools.

Employees reportedly use 10 or more AI tools on average, creating what the report calls “Shadow AI” environments: AI deployments that remain invisible to traditional security controls and are therefore increasingly attractive targets for cybercriminals.

Ian van Rensburg, Head of Security Engineering for Africa at Check Point, emphasized the widening gap between attackers and defenders: “Attackers are operating at machine speed, while many organizations are still defending at human speed.” He stressed the need for a comprehensive approach to AI security that covers models, data, prompts, application programming interfaces (APIs), and autonomous agents, rather than focusing only on the surrounding infrastructure.

The report also highlights a growing governance gap: private-sector AI adoption is outpacing the development of national AI strategies in key African markets. The gap demands urgent attention because the convergence of European Union data regulations and African data protection frameworks is making cyber resilience a condition of trade. African exporters increasingly need to demonstrate compliance to maintain access to international markets.

Hendrik de Bruin, Head of Security Consulting for SADC at Check Point, pointed to the institutional stakes involved, noting, “Without clear risk classification, visibility, and accountability, AI systems can quickly become a blind spot rather than a competitive advantage. AI adoption at scale requires trust.” The continent is grappling with a critical shortfall of cybersecurity talent, with over 200,000 unfilled positions, heightening the need for local expertise rather than reliance on external resources.

Check Point advocates for the integration of security-by-design principles and risk-based governance into national AI strategies from the outset. The firm warns that organizations that treat security as an afterthought will find it increasingly challenging to unlock the economic potential of AI.