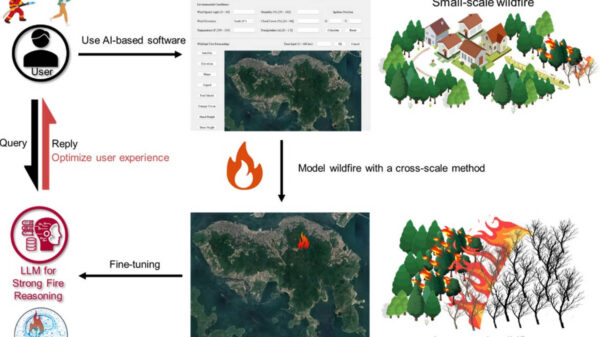

Anthropic is restricting access to its most advanced AI model, Claude Mythos, over concerns that its ability to detect software vulnerabilities could enable significant cyberattacks. The company announced on Tuesday that select technology giants and cybersecurity firms will gain early access to the model through Project Glasswing, a defensive initiative aimed at strengthening critical systems against potential exploitation.

Initial partners include industry leaders such as Amazon, Apple, Microsoft, Google, Nvidia, Cisco, Broadcom, CrowdStrike, JPMorgan Chase, Palo Alto Networks, and the Linux Foundation. Approximately 40 additional organizations responsible for maintaining critical infrastructure are also set to join the initiative. Anthropic has characterized Claude Mythos as “by far the most powerful AI model” it has developed to date.

“Claude Mythos Preview demonstrates what is now possible for defenders at scale,” the company stated in a blog post that was first reported by Fortune. Anthropic cautioned that adversaries will likely seek to exploit the same capabilities that make the model effective at vulnerability detection.

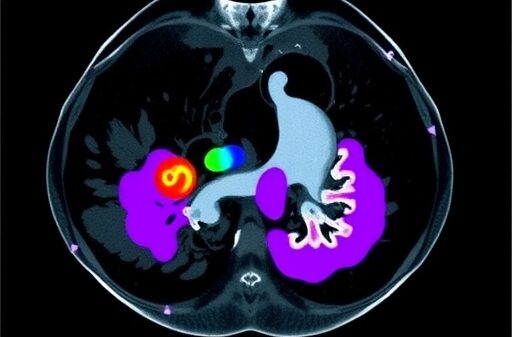

On the CyberGym benchmark, which evaluates AI agents on vulnerability analysis tasks, Mythos achieved a score of 83.1%, significantly surpassing the previous leader, Opus 4.6, which scored 66.6%. The model has reportedly identified thousands of previously unknown software vulnerabilities at a pace far exceeding that of human researchers, including a 27-year-old bug in the security-focused operating system OpenBSD.

Executives at Anthropic acknowledged that the decision to release such a powerful AI model involved considerable internal debate. Dianne Penn, Anthropic’s head of research product management, pointed out the importance of the initiative.

“We really do view this as a first step for giving a lot of cyber defenders a head start on a topic that will be increasingly important,” Penn told CNBC, underscoring the urgency of enhancing cybersecurity measures in light of the model’s capabilities. Participants in Project Glasswing will utilize Mythos to scan and secure their systems while also sharing findings with industry peers. The initiative includes $100 million in usage credits for participating organizations and $4 million in direct donations to support open-source security projects.

This restricted rollout follows earlier warnings from Anthropic to government officials that Mythos could make large-scale cyberattacks significantly more feasible this year. Company documentation revealed in March suggested that the unreleased model represents a major leap in AI capabilities within cybersecurity.

“Security research like this is especially important for open-source projects,” the company emphasized in its announcement. “By giving maintainers of critical codebases early access to these capabilities through Project Glasswing, we aim to help secure foundational internet infrastructure.”

While Anthropic intends to eventually make Mythos-class models publicly available, access will remain limited to Project Glasswing participants for now. The emphasis on early access reflects a broader strategy of proactively addressing cybersecurity threats that leverage advanced AI.
