The U.S. Department of Defense has opened negotiations with major technology firms to develop advanced artificial intelligence systems for identifying vulnerabilities in China’s power grids and utilities. The development, reported by the Financial Times, marks a significant shift in how the military seeks to use digital intelligence for national security.

The initiative is meant to speed up digital intelligence gathering and target mapping, potentially enabling the American military to disable enemy systems quickly during an armed conflict. However, the U.S. government’s push for these technologies has raised concerns among AI developers about the ethics of using their innovations in offensive operations.

A notable point of contention has emerged between the White House and AI laboratories, particularly after an ultimatum issued to Anthropic. The administration has threatened to terminate existing contracts, or even seize proprietary technology, if Anthropic’s management does not agree to military use of its Claude AI model. The ultimatum has intensified debate over the ethical use of AI, as developers seek to limit its deployment in autonomous weapons and large-scale surveillance systems.

The Pentagon’s demand for “indefinite use” of AI tools without restrictions further strains the relationship between the government and technology firms. Employees at leading organizations such as OpenAI and Google have voiced similar concerns and called for limits on how AI is applied. The U.S. government, however, argues that without an effective deterrent against China, the military cannot afford to operate under restrictions that could hinder its capabilities.

Former high-ranking CIA officials suggest that integrating AI into military operations could dramatically expand the scale at which sensitive networks can be scanned. Automated algorithms could take over work now done by many specialists, operating much like a thief who tries numerous locks in search of an unguarded way into enemy infrastructure. Such capabilities would make the U.S. military more effective at cyber espionage.

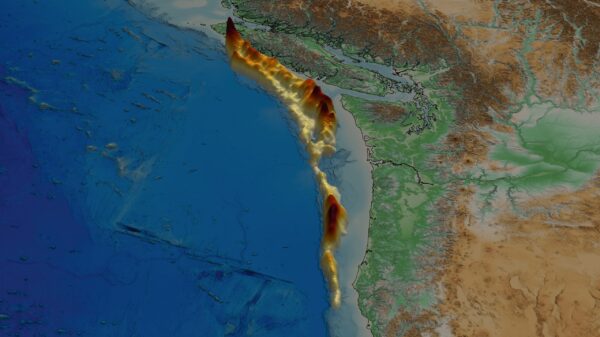

The primary targets under scrutiny include power plants located near data centers, since disabling them could significantly undermine China’s artificial intelligence capabilities and give U.S. forces a critical advantage in a future conflict. The intersection of AI and military strategy points to a rapid evolution in how both nations may approach warfare.

The development raises broader questions about the role of technology and ethics in modern warfare. As the race for technological superiority intensifies, the implications for international security and the ethical governance of AI are likely to remain contentious. The ongoing negotiations, and the technologies that result from them, could redefine power dynamics in the digital age as nations try to balance national security against ethical considerations.