A team from Beihang University has introduced CASE, a framework for lifelong model editing of large language models (LLMs). The paper, titled “CASE: Conflict-assessed Knowledge-sensitive Neuron Tuning for Lifelong Model Editing,” is slated for presentation at WWW 2026 (The ACM Web Conference 2026). The framework targets two problems that arise when LLMs are updated continuously: “catastrophic forgetting” of earlier knowledge, and excessive resource consumption caused by adding parameters for each update.

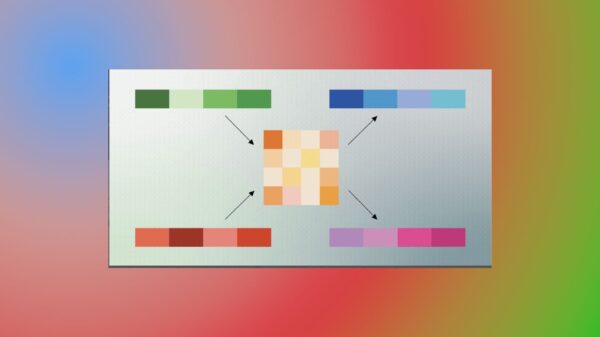

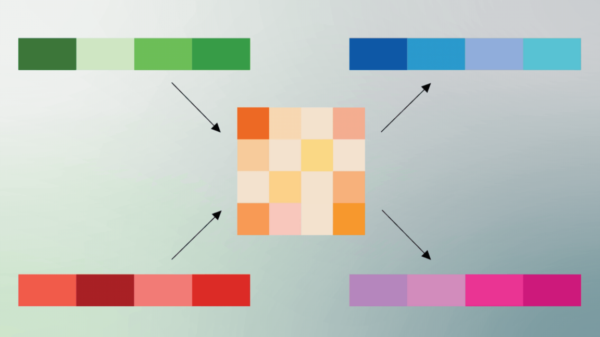

As LLMs adapt to new information, they face two main challenges. First, existing methods often cause models to forget previously learned content, because later updates conflict with earlier ones. Second, methods that avoid this loss by adding parameters for every edit drive up computational and storage demands. CASE takes a different approach: it scores each edit for conflict, places conflicting knowledge in separate parameter space, and lets non-conflicting knowledge share space. It then fine-tunes only the “key neurons” that are most responsive to the knowledge being edited, which reduces the risk of disturbing parameters unrelated to the edit.

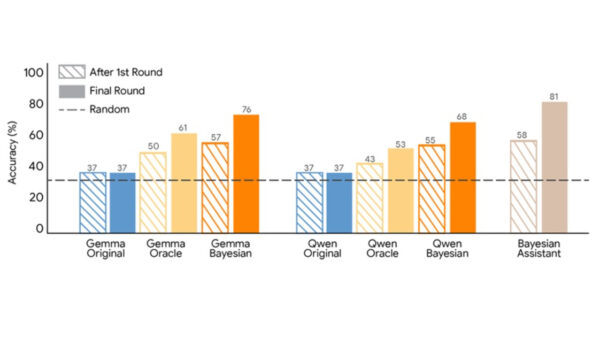

In the reported experiments, CASE improved average accuracy by nearly 10% over prevailing methods after 1,000 consecutive knowledge edits. It also remains parameter-efficient, with additional storage requirements under 1MB, whereas many existing frameworks consume significantly more resources.

LLMs suffer from “knowledge aging” and “fact hallucination”: the facts they learned go out of date, and they sometimes state things that are false. The goal of “lifelong model editing” is to let an LLM keep learning and correcting knowledge, much as a person does, without compromising what it already knows. Existing methods, however, often fall into two traps: adding parameters indiscriminately, and failing to target the right neurons during updates. Both lead to an accumulation of irrelevant changes that makes conflicts between edits worse.

The CASE framework tackles these issues with two modules. The first, the Conflict-Assessed Editing Allocation (CAA) module, evaluates how much a new knowledge edit conflicts with what has already been edited. Drawing on gradient-conflict analysis from multi-task learning, the CAA module determines whether the new knowledge conflicts with existing parameters and decides where to allocate it. If the new knowledge is compatible with existing knowledge, it shares parameter space; if not, the module creates a new sub-space so that old information is not overwritten.
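The allocation rule described above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: the article does not give the exact conflict score, so the sketch assumes cosine similarity between gradient vectors (a common choice in multi-task learning), and the names `cosine`, `allocate`, and `threshold` are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two gradient vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def allocate(edit_grad, subspaces, threshold=0.0):
    """Assign an edit to a sub-space and return that sub-space's index.

    `subspaces` is a list of accumulated gradient vectors, one per sub-space.
    ASSUMPTION: an edit "conflicts" with a sub-space when the cosine similarity
    of their gradients falls below `threshold`; the paper's score may differ.
    """
    best_idx, best_score = None, float("-inf")
    for i, acc in enumerate(subspaces):
        score = cosine(edit_grad, acc)
        if score > best_score:
            best_idx, best_score = i, score
    if best_idx is not None and best_score >= threshold:
        # Compatible: share the sub-space and fold the gradient into its summary.
        subspaces[best_idx] = [a + g for a, g in zip(subspaces[best_idx], edit_grad)]
        return best_idx
    # Conflicts with every existing sub-space (or none exist yet): open a new one.
    subspaces.append(list(edit_grad))
    return len(subspaces) - 1

subspaces = []
first = allocate([1.0, 0.0], subspaces)    # no sub-space yet, so one is created
second = allocate([0.9, 0.1], subspaces)   # points the same way, so it shares
third = allocate([-1.0, 0.0], subspaces)   # opposite direction, so a new sub-space
```

In this toy run the first two edits end up in the same sub-space and the third, whose gradient points the opposite way, gets its own. Raising `threshold` makes the rule stricter and creates more sub-spaces.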

The second module, the Knowledge-sensitive Neuron Tuning (KNT) strategy, fine-tunes only the neurons most sensitive to the knowledge being edited, so the rest of the model is left undisturbed. Sensitivity is measured with the Fisher information matrix, which scores individual neurons so that only those most crucial to the current knowledge are updated. KNT also regularizes the activations associated with previously edited knowledge, which keeps retained information stable during updates.
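To make the neuron-selection idea concrete, here is a minimal sketch under stated assumptions. It approximates the diagonal of the Fisher information by the mean squared gradient over samples, sums that over each neuron's weights to get a per-neuron score, and updates only the top-k neurons. The function names, the top-k rule, and the plain gradient step are illustrative choices, not details confirmed by the article, and the activation regularizer that KNT also uses is omitted.

```python
def neuron_sensitivity(per_sample_grads):
    """Per-neuron score from an empirical diagonal Fisher approximation.

    `per_sample_grads[s][j]` holds the gradients of neuron j's weights for
    sample s. The score of neuron j is the mean over samples of the sum of
    squared gradients of its weights.
    """
    n_samples = len(per_sample_grads)
    scores = [0.0] * len(per_sample_grads[0])
    for sample in per_sample_grads:
        for j, row in enumerate(sample):
            scores[j] += sum(g * g for g in row) / n_samples
    return scores

def top_k_mask(scores, k):
    """Boolean mask that is True for the k highest-scoring neurons."""
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
    keep = set(ranked[:k])
    return [j in keep for j in range(len(scores))]

def masked_update(weights, grads, mask, lr=0.1):
    """Gradient step applied only to neurons selected by `mask`."""
    return [
        [w - lr * g for w, g in zip(w_row, g_row)] if selected else list(w_row)
        for w_row, g_row, selected in zip(weights, grads, mask)
    ]

# Toy layer: 3 neurons with 2 weights each, gradients from 2 samples.
grads = [
    [[0.1, 0.0], [1.0, -1.0], [0.0, 0.2]],
    [[0.0, 0.1], [2.0, 0.0], [0.1, 0.0]],
]
scores = neuron_sensitivity(grads)
mask = top_k_mask(scores, k=1)
weights = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
updated = masked_update(weights, grads[0], mask, lr=0.5)
```

Only the middle neuron, whose gradients are largest, is selected and changed; the other two rows of `weights` come back untouched. That is the property the article attributes to KNT: edits stay local to the neurons that matter for the current knowledge.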

To validate CASE, the team ran tests on several base models, including LLaMA2-7B, Qwen2.5-7B, and LLaMA3-8B-Instruct, and compared it against established lifelong editing frameworks such as GRACE and WISE. On a question-answering task built on the ZsRE dataset, CASE held a clear accuracy advantage, maintaining 95% accuracy after 1,000 edits while leading competitors declined substantially. CASE also achieved a 100% locality preservation rate, meaning that knowledge unrelated to the edits was left unchanged.

In a second benchmark on hallucination correction, CASE reduced perplexity, used there as a proxy for the factuality of generated text, by 60%, outperforming rival methods that struggled to maintain consistent performance. CASE is also efficient: its additional parameters stay below 1MB, and its inference time is comparable to that of an unedited model, which supports its practical applicability.

The experiments also examined stability: CASE maintains consistent performance across a range of hyperparameter settings, so it adapts to different scenarios without extensive tuning. This points toward more resilient and efficient LLMs that can evolve alongside new information while retaining foundational knowledge.
