The rise of artificial intelligence (AI) has ignited a dual narrative—one steeped in exhilarating potential and the other shadowed by significant concerns. While AI applications promise to enhance productivity and facilitate various tasks, the reality is that these systems do not always deliver the expected outcomes. This discrepancy often arises from the way AI is utilized; it is merely a tool, not a universal solution applicable in every scenario.

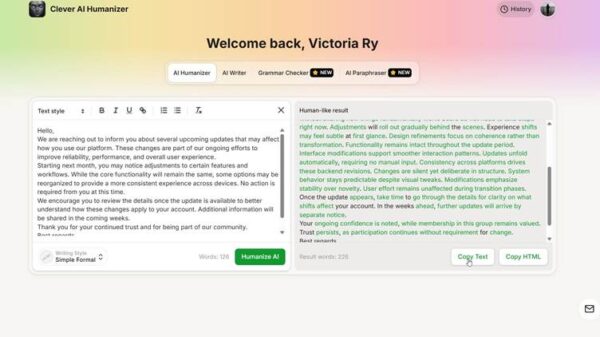

Many users engage with AI primarily as an answer engine, posing questions, generating lists, and supplementing research efforts. However, the effectiveness of generative AI hinges on the interplay of several factors, including the quality of the data, the decision-making algorithms used, and, crucially, the context in which prompts are framed. This complexity leads to what can be understood as intermittent reinforcement.

Intermittent reinforcement occurs when a behavior is rewarded, but those rewards are unpredictable. In the AI context, users often formulate prompts with the anticipation of receiving a specific response. The challenge is that generative AI does not operate on a straightforward cause-and-effect basis: the same prompt can yield different responses from one attempt to the next. Expecting otherwise can cultivate a cycle of compulsive dependence, similar to behaviors observed in various forms of addiction. Users repeatedly refine their prompts, awaiting a satisfactory return, and so perpetuate a cycle of expectation that frequently goes unfulfilled.

As we examine the intersection of AI use and potential addiction, it is essential to consider addiction as a form of compulsive dependence. When users do not receive the desired output, they may continue to reshape their prompts, clicking through iterations until they get a response that partly meets their needs, even if it doesn’t fully satisfy their original intent. This process can become an endless loop: pressing the virtual lever time and again in search of the “perfect prompt.”

In essence, addiction often stems from engaging in behaviors that produce an altered emotional state, whether by relieving stress or boosting mood. In the case of generative AI, the drive to craft an ideal prompt may trigger the release of neurochemicals such as endorphins or adrenaline, fostering a sense of reward with each small success. The pursuit of the perfect prompt can thus become a compelling cycle, drawing users back repeatedly.

The psychological implications of this dynamic are profound. Much like traditional addictions—whether to substances or other compulsive behaviors—the temporary satisfaction derived from AI interactions can lead to a long-term dependence, where users continuously seek that fleeting moment of clarity or satisfaction. Each session with AI can feel like a step toward an ideal outcome, yet the reality is that the satisfaction is often transient, leading users to return for more in search of a longer-lasting fix.

This compulsive engagement raises questions about the broader impact of AI on human behavior and society. As generative AI technologies continue to advance and integrate into daily life, the potential for compulsive dependence warrants attention. Users may not only struggle with their expectations of AI but could also face challenges in managing their emotional responses to what these systems can provide.

As AI technology evolves, the conversation must shift toward understanding how to harness its capabilities responsibly. Awareness of the potential for compulsive behaviors will be essential for users, developers, and policymakers alike. Balancing the promise of AI with an understanding of its limitations could help mitigate the risks associated with its misuse, ensuring that this powerful tool serves to enhance human capability rather than undermine it.
