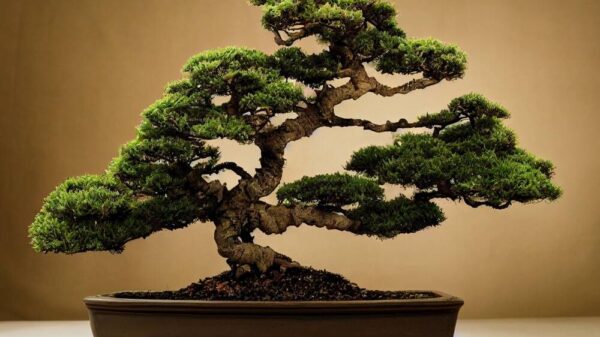

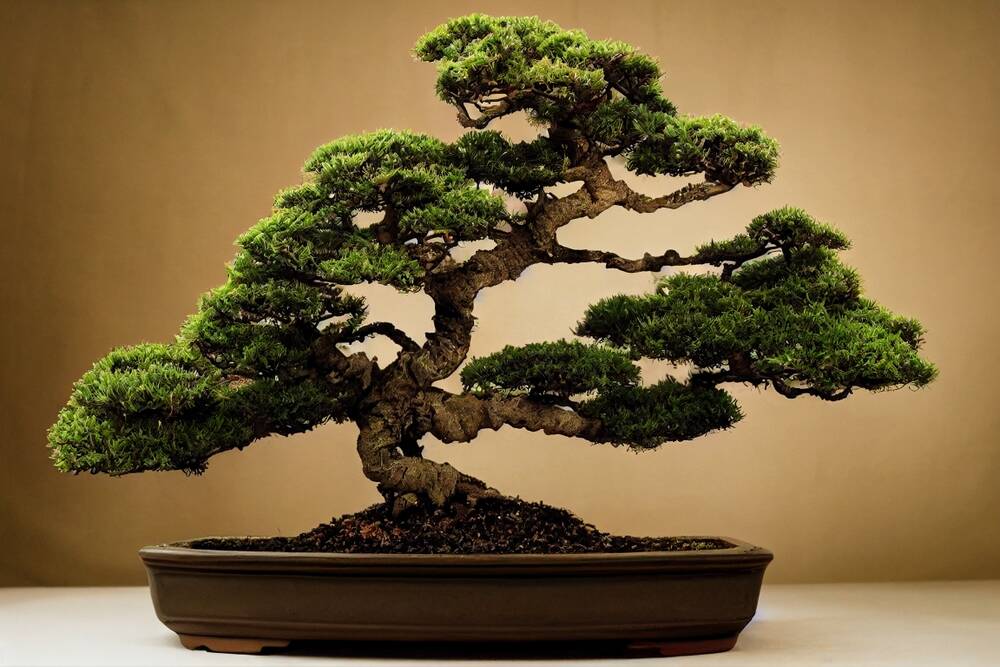

PrismML, an AI startup that emerged from Caltech, has released Bonsai 8B, a 1-bit large language model designed to make AI more efficient and to run well on mobile devices and other hardware. With the release, PrismML is challenging conventional models that demand extensive compute and memory, offering a more compact alternative that it says maintains high performance.

Bonsai 8B fits into just 1.15 GB of memory, and PrismML says it delivers more than ten times the intelligence density of its full-precision counterparts. The company claims it is 14 times smaller, eight times faster, and five times more energy-efficient on edge hardware, while remaining competitive with other models in its parameter class.

“Our first proof point is 1-bit Bonsai 8B, a 1-bit model that fits into 1.15 GB of memory and delivers over 10x the intelligence density of its full-precision counterparts,” PrismML stated in a social media post. The company positions the model for applications constrained by memory and power.

Large language models, typically based on the Transformer architecture, consist of neural networks with millions or billions of weights. These weights set the strength of the connections between neurons and so determine how the model performs. The memory a model needs depends on the numerical precision used to store each weight, and at higher precisions that footprint places a significant burden on device resources.
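To make the link between precision and memory concrete, the following Python sketch estimates how much storage the weights alone of an 8-billion-parameter model need at 32-bit, 16-bit, and 1-bit precision. It is a back-of-the-envelope calculation, not PrismML's accounting: the `weight_memory_gb` helper is hypothetical, the parameter count is the nominal 8 billion, and the estimate ignores scale factors, activations, and runtime caches.

```python
# Hypothetical helper: estimate memory needed just to store a model's weights
# at a given precision. Ignores activations, KV cache, and any per-group
# scale factors, so real footprints will be somewhat larger.


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    total_bits = num_params * bits_per_weight
    return total_bits / 8 / 1e9


if __name__ == "__main__":
    params = 8e9  # assumption: nominal 8B parameters, not an exact count
    for label, bits in [("FP32", 32), ("FP16", 16), ("1-bit", 1)]:
        print(f"{label:>5}: {weight_memory_gb(params, bits):6.2f} GB")

    # Compare a 16-bit copy with the reported 1.15 GB Bonsai 8B footprint.
    ratio = weight_memory_gb(params, 16) / 1.15
    print(f"FP16 / 1.15 GB = {ratio:.1f}x")
```

Run as a script, it reports 32, 16, and 1 GB respectively, and a 16-bit-to-1.15 GB ratio of about 13.9, roughly in line with the company's “14 times smaller” claim if that claim compares against a 16-bit copy of the model.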

PrismML’s approach departs from conventional practice by quantizing each weight to its sign, either -1 or +1, and applying a shared scale factor to each group of weights. Weights are more commonly stored as 16-bit or 32-bit floating-point numbers. The company cites earlier research on quantization, including notable papers from 2017 and 2024 that explore low-bit quantization strategies.
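The sign-plus-scale scheme can be illustrated with a minimal Python sketch. It is not PrismML's implementation: the helper names are hypothetical, the group size is a free parameter, and each group's scale is assumed to be the mean absolute value of its weights, the choice that minimizes squared reconstruction error for sign quantization (as in XNOR-Net). How PrismML actually computes and stores its scales is not described here.

```python
# Minimal sketch of group-wise 1-bit (sign) quantization.
# Assumptions (not confirmed by PrismML): group size is a free parameter, and
# each group's scale is the mean absolute value of its weights, which
# minimizes squared error between the weights and scale * sign(weights).
from typing import List, Tuple


def quantize_groups(weights: List[float], group_size: int) -> List[Tuple[float, List[int]]]:
    """Split weights into groups; keep one float scale and a +/-1 sign per weight."""
    groups = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        scale = sum(abs(w) for w in group) / len(group)
        signs = [1 if w >= 0 else -1 for w in group]  # zero maps to +1
        groups.append((scale, signs))
    return groups


def dequantize_groups(groups: List[Tuple[float, List[int]]]) -> List[float]:
    """Reconstruct approximate weights as scale * sign."""
    return [scale * s for scale, signs in groups for s in signs]


def bits_per_weight(group_size: int, scale_bits: int = 16) -> float:
    """Storage cost: 1 sign bit per weight plus one shared scale per group."""
    return 1 + scale_bits / group_size


if __name__ == "__main__":
    w = [0.4, -0.2, 0.1, -0.5, 0.3, 0.3, -0.1, 0.05]
    q = quantize_groups(w, group_size=4)
    print(q)
    print(dequantize_groups(q))
    print(bits_per_weight(128))  # hypothetical group size, 16-bit scale
```

Storing one 16-bit scale per group adds 16/G bits per weight on top of the sign bit, so a hypothetical group size of 128 costs 1.125 bits per weight; for 8 billion weights that is about 1.125 GB, in the same range as Bonsai 8B's reported 1.15 GB. PrismML's actual group size and scale format may differ.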

The 1-bit Bonsai model builds on the work of Babak Hassibi, a professor of electrical engineering at Caltech and PrismML's CEO. Hassibi said the new architecture avoids the typical drawbacks of low-bit quantization, such as poor instruction following and unreliable multi-step reasoning. “We spent years developing the mathematical theory required to compress a neural network without losing its reasoning capabilities,” he stated. “We see 1-bit not as an endpoint, but as a starting point.”

The architecture reflects PrismML's focus on intelligence per unit of compute and energy. To quantify this, the company introduces “intelligence density,” which it defines as the negative logarithm of the model’s average error rate, normalized by model size.
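The intelligence density definition translates directly into a short formula. The Python sketch below assumes a natural logarithm and a model size measured in gigabytes; PrismML's exact log base, benchmark set, and method of averaging error rates are assumptions here, and the example inputs are hypothetical, not published results.

```python
import math


def intelligence_density(avg_error_rate: float, size_gb: float) -> float:
    """Return -log(average error rate) / model size in GB.

    Assumption: natural log. The benchmark set and the way error rates are
    averaged are not specified, so this only illustrates the definition.
    """
    if not 0 < avg_error_rate < 1:
        raise ValueError("avg_error_rate must be in (0, 1)")
    if size_gb <= 0:
        raise ValueError("size_gb must be positive")
    return -math.log(avg_error_rate) / size_gb


if __name__ == "__main__":
    # Hypothetical inputs chosen only to show how the metric behaves:
    # the same error rate, with one model 14x smaller than the other.
    big = intelligence_density(avg_error_rate=0.25, size_gb=16.0)
    small = intelligence_density(avg_error_rate=0.25, size_gb=16.0 / 14)
    print(f"{big:.3f}/GB vs {small:.3f}/GB, ratio {small / big:.1f}")
```

Because the error rate enters through a logarithm, halving a model's error adds only ln 2 ≈ 0.69 to the numerator, while shrinking the model divides the whole score. A model with the same error rate but 14 times less memory therefore scores 14 times higher, which is why the metric strongly favors compressed models.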

On this measure, Qwen3 8B, which scores slightly better than Bonsai 8B on various benchmarks, registers just 0.10/GB, while Bonsai 8B scores 1.06/GB, roughly the tenfold advantage the company advertises. Metrics like these serve a marketing purpose, but PrismML argues that the real significance of its models lies in enabling AI deployment outside cloud datacenters.

The company envisions its models powering on-device agents, real-time robotics, secure enterprise systems, and other applications where memory, bandwidth, or compliance constraints can hinder deployment. “1-bit Bonsai 8B runs natively on Apple devices (Mac, iPhone, iPad) via MLX, on Nvidia GPUs via llama.cpp CUDA,” the company stated. Two smaller models, 1-bit Bonsai 4B and 1-bit Bonsai 1.7B, are also available under the Apache 2.0 License.

If PrismML's claims hold up in independent testing, its 1-bit architecture could mark a shift toward models that prioritize efficiency without sacrificing performance.
