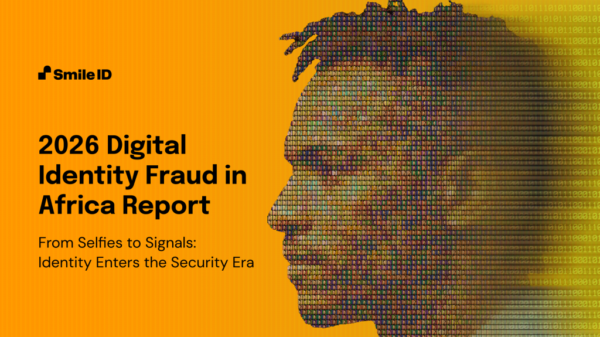

The rise of artificial intelligence has marked a significant shift in identity verification practices, with a new report from Smile ID indicating that traditional “identity selfies” are now inadequate to combat sophisticated fraud tactics. As AI-driven techniques evolve, relying solely on visual verification has become increasingly ineffective in safeguarding digital spaces.

In its report titled “From Selfies to Signals,” Smile ID outlines three trends reshaping the security landscape. First, the focus has shifted from what the camera sees to the integrity of the verification process itself. Criminals are increasingly bypassing the camera altogether and injecting synthetic media directly into identity checks. That makes capture integrity essential: detecting any manipulation of the device or operating system during verification.

Secondly, the nature of fraud has transformed. Attackers are no longer primarily concerned with breaching systems; rather, they are exploiting the “mid-journey” experience. This includes targeting account logins, recovery processes, device changes, and high-value transactions within already verified accounts. The goal has shifted to exploiting vulnerabilities in ongoing user interactions.

Finally, the report highlights the emergence of “industrialized” fraud. With affordable tools now available, criminal networks can automate the reuse of biometrics and transfer funds across platforms at a scale unattainable by traditional manual review processes. This change has democratized high-end fraud techniques, making them accessible to a broader range of criminals.

The implications of these developments are significant. As fraudsters deploy increasingly sophisticated deepfakes and automated injection attacks, companies must reframe their strategies. Digital identity is no longer a one-time compliance measure but a continuous security surface that must be monitored and protected throughout the customer lifecycle.

Data from more than 200 million identity checks in 2025 illustrates this shift in criminal behavior. Authentication fraud attempts targeting existing accounts are now five times more common than onboarding fraud. Mobile SDK signals (device data) are responsible for blocking 90% of fraud, while image analysis alone fails to provide adequate protection. Monthly “injection-style” fraud attempts, which use emulators and virtual cameras, have surpassed 100,000, and duplicate attempts using stolen or fraudulent data have doubled year over year.

Mark Straub, CEO of Smile ID, asserts that “fraud is no longer a ‘KYC’ problem — it is a continuous cybersecurity challenge.” He emphasizes that leveraging privacy-preserving indicators throughout the customer lifecycle enables real-time adaptation to emerging threats. In this new era of security, ecosystem-wide protection is essential for safeguarding individuals against increasingly sophisticated attacks.

As organizations look to fortify their defenses in 2026 and beyond, Network Intelligence is expected to play a crucial role. By utilizing privacy-preserving metadata and internally tuned large language models, companies can identify “coordinated abuse”—patterns of fraud that may appear legitimate in isolation but reveal criminal networks when analyzed across an entire ecosystem. This proactive approach is vital in an age where the tactics of fraudsters continue to evolve at an alarming rate.
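To make the idea of “coordinated abuse” concrete, here is a minimal sketch of one way such a pattern could surface. It is not Smile ID's method: the function name, the `(account_id, device_hash)` event shape, and the threshold are all hypothetical and chosen only for illustration. Each event looks ordinary by itself, but grouping by a hashed device identifier shows when many accounts share one device.

```python
from collections import defaultdict


def find_shared_device_clusters(events, min_accounts=3):
    """Return device hashes used by at least `min_accounts` distinct accounts.

    `events` is an iterable of (account_id, device_hash) pairs. Both fields
    and the threshold are illustrative assumptions, not a real schema.
    """
    accounts_by_device = defaultdict(set)
    for account_id, device_hash in events:
        accounts_by_device[device_hash].add(account_id)

    # Keep only devices shared across enough distinct accounts.
    return {
        device: sorted(accounts)
        for device, accounts in accounts_by_device.items()
        if len(accounts) >= min_accounts
    }


# Hypothetical events: three accounts share dev-A; the others do not overlap.
events = [
    ("acct-1", "dev-A"), ("acct-2", "dev-A"), ("acct-3", "dev-A"),
    ("acct-4", "dev-B"), ("acct-5", "dev-C"), ("acct-5", "dev-C"),
]
clusters = find_shared_device_clusters(events)
```

In this toy data only `dev-A` is flagged, because repeated checks from the same account on `dev-C` do not count as distinct accounts. A production system would presumably weigh many more signals than a single shared identifier.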
