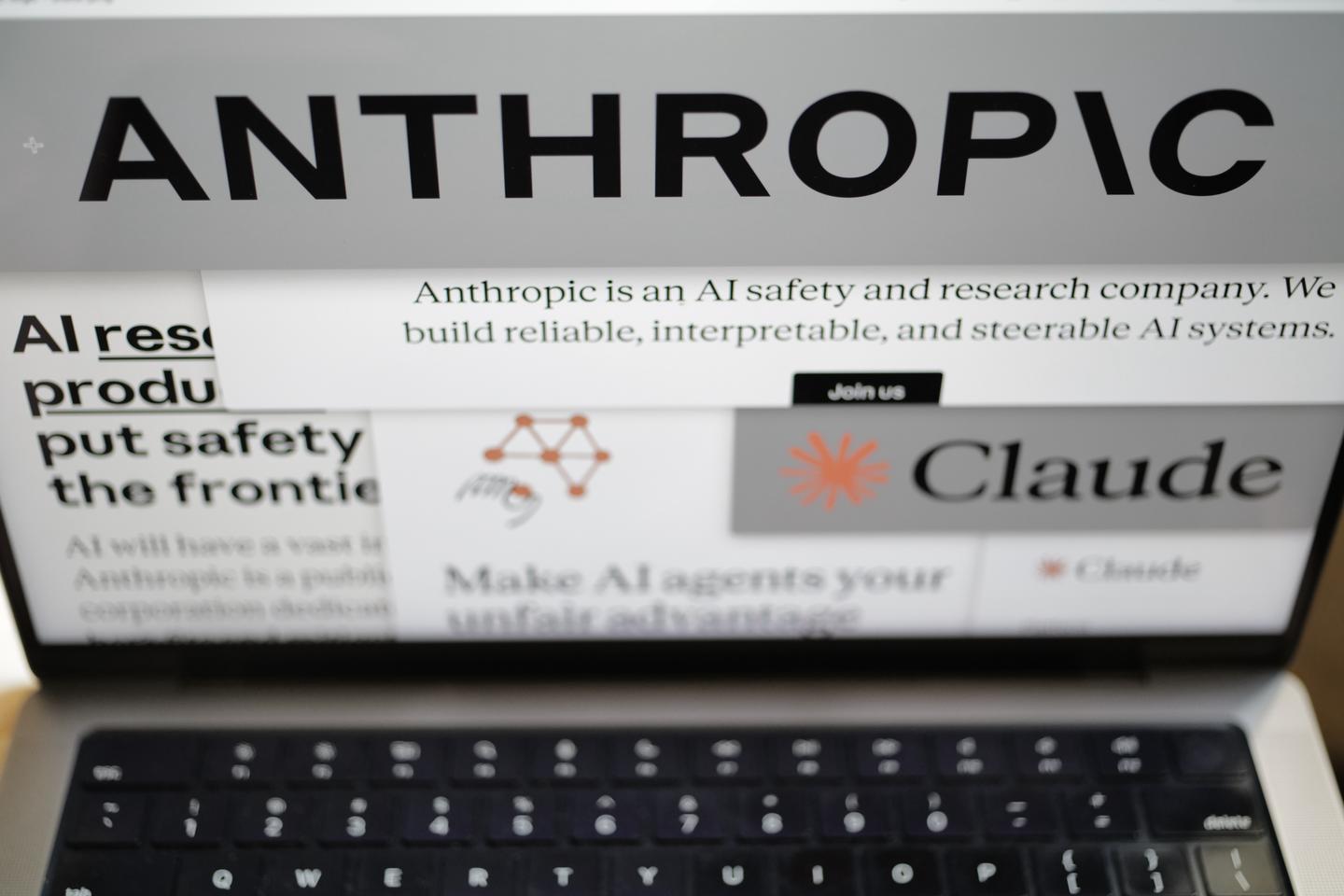

AI company Anthropic announced on February 26, 2026, that it will not permit the U.S. Defense Department to use its technology without restrictions, despite pressure from the Pentagon. Chief executive Dario Amodei reiterated the company’s stance, stating, “These threats do not change our position: we cannot in good conscience accede to their request.”

The Pentagon had given Anthropic until 5:01 PM (22:01 GMT) on Friday to agree to unconditional military use of its technology, even at the expense of the company’s ethical standards, or face potential enforcement under emergency federal powers. Amodei emphasized that while the Pentagon and intelligence agencies have used Anthropic’s models for national defense, the company draws a clear ethical line against their use for mass surveillance of U.S. citizens and fully autonomous weaponry.

“Using these systems for mass domestic surveillance is incompatible with democratic values,” Amodei stated, adding that current AI capabilities are not yet reliable enough to warrant their deployment in lethal autonomous weapons without human oversight. “We will not knowingly provide a product that puts America’s warfighters and civilians at risk,” he said.

The Pentagon’s ultimatum followed meetings with Anthropic earlier in the week, in which officials made clear that failure to comply could result in the company being labeled a supply chain risk. This designation, typically assigned to firms from adversarial nations, could severely hinder Anthropic’s ability to work with the U.S. government and damage its reputation.

The Pentagon recently confirmed that Elon Musk’s Grok system had been approved for use in classified settings, while other AI companies, including OpenAI and Google, are nearing similar clearances. These approvals add competitive pressure on Anthropic to reconsider its position.

Anthropic was contracted alongside these companies last year under a $200 million agreement to provide AI models for various military applications. The company was founded in 2021 by former OpenAI employees with the philosophy that AI development should prioritize safety, a principle that now places it at odds with the Pentagon and the White House.

Amodei said that while military decisions rightly belong to the Department of War, he believes there are specific instances where AI can undermine democratic values rather than defend them. The conflict highlights the growing tension between ethical AI development and national security imperatives.

The situation raises questions about the future of AI in military applications and the ethical implications of its deployment. As the debate intensifies, Anthropic’s commitment to its principles may set a precedent for other tech companies facing similar pressures.
