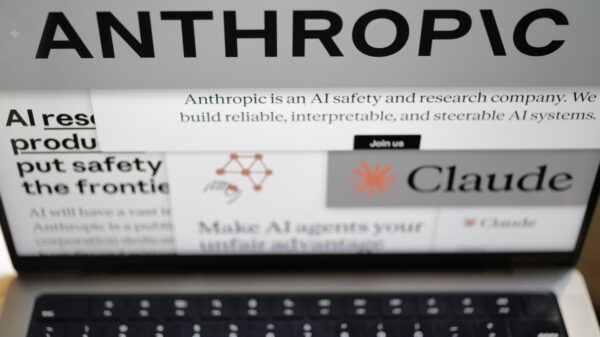

WASHINGTON (AP) — The Trump administration has directed all U.S. agencies to cease using Anthropic’s artificial intelligence technology, marking a significant escalation in a public confrontation between the government and the company over AI safety protocols. This decision, announced on Friday, follows a series of social media criticisms from President Donald Trump, Defense Secretary Pete Hegseth, and other officials, who accused Anthropic of jeopardizing national security.

The conflict arose after CEO Dario Amodei declined to grant the military unrestricted access to the company’s AI tools by a specified deadline, citing concerns that such permissions could lead to violations of the safeguards built into its technology. Trump took to social media, declaring, “We don’t need it, we don’t want it, and will not do business with them again!”

Hegseth further labeled Anthropic as a “supply chain risk,” a classification typically reserved for foreign adversaries, which could undermine the company’s relationships with other businesses. Anthropic had requested assurances from the Pentagon that its AI chatbot, Claude, would not be utilized for mass surveillance of American citizens or in fully autonomous weapons systems. While the Pentagon stated it would deploy the technology only in lawful manners, it insisted on full access without limitations.

This situation reflects broader concerns regarding the role of AI in national security, especially as the technology becomes increasingly sophisticated. The government’s push to assert control over Anthropic’s internal decision-making underscores the contentious atmosphere surrounding AI’s potential uses in lethal operations, sensitive information management, and governmental surveillance practices.

Trump criticized Anthropic’s attempts to negotiate with the military, asserting that most agencies must immediately stop using its AI, while allowing the Pentagon a six-month period to phase out the technology already integrated into military platforms. He admonished the company to “better get their act together, and be helpful,” warning of “major civil and criminal consequences” if it did not comply.

The standoff intensified after months of private discussions erupted into a public debate. In response to the government’s new contract language, which Anthropic argued would allow critical safeguards to be disregarded, Amodei stated that his company “cannot in good conscience accede” to such demands. While Anthropic can absorb the loss of this contract, the implications of the government’s actions could reverberate more widely, especially given the company’s rapid ascent from a small research lab in San Francisco to a significant player in the AI sector.

The unfolding dispute has sent shockwaves through Silicon Valley, with many venture capitalists, prominent AI scientists, and employees of competing firms such as OpenAI and Google expressing support for Amodei’s stance. The dispute may benefit Elon Musk’s rival chatbot, Grok, which the Pentagon has expressed interest in incorporating into classified military networks. Musk, aligning himself with the Trump administration, remarked on social media that “Anthropic hates Western Civilization.”

By contrast, Sam Altman, CEO of OpenAI, voiced support for Anthropic and highlighted the need for ethical considerations in AI companies’ operations. In a CNBC interview, he described the Pentagon’s threats as “concerning” and affirmed that OpenAI shares similar ethical boundaries regarding AI applications. Amodei worked at OpenAI before co-founding Anthropic in 2021.

Retired Air Force General Jack Shanahan, who previously led the Pentagon’s AI initiatives, argued that targeting Anthropic distracts from the broader issues at stake. He noted that Claude is already in widespread use across various government sectors and emphasized that the red lines drawn by Anthropic were “reasonable.” Shanahan concluded that AI models such as Claude and Grok are still not fully equipped for high-stakes national security applications, particularly autonomous weaponry.

As the situation develops, the implications of the clash between the Trump administration and Anthropic may set a precedent for future interactions between technology companies and government entities, particularly in the sensitive realm of artificial intelligence.
