Artificial intelligence (AI) has moved from experimental use in innovation labs to an integral role in enterprise operations, particularly in compliance, risk, and cybersecurity functions. Historically, these areas have adopted new technologies more cautiously than other business functions. However, recent trends indicate a significant uptick in the deployment of AI tools across these domains, with most organizations now leveraging digital solutions for audits, control monitoring, and risk management.

According to Patrick Sullivan, VP of Strategy and Innovation at A-LIGN, automation and analytics are now essential for businesses to keep pace with escalating regulatory demands and increasing data volumes. What began as tentative experimentation with generative AI has evolved rapidly into operational applications, with AI systems now aiding in evidence collection, risk identification, continuous monitoring, and threat detection. As a result, AI is no longer merely a supplementary tool in compliance processes; it is reshaping how such work is conducted.

This transition creates a paradox. While AI enhances efficiency and visibility, it also introduces new governance and regulatory challenges. Companies are now tasked with using AI to bolster compliance while simultaneously establishing frameworks to manage the risks associated with AI itself. For executives and compliance leaders, grasping this dual reality is critical.

The broad application of AI often obscures important distinctions in its capabilities. Present-day enterprise AI primarily relies on machine learning models trained on extensive datasets to identify patterns, classify information, and generate predictions. In compliance contexts, these capabilities have tangible operational implications. AI increasingly dictates which risks are highlighted, what evidence is flagged, and which activities take precedence for review. Automated systems can analyze large datasets and escalate potential compliance issues far more rapidly than traditional methods.

However, as AI begins to influence compliance workflows, several key questions arise. How accurate are the automated conclusions generated by these systems? Who bears responsibility when outputs are erroneous? And how can organizations maintain oversight when decision-making becomes partially automated? Compliance leaders are now challenged not only to assess how AI enhances processes but also to evaluate its impact on decision-making and accountability frameworks.

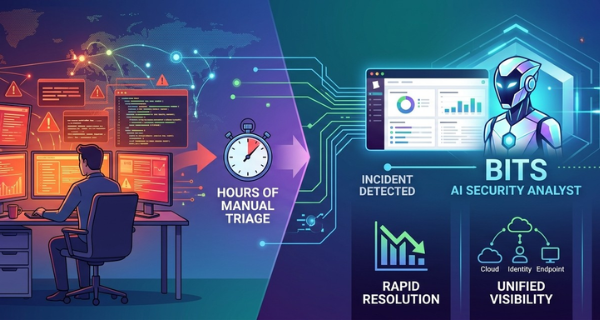

Despite the challenges, AI offers significant advantages for compliance teams grappling with increasing regulatory complexity. Cybersecurity is one of the areas benefiting most immediately from AI’s capabilities. Machine learning systems can monitor network activity and user behavior in real time to detect anomalies indicative of potential threats. This rapid detection allows for quicker responses, thereby strengthening organizational defenses and supporting compliance with security and privacy mandates.
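To make the idea of anomaly detection concrete, the following is a minimal sketch in Python. It is not a description of any particular product: it assumes a hypothetical per-user count of daily logins and uses a plain z-score against a historical baseline, which is far simpler than the machine learning models used in practice. The function name `find_anomalies`, the sample data, and the threshold of 3.0 are all illustrative assumptions.

```python
from statistics import mean, stdev

def find_anomalies(baseline, observed, threshold=3.0):
    """Flag observed values that lie far from the baseline mean.

    Returns a list of (index, value, z_score) tuples. The threshold is an
    illustrative assumption, not a recommended setting.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: treat any deviation as anomalous.
        return [(i, v, float("inf")) for i, v in enumerate(observed) if v != mu]
    flagged = []
    for i, value in enumerate(observed):
        z = (value - mu) / sigma
        if abs(z) >= threshold:
            flagged.append((i, value, round(z, 2)))
    return flagged

# Hypothetical data: daily login counts for one user account.
baseline_logins = [12, 15, 11, 14, 13, 12, 16, 14]
todays_logins = [13, 15, 90, 12]

for index, value, z in find_anomalies(baseline_logins, todays_logins):
    print(f"observation {index}: {value} logins (z-score {z}) needs review")
```

In this sketch only the third observation (90 logins) is flagged. A flagged value is a prompt for investigation, not a conclusion that a threat exists.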

Continuous monitoring represents another critical advancement enabled by AI. Traditionally, compliance has relied on periodic audits; however, AI facilitates ongoing observation of controls and adherence to policies. This shift aligns with modern regulatory expectations, which emphasize continual improvement over sporadic assessments. AI also plays a vital role in data privacy and protection initiatives, automating tasks such as classifying sensitive data and detecting unauthorized access, thus aiding compliance with frameworks like ISO 27701, HIPAA, and PCI DSS.
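As a rough illustration of automated sensitive-data classification, the sketch below tags text that appears to contain an email address or a payment card number. It is an assumption-laden example rather than a compliant scanner: the regular expressions are deliberately simple, the labels and the `classify` function are hypothetical, and the only well-established technique it relies on is the Luhn checksum used to validate card numbers.

```python
import re

# Simplified patterns, assumed for illustration; real scanners are stricter.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")

def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for position, char in enumerate(reversed(digits)):
        digit = int(char)
        if position % 2 == 1:
            # Double every second digit, counting from the right.
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0

def classify(text: str) -> set:
    """Return the set of sensitive-data labels detected in the text."""
    labels = set()
    if EMAIL_RE.search(text):
        labels.add("email")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            labels.add("card_number")
    return labels

# 4111 1111 1111 1111 is a widely used test card number, not a real account.
print(classify("Contact jane@example.com, card 4111 1111 1111 1111"))
```

The checksum step shows why pattern matching alone is not enough: a sixteen-digit order number would match the card pattern but usually fails the Luhn check, which reduces false positives.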

Operational efficiency remains a notable benefit, as compliance professionals often engage in time-consuming tasks, such as document review and evidence gathering. Automation allows teams to redirect their focus toward interpreting findings, addressing complex risks, and informing strategic decisions. In summary, AI empowers compliance teams to enhance visibility while utilizing resources more effectively.

However, AI cannot supplant human judgment, and its inherent limitations pose risks that organizations must navigate carefully. One challenge is contextual blind spots; while AI excels at pattern recognition, it often lacks the nuanced understanding necessary for complex compliance decisions. Over-reliance on AI can foster misplaced confidence, potentially leading teams to overlook risks that do not conform to established patterns.

Transparency poses another challenge, as many AI systems operate as “black boxes,” complicating efforts to explain how conclusions are derived. This opacity clashes with regulatory and audit requirements that demand clear justifications for decisions. Organizations that cannot explain an automated conclusion may face scrutiny from regulators and auditors.

Moreover, implementing AI in compliance programs generates new obligations for organizations. They must govern how models are trained, validate outputs, and clarify accountability for errors or biases. In effect, companies must now apply compliance discipline to the very tools intended to enhance compliance. Without diligent oversight, AI designed to mitigate risk may inadvertently introduce new vulnerabilities.

To address these challenges, executives should adopt a comprehensive strategy. They need to clearly define the applications of AI within their organizations, documenting legitimate business cases and mapping AI-driven processes against regulatory and internal obligations. Every pivotal decision should incorporate human oversight, ensuring that automated recommendations are contextualized and validated. Training for teams should encompass not only compliance requirements but also AI literacy and risk management, equipping them to understand the limitations and potential biases of the tools they employ.
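One way to picture human oversight of pivotal decisions is a routing rule that sends automated findings to a reviewer whenever they are high impact or the model is not confident. The sketch below is a hypothetical illustration: the `Finding` fields, the `route` function, the 0.9 threshold, and the control identifiers are all assumptions made for the example, and an actual policy would be set by the organization's governance framework.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    control_id: str     # hypothetical identifier of the control being tested
    summary: str
    confidence: float   # model-reported score between 0 and 1 (assumed)
    high_impact: bool   # whether the decision is considered pivotal

def route(finding: Finding, auto_threshold: float = 0.9) -> str:
    """Decide whether a finding may be logged automatically.

    High-impact findings always go to a person, regardless of confidence.
    """
    if finding.high_impact or finding.confidence < auto_threshold:
        return "human_review"
    return "auto_log"

findings = [
    Finding("AC-01", "Password policy matches the documented standard", 0.97, False),
    Finding("AC-02", "Possible unauthorized access to patient records", 0.99, True),
    Finding("CM-07", "Change ticket may be missing an approval", 0.62, False),
]

for finding in findings:
    # Record the routing decision so the process itself is auditable.
    print(f"{finding.control_id}: {route(finding)}")
```

Here only the first finding is logged automatically; the other two go to a reviewer. Keeping the rule this explicit also makes it easy to document and to show to an auditor.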

Finally, organizations should develop robust documentation, reporting practices, and flexible governance frameworks to ensure compliance is demonstrable, auditable, and resilient in the face of evolving regulations and AI technologies. Success will favor those who recognize the interdependence between AI and compliance, leveraging AI to enhance compliance while simultaneously applying compliance principles to govern AI itself. As organizations navigate an increasingly AI-driven economy, those that embrace this dual responsibility will build resilience, maintain stakeholder trust, and foster innovation that scales responsibly.
