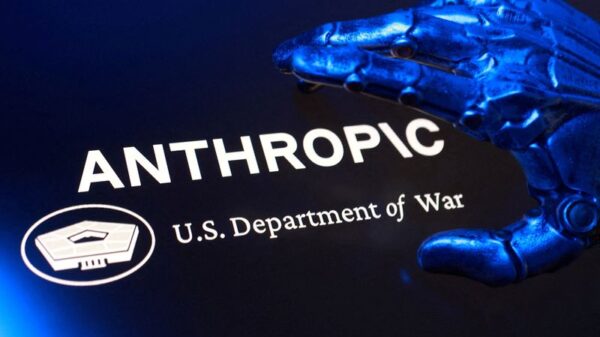

In a significant clash between technology and defense, Anthropic, an AI research company, has found itself at odds with the Department of War over usage restrictions tied to its AI model, Claude. The conflict arose from a contract signed in June 2024 during the Biden administration, which allowed the Department of Defense (DoD) to use Claude for classified operations, including intelligence and combat. The contract prohibited two uses of the AI: mass domestic surveillance, and autonomous lethal weapons that can identify and eliminate targets without human oversight. The Trump administration expanded the contract in July 2025 and kept similar restrictions, but the department's stance has since shifted.

Dean Ball, a former Senior Policy Advisor at the White House Office of Science and Technology Policy and current author of the AI-focused newsletter Hyperdimensional, discussed these developments with Yascha Mounk. They noted that Emil Michael, the Under Secretary of War for Research and Engineering, deemed the restrictions overly burdensome and initiated efforts to renegotiate them, leading to the current fallout. Instead of merely canceling the contract, the DoD has now labeled Claude a “supply chain risk,” effectively barring its use by other DoD contractors. The scope of this designation remains uncertain, raising concerns about how broadly it could be applied.

This decision marks a notable escalation, as the supply chain risk label is typically reserved for companies such as Huawei that are associated with state control. Mounk expressed skepticism about the DoD’s approach and predicted that Anthropic might challenge the designation in court, given that it has not previously been applied in this kind of case.

Ball highlighted the pressing issues at stake, particularly the potential for domestic surveillance. He explained that while it is illegal for the government to directly collect private data on U.S. citizens, it can still acquire sensitive information through commercial vendors. With advances in AI, the cost of monitoring individuals has fallen drastically, raising concerns that privacy rights could erode without any change to existing laws.

As AI technologies proliferate, Mounk and Ball acknowledged the difficulty of regulating such transformative tools under conditions of radical uncertainty. Ball noted that while existing laws aim to protect citizens, they risk becoming ineffective in the face of rapid technological change. He underscored the importance of balancing innovation with safeguards against potential abuse.

In examining the philosophical implications, Mounk and Ball contemplated the broader question of who should govern AI. They expressed apprehension that excessive government control could lead to mass surveillance, while an absence of oversight might allow harmful technologies to proliferate unchecked. More fundamentally, they explored the evolution of institutions in light of AI’s capabilities, questioning whether contemporary governance structures could adapt to effectively integrate these technologies.

Ball cautioned against over-regulating AI, arguing that existing legal frameworks could adequately address many concerns, provided that they are applied thoughtfully. He advocated for a proactive regulatory approach that focuses on transparency and accountability while avoiding stifling innovation. This sentiment reflects an ongoing debate about the nature of governance in an age increasingly defined by advanced technologies.

As the conversation unfolded, it became clear that the stakes surrounding AI governance extend far beyond legal frameworks. The implications of AI on society, individual rights, and institutional integrity present a complex landscape that requires careful navigation. Mounk and Ball’s dialogue underscores the urgency of finding a balance that fosters innovation while safeguarding democratic values, as the emergence of frontier AI systems continues to challenge established norms.
