Amid an ongoing dispute between the Department of Defense and AI firm Anthropic regarding the government’s authority to enforce mass surveillance on private companies, another U.S. agency is discreetly revising its procurement rules to preempt similar conflicts in the future. The General Services Administration (GSA), responsible for acquiring goods and services for the federal government, has proposed new guidelines aimed at promoting “ideologically neutral” American AI innovation.

The GSA’s procurement process is a critical mechanism through which government priorities are expressed, because it shapes how taxpayer funds are allocated. By directing resources toward initiatives that serve the public good (such as open-source software development and the right to repair) and withholding funds from less scrupulous contractors, the government can safeguard public interests. However, the proposed rules have raised concerns among advocacy groups, who argue that they could undermine the very goals they are meant to achieve.

According to comments filed by organizations including the Center for Democracy and Technology and the Electronic Privacy Information Center, the draft rules could inadvertently compromise the safety and efficacy of AI tools used in federal contracts. A particularly contentious provision requires that contractors and service providers license their AI systems to the government for “all lawful purposes.” Critics warn that the government’s loose interpretation of legality, coupled with its history of utilizing surveillance loopholes, calls for stringent legal restrictions to safeguard personal data from potential misuse.

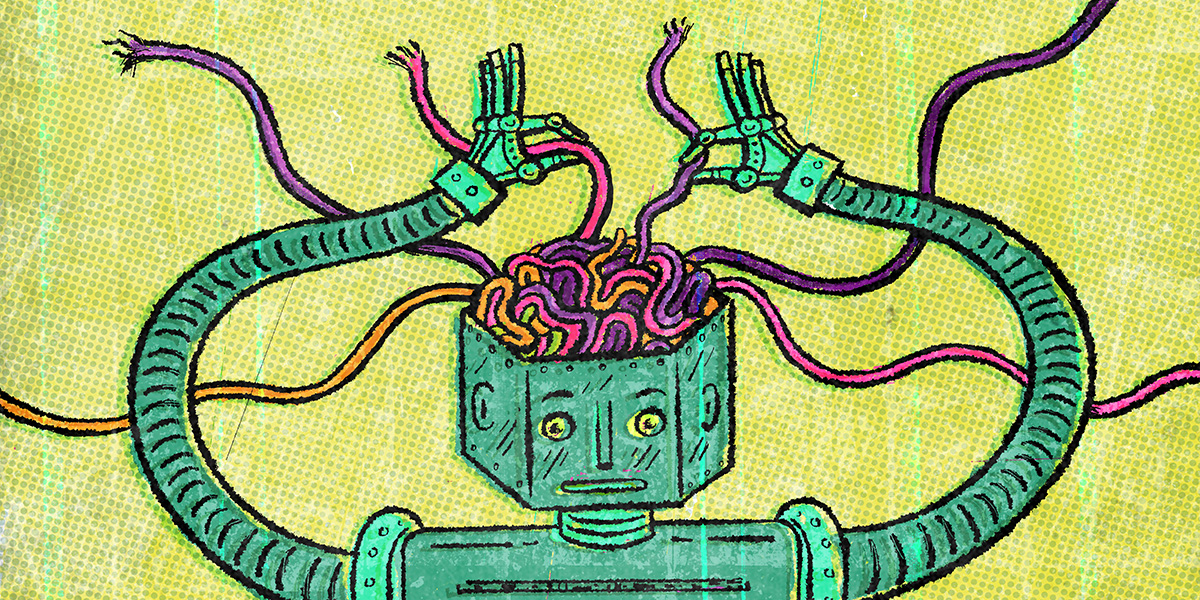

Equally troubling is a stipulation mandating that AI systems cannot refuse to produce data outputs or analyses based on a contractor’s internal policies. This means that if a company’s safety protocols would prevent it from complying with a governmental request, it must disable those safeguards. Given the escalating public concern surrounding AI safety, many view this requirement as fundamentally misguided.

The draft rules have also been criticized for their ambiguous “anti-Woke” prerequisites, which further complicate an already contentious regulatory landscape. Ultimately, the overarching issue is that the proposed guidelines could work against the public interest, undermining the objective of using taxpayer dollars to foster privacy, safety, and responsible technological advancement. Advocacy groups are urging the GSA to withdraw the draft and start over.

The implications of these changes could be far-reaching, affecting not only how AI technologies are developed and deployed but also how public trust in government oversight is maintained. As the debate unfolds, stakeholders from various sectors will be closely monitoring regulatory developments that influence the intersection of technology and civil liberties. The dialogue surrounding these guidelines underscores the need for a balanced approach to innovation that prioritizes ethical considerations alongside technological advancement.
