The U.S. Department of Health and Human Services (HHS) has unveiled a new artificial intelligence (AI) strategy aimed at strengthening governance and risk management of AI tools within the department. Released on December 4, 2025, the 20-page document makes HHS one of the first federal agencies to apply internally the kind of AI rules it has long advocated for the wider public sector. The strategy is part of a larger initiative to integrate AI into federal health services, and it promises to turn AI into a “practical layer of value” across operations and research.

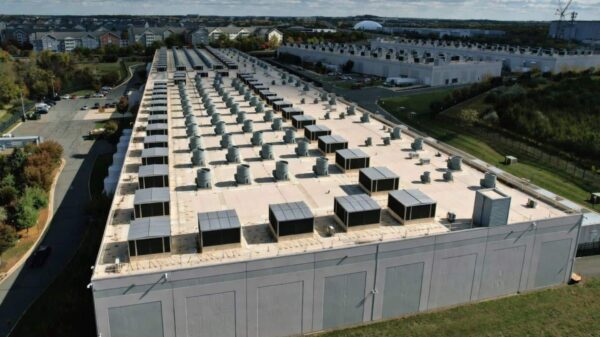

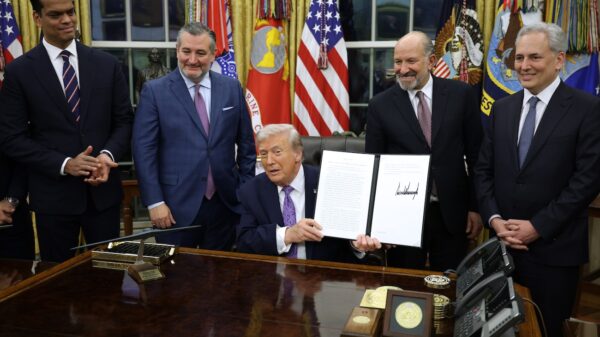

The strategy’s five pillars are governance and risk management, shared infrastructure, workforce capability, high-quality research, and modernized service delivery. HHS anticipates a 70 percent increase in AI projects in fiscal year 2025, which underscores the need to manage sensitive health data effectively. The initiative aligns with President Donald J. Trump’s America’s AI Action Plan and with the Office of Management and Budget’s directives for federal agencies to adopt AI while establishing governance structures that safeguard public interests.

Analysts view HHS’s new approach as a live test of management-based regulation, which encourages organizations to develop their own risk management systems instead of merely adhering to prescriptive rules. Cary Coglianese, in a recent essay, contended that this regulatory style is particularly suited to the rapidly evolving nature of AI technologies. By requiring organizations to implement risk management frameworks, including impact assessments and continuous monitoring, regulators hope to enable adaptive governance tailored to AI’s unique challenges.

The details of HHS’s strategy reveal a commitment to internal oversight. The establishment of an AI governance board is one key aspect, along with the documentation of AI use cases and criteria for identifying high-impact systems. These systems will undergo rigorous assessments, independent reviews, and monitoring, closely linked to the National Institute of Standards and Technology’s AI Risk Management Framework.

Furthermore, the strategy incorporates standards set by the International Organization for Standardization and the International Electrotechnical Commission, emphasizing a structured management system that spans the lifecycle of AI projects. While HHS is not seeking formal certification for its governance processes, it is laying the groundwork for a comprehensive internal management system for AI governance.

A significant challenge remains: ensuring that the policy decisions embedded within AI tools are transparent and contestable. Abigail Jacobs and Deirdre Mulligan have pointed out that what may appear as technical settings in AI applications often represent critical policy choices. This includes defining parameters for risk and making decisions that could have far-reaching consequences. HHS’s strategy addresses these concerns by promising public summaries for high-impact systems and metrics aimed at enhancing transparency.

However, external observers have questioned whether HHS’s safeguards for sensitive health data are robust enough. The strategy does not clarify how deeply public summaries will explore design choices related to externally sourced AI tools. This ambiguity raises concerns that key design decisions might remain obscured in technical documentation, bypassing public scrutiny.

HHS plans to collect effectiveness metrics for its AI initiatives, such as the proportion of high-impact systems subjected to independent review and response times for AI tool malfunctions. These metrics are intended to assure oversight bodies that governance processes are active and effective. Yet, the pressure to expedite assessments could lead to rushed decisions in a department anticipating a surge in AI projects.
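To make the two metrics named above concrete, here is a minimal Python sketch of how they might be computed from an AI use-case inventory. The record layout (`AISystem` and its fields), the function names, and the sample data are all hypothetical illustrations; the strategy as described here does not specify a schema or a formula.

```python
from dataclasses import dataclass, field
from statistics import median
from typing import List, Optional


@dataclass
class AISystem:
    """Hypothetical inventory record; field names are assumptions."""
    name: str
    high_impact: bool
    independently_reviewed: bool
    # Hours taken to respond to each reported malfunction (assumed unit).
    incident_response_hours: List[float] = field(default_factory=list)


def review_coverage(systems: List[AISystem]) -> Optional[float]:
    """Share of high-impact systems that received an independent review."""
    high_impact = [s for s in systems if s.high_impact]
    if not high_impact:
        return None  # Undefined when there are no high-impact systems.
    reviewed = sum(1 for s in high_impact if s.independently_reviewed)
    return reviewed / len(high_impact)


def median_response_hours(systems: List[AISystem]) -> Optional[float]:
    """Median response time across all recorded malfunctions."""
    hours = [h for s in systems for h in s.incident_response_hours]
    return median(hours) if hours else None


# Made-up inventory for illustration only.
inventory = [
    AISystem("claims-triage", True, True, [4.0, 10.0]),
    AISystem("benefits-chatbot", True, False, [6.0]),
    AISystem("meeting-summarizer", False, False),
]

print(review_coverage(inventory))        # 0.5
print(median_response_hours(inventory))  # 6.0
```

A ratio like this reports only whether a review took place, not how thorough it was, which is why the pressure to move quickly matters for interpreting the numbers.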

As someone experienced in implementing AI governance frameworks, I recognize that the most challenging aspect lies in ensuring that every AI proposal triggers necessary evaluations and that stakeholders possess the authority and confidence to push back when needed. The push for increased AI utilization makes it imperative to maintain the ability to halt or reshape projects when necessary.

For other federal agencies developing their own AI strategies, HHS’s approach presents critical lessons. First, framing AI governance as a department-wide issue is essential; individual program offices cannot independently enforce standards. A central governance board is necessary to align risk management with infrastructure and procurement efforts. Second, closely aligning internal frameworks with established AI risk management standards gives external stakeholders concrete metrics to evaluate compliance. Lastly, transparency about governance processes is itself a form of risk management, allowing for public accountability.

HHS’s AI strategy serves as a foundational experiment in establishing an AI management system within the federal government. Other departments will likely use it as a model while also viewing it as an opportunity to refine their own governance frameworks. The success of management-based AI regulation hinges on its viability within the agencies that advocate for it, making HHS’s approach a potentially pivotal moment for federal AI oversight.