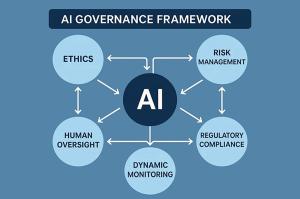

LOS ANGELES, CA, UNITED STATES, February 28, 2026 /EINPresswire.com/ -- A new governance advisory initiative has been launched to address a question that has become critical for enterprises since the rise of generative AI: Who truly owns AI risk? The recently released AI Governance & Guardrails 2026 Playbook provides frameworks, policy templates, and a complimentary webinar aimed at helping leadership teams establish clear accountability, controls, and audit measures before implementing AI in mission-critical areas.

As discussions around AI risk management increasingly reach the boardroom, many organizations still find themselves stuck at the policy stage. The playbook challenges this inertia, arguing that AI governance should not merely be a compliance requirement but rather a shared accountability model across various departments including IT, Security, Legal, and the broader business.

“AI risk doesn’t live in a single department,” said Lena Cho, Global Director of AI Governance Advisory. “Security sees the exposure, Legal interprets the compliance, but the business owns the outcome. Strong governance connects all three.” This perspective highlights the significant gap in accountability that many organizations face.

The playbook is designed for both the U.S. and EU regulatory environments, aligning AI security controls and model risk management practices with emerging laws and frameworks such as the EU AI Act and the NIST AI Risk Management Framework (AI RMF). Its objective is to help companies navigate compliance with greater agility by integrating the necessary controls directly into their development and deployment workflows.

“AI governance can’t lag behind innovation,” remarked Rafael Martinez, Chief Information Security Officer at a global fintech firm. “By the time you audit a model post-deployment, the damage—or the data leak—may already be done. Pre-deployment guardrails are now a baseline expectation.”

The AI Governance Playbook distinguishes itself through its focus on shared governance and tangible controls. The comprehensive 50-page guide, now available for download, includes essential tools like:

- AI Policy Templates (customizable)
- Governance Gap Assessment Checklist
- Model Risk Control Matrix
- AI Audit Readiness Scorecard
- Stakeholder Accountability Map

To illustrate its practical application, consider a multinational retailer implementing a generative AI chatbot for customer interactions across various regions. Without designated ownership, the Legal department drafts a policy, Security applies basic filters, and the business moves forward with deployment—only to discover customer data inadvertently leaving the EU. The playbook emphasizes how a cohesive cross-functional guardrail framework could have mitigated this risk prior to launch.

“Companies underestimate how small policy gaps cascade into legal exposure,” cautioned Priya Nand, Head of Compliance Strategy at a Fortune 500 manufacturer. “This framework helps unify people, process, and policy before AI goes live.”

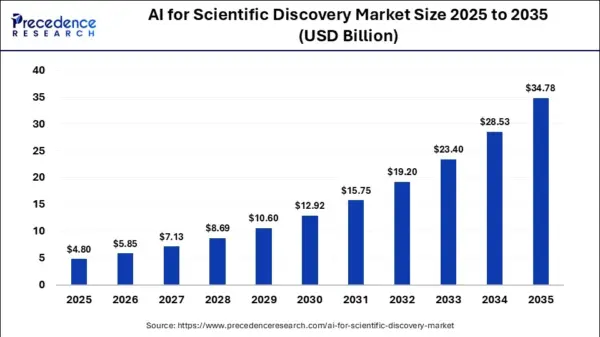

The urgency of effective AI governance is underscored by rising demand for AI assurance. Data from Gartner indicates that 71% of compliance leaders lack visibility into their organization’s AI use cases, and that more than 60% plan to set up formal AI risk committees by 2027. As AI technologies become integral to business operations, the ability to audit AI use is emerging as a competitive differentiator.

Founded in Los Angeles, Global IT Communications, Inc. specializes in managed IT services for privacy-sensitive sectors such as healthcare and financial services. With over two decades of experience, the firm integrates compliance governance with operational frameworks tailored to meet stringent data-handling requirements.
