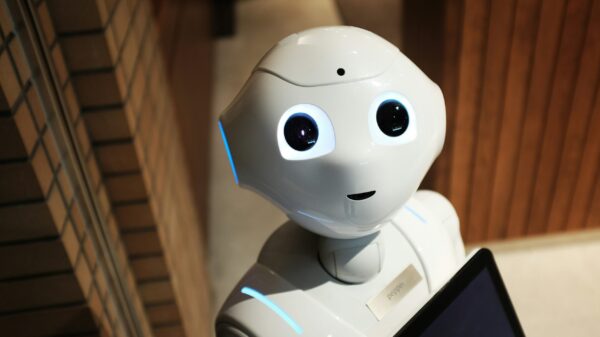

Scrutiny of how OpenAI handled information about the Tumbler Ridge, B.C., mass shooter months before the tragedy has prompted calls for Canada to consider rules requiring artificial intelligence companies to alert law enforcement in similar situations. The calls come after the company confirmed it had "proactively" identified and banned an account associated with Jesse Van Rootselaar in June 2025 for misusing its AI chatbot, ChatGPT, in furtherance of "violent activities."

Despite banning the account, OpenAI did not notify police at the time, because the activity did not meet its internal threshold for an "imminent" threat. Van Rootselaar, 18, went on to kill eight people and injure 25 others on February 10 before taking her own life.

In response, Artificial Intelligence Minister Evan Solomon convened OpenAI representatives in Ottawa to discuss the case and the company's safety practices. Solomon said "all options are on the table when it comes to understanding what we can do about AI chatbots." Meanwhile, Heritage Minister Marc Miller said that while his department is working with Solomon's to develop online safety legislation that covers AI platforms, the government is not rushing to tie that legislation directly to the Tumbler Ridge shooting.

Miller emphasized the need for careful legislation to ensure platforms act responsibly, noting that the details of what this will entail are still under consideration. He acknowledged a “legitimate thirst for easier answers” but cautioned against oversimplifying the issue, especially given the ongoing investigation.

Canada's privacy laws state that private companies "may" disclose personal information to authorities if they believe there is a risk of significant harm or of a law being broken. Because disclosure is permitted rather than required, companies like OpenAI can set their own thresholds, such as the "imminent" threat criterion it uses.

Critics argue that letting AI developers set their own safety protocols carries risks. Vincent Paquin, an assistant professor at McGill University, noted that people increasingly turn to AI platforms for sensitive conversations, particularly about mental health, without a clear understanding of what safety mechanisms are in place.

OpenAI also faces multiple lawsuits in the U.S. alleging that its platforms contributed to suicides and self-harm among young users. The company denies these claims, saying its safety systems typically reject requests for harmful content, including violent rhetoric and suicidal ideation. Reports indicate that, before the shooting, OpenAI employees were alarmed by Van Rootselaar's posts describing scenarios involving gun violence.

According to the B.C. government, OpenAI officials met with a provincial representative on February 11, the day after the shooting, for a previously scheduled discussion about the company's potential first Canadian office. The province said OpenAI did not tell officials that it might hold evidence related to the shooting, although the company did ask for RCMP contact information the following day.

Canada's Privacy Commissioner Philippe Dufresne has previously said that the absence of a Canadian office complicates investigations into tech firms such as TikTok. Brian McQuinn, an associate professor at the University of Regina, expressed concern that the tech industry has deprioritized internal safety practices, particularly since Elon Musk took over Twitter and overhauled its management. McQuinn suggested this shift has led companies to adopt fewer safeguards and take on less responsibility.

Calls for stricter regulation are gaining traction. Sharon Bauer, a privacy lawyer, stressed the need to balance individual privacy against the obligation to alert authorities about potential threats. She pointed out that where the threshold sits, such as the "imminent" standard, is crucial: a lower threshold could lead to unnecessary stigmatization, while a higher one could mean missing genuine threats.

Experts are calling for greater transparency and external oversight of AI companies, arguing that developers should not decide on safety protocols by themselves. As Canada develops its AI strategy, there is a push for it to include robust safety measures alongside economic incentives. Paquin pointed to a recent California law that requires large AI companies to report instances of their platforms being used for potentially catastrophic activities, suggesting it could serve as a model for Canadian rules.

The Tumbler Ridge case underscores the need to re-examine safety frameworks for AI technologies as discussions around AI regulation in Canada continue to evolve.
