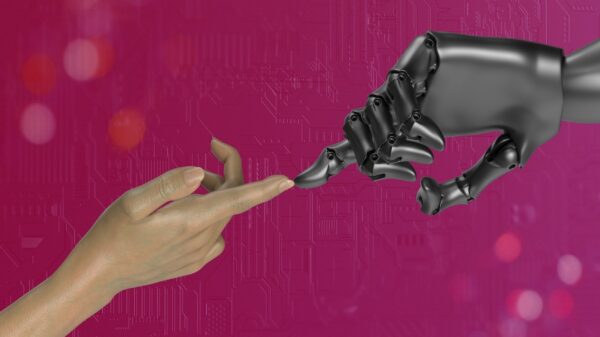

As artificial intelligence (AI) continues to dominate discussion across sectors, the Public Relations Society of America (PRSA) has issued new guidance to help professionals use the technology responsibly. Although the recommendations stem from U.S. standards, they are relevant well beyond the United States, especially for public relations (PR) teams operating across the MENA region. The principles in the guidance are not merely suggestions; they are fundamental rules that all PR professionals, whether in agencies, corporate communications, non-profits, or government roles, must follow to maintain ethical integrity.

The first principle emphasizes the necessity of transparency, stating that organizations must disclose when AI significantly influences any content, strategy, or client communication. In an industry where trust is paramount, audiences have the right to know when AI-generated material is involved. This applies not only to written texts but also to visuals, videos, and even data analyses. A simple statement declaring “we use AI” is insufficient; each piece of AI-generated content requires a specific disclosure, reinforcing accountability and fostering trust with stakeholders.

The second principle focuses on data privacy: PR professionals must never upload personally identifiable information or confidential materials into public AI tools. This precaution addresses a common pitfall in which sensitive client data is unintentionally exposed. The PRSA recommends auditing AI tools for their data privacy policies and shifting to secure systems specifically designed for sensitive work. PR professionals routinely handle critical information about product launches, crisis situations, and executive communications, so the stakes are high: a breach could lead to severe reputational and legal repercussions.

The third principle holds that while AI can assist with drafting and research, human judgment must govern final decisions. AI tools can provide valuable insights and efficiencies but lack the nuanced understanding needed for strategic positioning and crisis management. PR professionals must therefore act as “human gatekeepers,” vetting all content before publication to catch AI-generated errors before they cause harm.

Another critical area the PRSA highlights is the need to manage bias in AI outputs. The fourth principle warns that AI models can perpetuate biases inherent in their training data, leading to misrepresentations of cultural or regional specifics. As the MENA region is rich in diverse cultures and perspectives, PR professionals must actively monitor AI-generated content to prevent stereotypes and miscommunications that could alienate their audiences or damage brand reputations.

Finally, the PRSA calls for internal governance frameworks for AI use. The fifth principle encourages agencies to develop clear policies, training programs, and accountability structures to navigate the ethical landscape of AI. This includes written AI policies that define acceptable use, disclosure requirements, and data protection protocols. Because a single team member’s lapse can create legal consequences for the whole organization, a robust governance framework is essential for guarding against the risks associated with AI.

As AI continues to shape the public relations landscape, these guidelines underscore that while technology can enhance efficiency, the human factors of accountability, ethics, and strategic insight remain irreplaceable. The PRSA’s guidance is a timely reminder that in an age of rapid technological advancement, responsibility ultimately lies with individuals, not algorithms. The ongoing evolution of AI in public relations presents an opportunity for professionals to lead with integrity while adapting to the changing environment.
