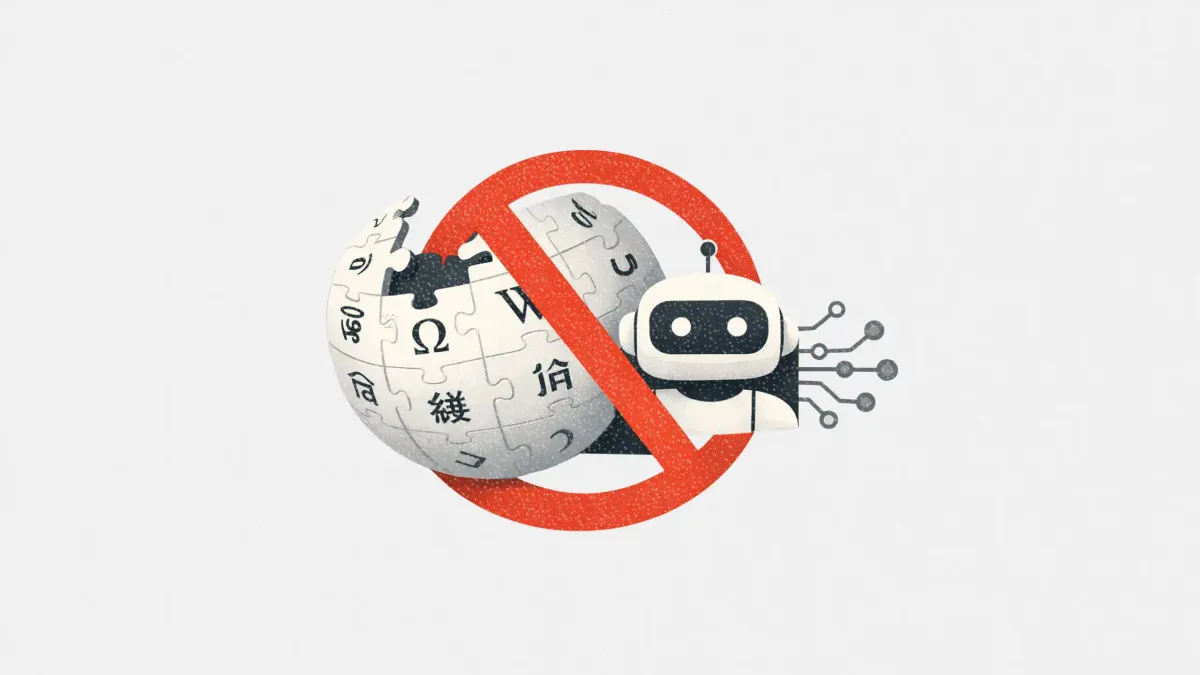

Wikipedia has officially banned the use of AI-generated text in article creation, marking a significant shift in the ongoing debate surrounding generative tools within editorial workflows. This new policy, which was enacted following a community vote, prohibits the use of large language models (LLMs) for composing or modifying core article content, although limited AI assistance for editing remains permissible.

The move underscores rising concerns about the reliability and accuracy of AI-produced information, particularly on open, collaboratively edited knowledge platforms. As AI-written content becomes increasingly prevalent, marketers and content strategists are reminded of the critical importance of transparency and trust in their communications.

The policy update follows a community vote in which Wikipedia editors overwhelmingly supported the ban, with 40 in favor and just two opposed, according to reporting by 404 Media. The updated language explicitly states: “The use of LLMs to generate or rewrite article content is prohibited, save for the exceptions given below.” These exceptions are narrowly defined. For instance, editors can use AI to suggest minor copy edits, but they must review the final result thoroughly and ensure that it does not add unsupported information. LLM-assisted translation is also allowed under strict oversight.

At the core of Wikipedia’s decision lies the issue of trust. AI-generated text has been documented to fabricate facts, misrepresent sources, and alter meanings, all of which are particularly problematic for a platform built on community verification and citation. The policy emphasizes that “LLMs can go beyond what you ask of them and change the meaning of the text such that it is not supported by the sources cited.” This clear stance reflects broader concerns within the media industry about the pitfalls of AI-generated output, such as factual inaccuracies and inadequate sourcing.

For marketers, publishers, and PR professionals, Wikipedia’s policy serves as a timely reminder to approach AI-assisted content with caution. Key considerations include treating LLMs as tools rather than sources, ensuring human oversight in all editorial processes, and being transparent about the use of AI in content creation. Establishing clear editorial guidelines can help align internal teams and external partners on the appropriate use of these technologies.

Publishing AI-generated content that introduces inaccuracies can harm brand reputation and trust. As AI content generation tools become more accessible, balancing speed against credibility is increasingly challenging. Wikipedia’s updated policy shows how organizations are setting boundaries on the use of AI, and marketers are advised to heed this lesson.

As AI tools become more embedded in content creation, Wikipedia’s decision marks a notable point in the debate over editorial integrity and the role of AI in shaping how information is disseminated. Its implications for content strategy, trust, and accountability present both challenges and opportunities for industry stakeholders.
