Researchers have unveiled the “AI Scientist,” an artificial intelligence system that can write scientific papers on its own, a task traditionally reserved for human researchers. The system is described in a recent study published in Nature, led by Jeff Clune, a professor of computer science at the University of British Columbia. One of its papers passed peer review for a workshop at the 2025 International Conference on Learning Representations (ICLR), a sign that the role of AI within the scientific community is shifting.

Historically, science has depended on human intellect to formulate hypotheses, conduct experiments, and analyze findings. While tools such as electron microscopes and supercomputers have enhanced research capabilities, the core process has remained fundamentally human. Clune noted that previous applications of AI in science typically focused on narrow tasks, such as protein folding. “We’re saying the AI gets to be the scientist,” he said, describing what he sees as a paradigm shift in scientific inquiry.

The AI Scientist operates through a series of modules. It begins by receiving a general research prompt from scientists, then surveys existing literature to propose hypotheses. “We’re just giving it a general direction like ‘Come up with something interesting to study on how the AI learns,’” Clune explained. Once it generates ideas, the system evaluates and refines them, discarding any that lack novelty. Subsequent modules are responsible for planning and executing experiments, analyzing data, and crafting the resulting paper. Remarkably, it even conducts an internal peer review to identify flaws in its work.
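The modular flow described above can be pictured as a chain of stages, each handing its output to the next. The Python sketch below is only an illustration of that structure, not the team's actual code: every function name, the `novelty` score, the 0.5 threshold, and the placeholder results are hypothetical, and each stage is a stub standing in for what the real system would do.

```python
from dataclasses import dataclass, field


@dataclass
class Idea:
    title: str
    novelty: float  # hypothetical score in [0, 1]; higher means more novel


@dataclass
class Paper:
    idea: Idea
    results: dict
    review_notes: list = field(default_factory=list)


def propose_ideas(prompt: str) -> list:
    # Stub for the literature survey and hypothesis proposal stage.
    return [
        Idea(f"{prompt}: variant A", novelty=0.8),
        Idea(f"{prompt}: variant B", novelty=0.2),
    ]


def filter_novel(ideas: list, threshold: float = 0.5) -> list:
    # Discard ideas that lack novelty (the threshold is an assumption).
    return [idea for idea in ideas if idea.novelty >= threshold]


def run_experiment(idea: Idea) -> dict:
    # Stub for planning and executing experiments, then analyzing the data.
    return {"metric": 0.0, "runs": 1}


def write_paper(idea: Idea, results: dict) -> Paper:
    # Stub for the paper-writing stage.
    return Paper(idea=idea, results=results)


def internal_review(paper: Paper) -> Paper:
    # Stub for the internal peer review that flags flaws in the draft.
    notes = []
    if paper.results["runs"] < 3:
        notes.append("Too few experimental runs to support the claims.")
    paper.review_notes = notes
    return paper


def ai_scientist_pipeline(prompt: str) -> list:
    # Chain the stages: propose, filter, experiment, write, review.
    ideas = filter_novel(propose_ideas(prompt))
    return [internal_review(write_paper(i, run_experiment(i))) for i in ideas]


papers = ai_scientist_pipeline("how the AI learns")
for paper in papers:
    print(paper.idea.title, paper.review_notes)
```

In this toy run, the low-novelty idea is dropped before any experiment is attempted, and the surviving draft comes back from the review stage with a flagged weakness.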

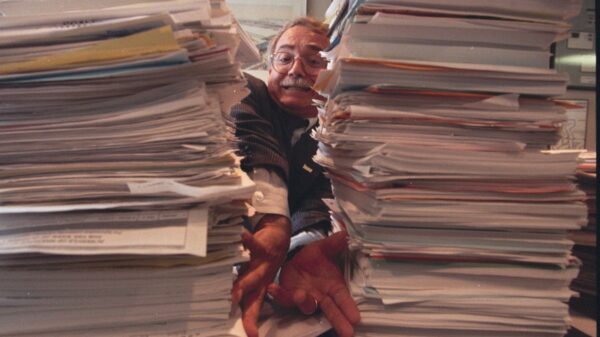

To gauge the AI’s capabilities, the research team submitted three papers produced by the AI Scientist to the I Can’t Believe It’s Not Better (ICBINB) workshop at ICLR 2025. One paper was accepted, although the bar for acceptance was low. “Would a mediocre graduate student get one paper in three accepted at a place that accepts 70 percent of papers? Sure!” remarked Jodi Schneider, an associate professor at the University of Wisconsin–Madison, who did not participate in the study.
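Schneider’s point about base rates can be made concrete with a rough calculation. The sketch below assumes, purely for illustration, that each submission is accepted independently with a probability equal to the 70 percent acceptance rate she cites; real review outcomes depend on paper quality and are not independent, so this is a back-of-the-envelope baseline rather than an estimate for the AI Scientist’s papers. The function name is hypothetical.

```python
def prob_at_least_one_accepted(acceptance_rate: float, submissions: int) -> float:
    # Probability that at least one submission is accepted, assuming each is
    # accepted independently with the same probability (a simplification).
    return 1.0 - (1.0 - acceptance_rate) ** submissions


p = prob_at_least_one_accepted(0.70, 3)
print(f"{p:.3f}")  # prints 0.973
```

Under those assumptions, three submissions would yield at least one acceptance about 97 percent of the time, which is why a single acceptance out of three says little on its own.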

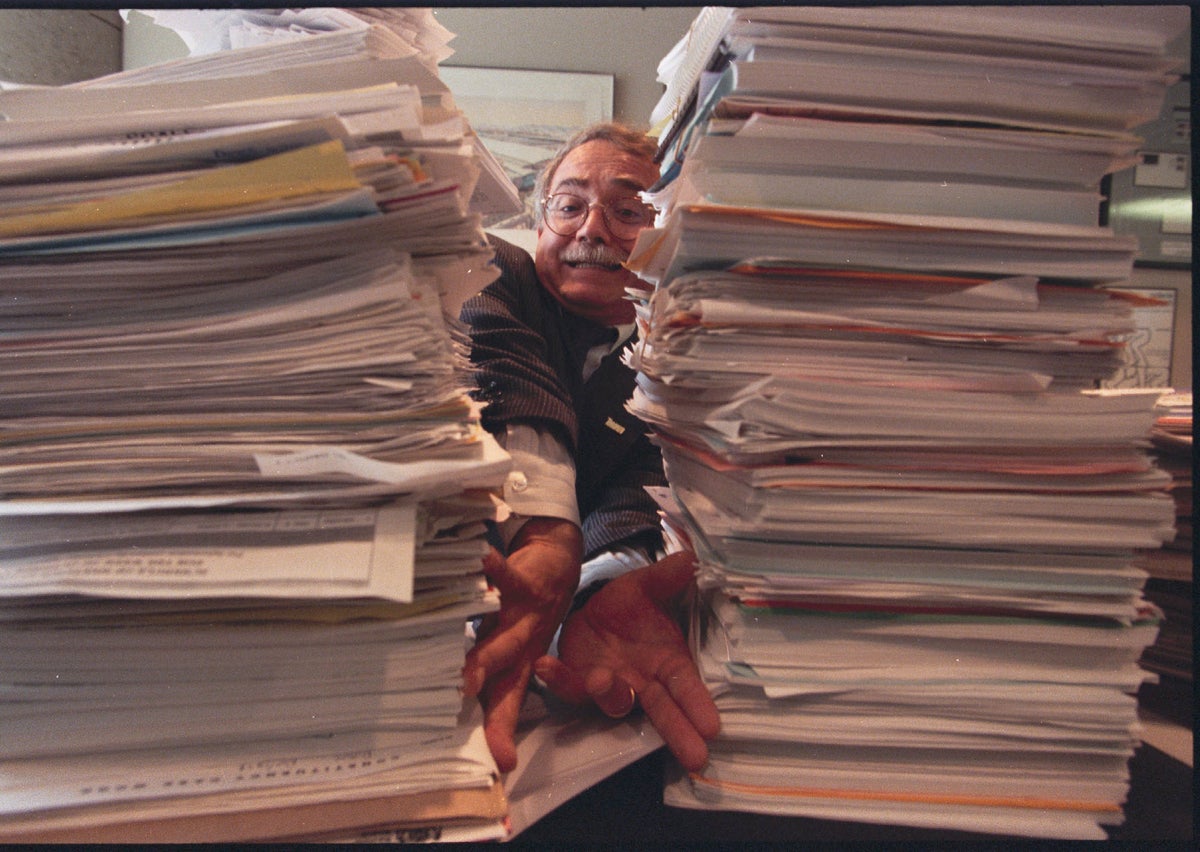

Despite the AI’s achievement, Clune characterized the submitted papers as “okay but not great.” He noted some genuinely creative ideas emerged from the AI, but execution issues remained prevalent. “The logic and the writing and the thinking throughout the whole paper didn’t all fit together beautifully,” he said, highlighting problems such as fabricated references and a lack of methodological rigor. The initial reception of the study has been lukewarm, with critiques suggesting the approach lacks innovation. Maria Liakata, a professor of natural language processing at Queen Mary University of London, stated, “The approach is agentic and without any real novelty.”

The AI Scientist did produce a formally acceptable machine learning paper within 15 hours, at an estimated cost of only $140. A graduate student, by contrast, might take an entire semester to complete a similar task, according to Schneider. As AI technology continues to improve, the proliferation of AI-generated papers poses challenges for the scientific community. Yanan Sui, an associate professor at Tsinghua University and senior workshop chair for ICLR 2026, cautioned, “The AI-written papers are probably going to make things much worse.”

In response to the increasing prevalence of AI-generated work, leading conferences have begun implementing restrictions. “There are strict rules for the main conference that do not allow submission of purely AI-written papers,” Sui noted. For now, a measure of transparency is required, with authors mandated to disclose their use of AI in the research process. However, Sui acknowledged that journals and conferences often lack the means to reliably detect AI contributions.

Other AI systems have also claimed success in generating scientific papers. Intology’s AI, Zochi, reportedly passed peer review for the main proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, although human researchers played roles in verifying results and communicating with reviewers. Similarly, the Autoscience Institute has indicated that its AI produced papers accepted at ICLR workshops prior to the AI Scientist’s achievement.

Researchers disagree about what comes next. Clune predicts that the AI Scientist marks the beginning of an era of rapid scientific advances, envisioning a future in which humans may become mere curators as AI takes the lead in scientific exploration. Liakata instead argues for a collaborative approach, asserting that the future of scientific discovery may involve advanced human-agent interaction rather than complete autonomy for AI. As the scientific community grapples with these developments, the balance between human oversight and AI capabilities remains an open question.
