In an era dominated by the Internet of Things (IoT) and artificial intelligence (AI), the necessity for efficient data processing and storage is becoming increasingly urgent. Edge AI technology offers a promising solution, alleviating the pressure on cloud data centers through the use of optimised processors. This innovation is set to improve latency, enhance privacy and security, reduce power consumption, and boost bandwidth efficiency. However, the landscape of edge AI remains notably fragmented, presenting challenges for developers compared to the more established cloud-based AI ecosystem.

The cloud has long been the backbone of AI, leveraging powerful GPUs and the capabilities of hyperscale data centers to handle extensive processing needs. The methodologies and programming languages in this domain are well-developed, creating a stable environment for AI development. However, as we transition to edge devices, the environment becomes increasingly heterogeneous. The lack of standardisation in the IoT space results in a disjointed experience for users and developers, complicating efforts to fully harness the capabilities of edge AI.

Unlike cloud environments, which can support energy-intensive large language models (LLMs), IoT devices require AI models that are efficient in both power and memory. While cloud-based training typically employs tools like TensorFlow, the array of platforms and protocols available for consumer, enterprise, and industrial IoT devices can be overwhelming, and many are mutually incompatible, leading to integration challenges.

The disparity in development timelines between software and hardware further complicates the situation. Software applications evolve rapidly to incorporate AI functionality, yet the hardware often lags behind. IoT device manufacturers frequently resort to repurposing semiconductors designed for other markets, such as smartphones. These parts may provide only limited AI acceleration, 1 to 2 TOPS (tera operations per second) at best, and the next step up is a drastic leap in performance that is not well suited to IoT applications.

Research suggests that the edge AI IoT compute silicon market is expanding at approximately 15% year-over-year and could reach $20 to $25 billion within the next three to four years. This burgeoning market underscores a critical need: available AI compute silicon must align with the requirements of IoT device manufacturers. Challenges such as energy efficiency, latency, privacy, and developer ease are paramount in ensuring effective AI integration at the edge.
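As a rough sanity check on those figures, the short sketch below works backwards from the projected market size to the starting size that a constant 15% annual growth rate would imply. The function name `implied_base` and the assumption of steady compound growth are illustrative only; the research cited above does not state a base figure.

```python
def implied_base(future_value_bn: float, annual_growth: float, years: int) -> float:
    """Starting market size (in $bn) that compounds to future_value_bn.

    Assumes constant compound growth, which is a simplification.
    """
    return future_value_bn / (1 + annual_growth) ** years


if __name__ == "__main__":
    growth = 0.15  # approximately 15% year-over-year, as quoted above
    for target_bn in (20.0, 25.0):
        for years in (3, 4):
            base = implied_base(target_bn, growth, years)
            print(f"${target_bn:.0f}bn in {years} years implies about ${base:.1f}bn today")
```

Under these assumptions the implied current market size falls somewhere between roughly $11 billion and $16 billion. That range is an arithmetic consequence of the quoted numbers, not a figure reported by the research.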

As AI becomes more embedded in daily life, the demand for devices that can process data efficiently at low power consumption levels is paramount. Current models in the cloud consume significant energy, posing a stark contrast to the operational requirements of IoT devices. Therefore, achieving an optimal balance between hardware capabilities and software functionalities is crucial to ensuring that IoT devices can run AI applications effectively.

Despite these challenges, the market offers engineers a broad array of options for integrating AI into IoT designs. However, many existing reference platforms are not specifically tailored to IoT applications, leading to potential inefficiencies. The need for readily available solutions that adequately address the unique demands of IoT applications is clear. As AI technology continues to proliferate, issues of secure inferencing and user privacy become increasingly relevant.

Innovations and Industry Responses

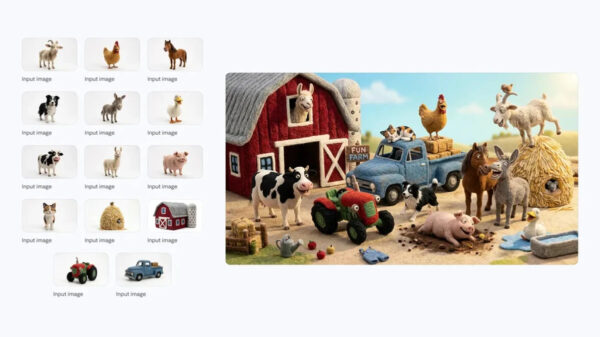

AI applications in the IoT space are often vision-based, utilising techniques like presence detection and gesture recognition. While these capabilities are currently supported in cloud environments, the next logical step is to enable them locally on IoT devices using models designed for tighter resource constraints. Additionally, the integration of other modalities, such as voice and audio, can enhance the user experience. In a hybrid approach, the device sends metadata to the cloud for retraining, allowing the AI model to be updated continuously based on user behavior and become more personalised over time.

This feedback loop between edge devices and the cloud will be essential as models evolve beyond static implementations. AI systems embedded in everyday devices, from smart speakers to security cameras, can adapt based on user patterns and preferences, upgrading themselves upon connecting to networks.
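The feedback loop described above can be illustrated with a deliberately tiny sketch. Everything here is hypothetical: the `ThresholdModel`, `EdgeDevice`, and `cloud_retrain` names, the single-number "model", and the midpoint retraining rule are stand-ins chosen for readability, not any vendor's API. The point is only the shape of the loop: the device infers locally, uploads compact metadata rather than raw sensor data, and applies a newer model when the cloud provides one.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class ThresholdModel:
    """Toy stand-in for an on-device model: detects presence above a threshold."""
    version: int
    threshold: float

    def predict(self, score: float) -> bool:
        return score >= self.threshold


@dataclass
class EdgeDevice:
    model: ThresholdModel
    metadata: list = field(default_factory=list)

    def infer(self, score: float, user_confirmed: bool) -> bool:
        """Run inference locally and log metadata only (no raw sensor frames)."""
        detected = self.model.predict(score)
        self.metadata.append({"score": score, "confirmed": user_confirmed})
        return detected

    def flush_metadata(self) -> list:
        """Hand the pending metadata batch to the uploader and clear it."""
        batch, self.metadata = self.metadata, []
        return batch

    def apply_update(self, new_model: ThresholdModel) -> None:
        """Adopt a model from the cloud only if it is newer than the current one."""
        if new_model.version > self.model.version:
            self.model = new_model


def cloud_retrain(current: ThresholdModel, batch: list) -> ThresholdModel:
    """Hypothetical retraining step: place the threshold midway between the
    mean confirmed-positive score and the mean confirmed-negative score."""
    positives = [m["score"] for m in batch if m["confirmed"]]
    negatives = [m["score"] for m in batch if not m["confirmed"]]
    if not positives or not negatives:
        return current  # not enough signal to retrain
    new_threshold = (mean(positives) + mean(negatives)) / 2
    return ThresholdModel(current.version + 1, new_threshold)


if __name__ == "__main__":
    device = EdgeDevice(ThresholdModel(version=1, threshold=0.8))
    for score, confirmed in [(0.7, True), (0.75, True), (0.3, False), (0.2, False)]:
        device.infer(score, confirmed)
    device.apply_update(cloud_retrain(device.model, device.flush_metadata()))
    print(device.model)
```

In this toy run the initial threshold misses a user whose readings sit around 0.7; after one round trip the device holds a lower, personalised threshold. A real deployment would replace the threshold with an actual model and add authentication and integrity checks on both the upload and the update.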

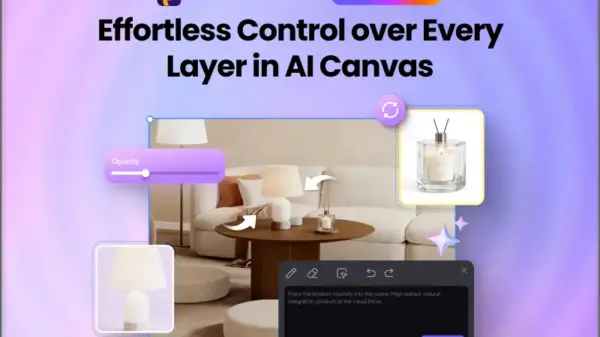

Addressing these evolving needs, Synaptics has launched its Astra “AI-Native” embedded computing platform, designed to unify the fragmented IoT ecosystem. Astra offers a standardised developer experience across the consumer, enterprise, and industrial sectors, built on scalable embedded systems on chip (SoCs) that feature built-in neural processing units (NPUs) and other processing cores. This approach allows the platform to cater to a wide range of IoT applications, from simple single-sensor devices to complex multi-modal systems.

The Astra platform addresses not only performance but also security challenges in AI IoT designs. Drawing from its expertise with stringent cybersecurity requirements in set-top box manufacturing, Synaptics has created a robust secure pipeline for AI inferencing, ensuring its solutions are well protected against cyber threats. With expectations for the compute silicon market to grow significantly, Synaptics is well-positioned to meet future demands.

As edge AI computing continues to evolve, its role in the IoT landscape is set to become increasingly pivotal, offering innovative ways to manage and interpret the vast amounts of data generated by interconnected devices while significantly enhancing user experiences.
