On Wednesday, Intel and SambaNova announced a joint, production-ready heterogeneous inference architecture that combines GPUs or other AI accelerators, SambaNova's SN50 reconfigurable dataflow units (RDUs), and Intel Xeon 6 processors. The platform is designed to handle a wide range of workloads and is aimed at winning market share from Nvidia and other emerging competitors in AI.

The architecture splits inference into distinct stages and assigns each stage to different silicon. GPUs or other AI accelerators handle the prefill stage, which ingests long prompts and builds the key-value (KV) cache. SambaNova's SN50 RDU handles decoding and token generation. Intel's Xeon 6 processors run agent-related operations, such as compiling and executing code, and coordinate workloads across the hardware.
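To make the division of labor concrete, here is a toy Python sketch of disaggregated prefill and decode with a KV-cache handoff between stages. It is a conceptual model only, not Intel's or SambaNova's software: the device labels, the `KVCache` class, and the `prefill`, `decode`, and `serve` functions are hypothetical, and the "tokens" are placeholders rather than real model output.

```python
from dataclasses import dataclass, field

# Hypothetical labels for the roles described in the announcement.
PREFILL_DEVICE = "GPU/accelerator"
DECODE_DEVICE = "SN50 RDU"
ORCHESTRATOR = "Xeon 6"


@dataclass
class KVCache:
    """Toy stand-in for a key-value cache: one entry per processed token."""
    entries: list = field(default_factory=list)


def prefill(prompt_tokens, log):
    # Stage 1: ingest the whole prompt in one pass and build the KV cache.
    log.append((PREFILL_DEVICE, "prefill", len(prompt_tokens)))
    return KVCache(entries=list(prompt_tokens))


def decode(cache, max_new_tokens, log):
    # Stage 2: generate tokens one at a time, extending the handed-over cache.
    generated = []
    for _ in range(max_new_tokens):
        token = f"tok{len(cache.entries)}"  # placeholder for a real model step
        cache.entries.append(token)
        generated.append(token)
    log.append((DECODE_DEVICE, "decode", len(generated)))
    return generated


def serve(prompt_tokens, max_new_tokens=3):
    # Orchestration (the CPU role): schedule each stage and pass the cache along.
    log = [(ORCHESTRATOR, "schedule", 1)]
    cache = prefill(prompt_tokens, log)
    output = decode(cache, max_new_tokens, log)
    return output, log
```

Calling `serve(["a", "b"], 3)` returns `["tok2", "tok3", "tok4"]`, and the log records the stages in order: Xeon 6 scheduling, accelerator prefill, then RDU decode. The point of the split is that prefill processes many prompt tokens in one compute-heavy pass, while decode is a long sequential loop over the cache, so each stage can run on hardware suited to it.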

This split mirrors Nvidia's approach with its Rubin platform, which also divides inference into stages. Intel, however, emphasizes that its architecture is built around Xeon 6 processors, which it presents as the key differentiator from competing offerings. The platform is expected to be available in the second half of 2026 and targets enterprises, cloud operators, and sovereign AI programs looking for scalable inference.

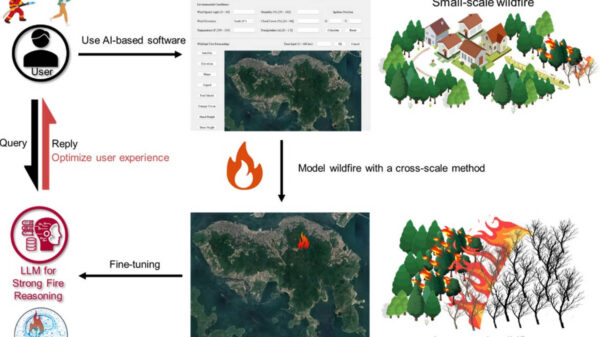

According to SambaNova's internal testing, Xeon 6 processors deliver more than 50% faster LLVM compilation than Arm-based server CPUs and up to 70% higher performance on vector database workloads than AMD EPYC processors. Both companies say these gains should shorten end-to-end development cycles for coding agents and similar applications.

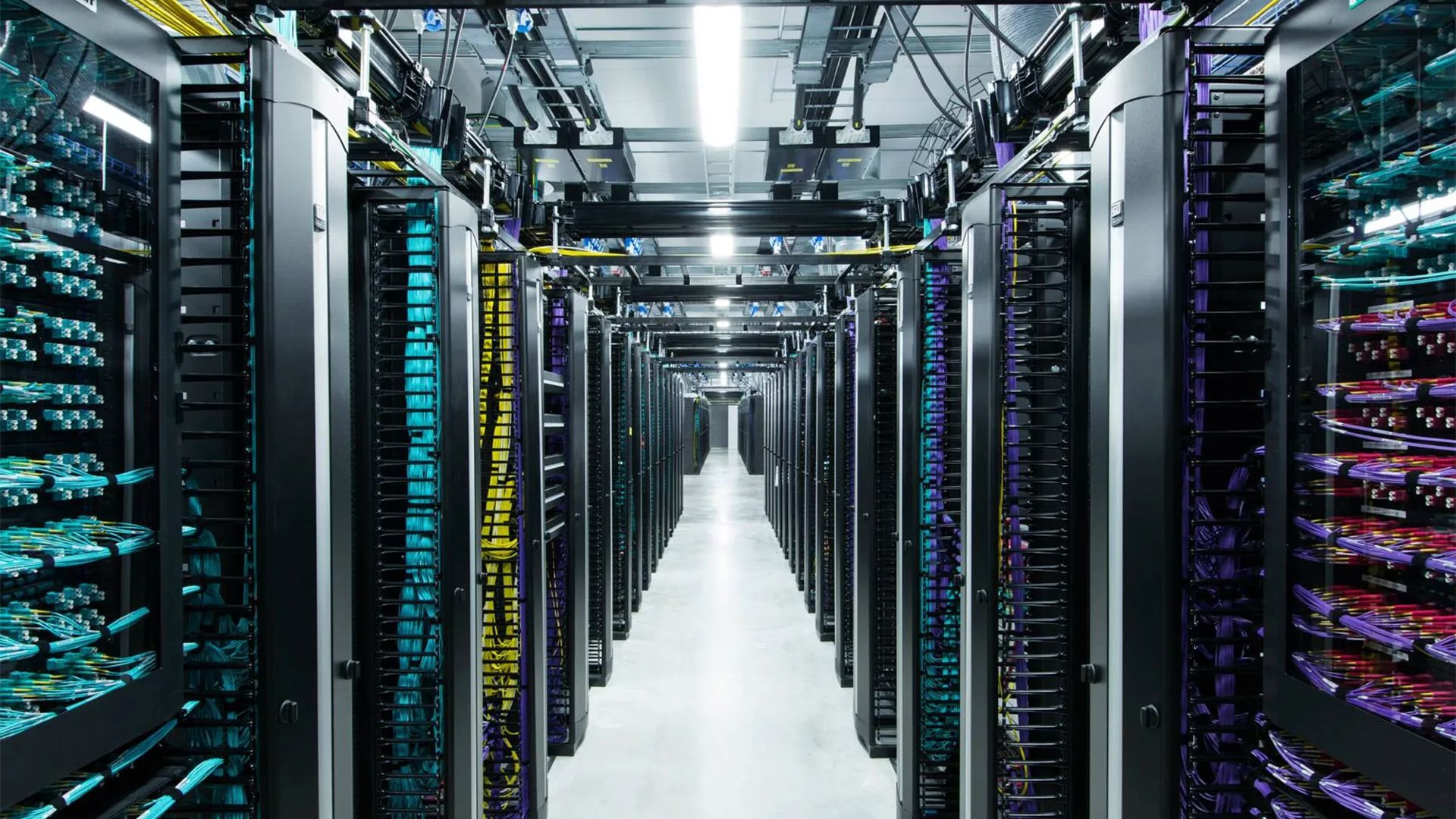

A key selling point of the architecture is that it can be deployed in existing data centers that support up to 30 kW, a specification that covers the vast majority of enterprise data centers in operation today. "The data center software ecosystem is built on x86, and it runs on Xeon — providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale," said Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group at Intel Corporation. He added that future workloads will require a heterogeneous mix of compute, and that the collaboration with SambaNova aims to deliver a cost-efficient, high-performance inference architecture that meets customer needs at scale.

As the AI market evolves, the partnership between Intel and SambaNova could shift competitive dynamics in inference, particularly for enterprises that want scalable deployments on their existing infrastructure. How far it goes toward setting new standards for inference architectures will depend on whether the claimed gains in speed and efficiency hold up once the platform ships.
