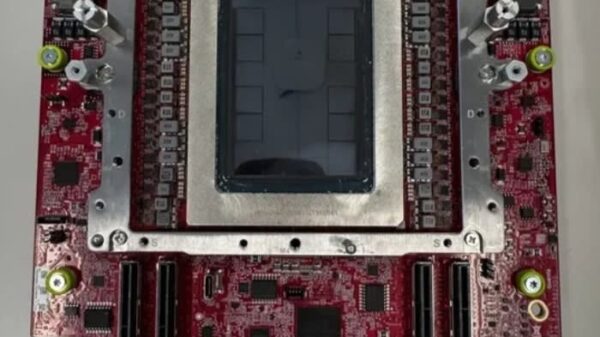

Meta announced the development of four new generations of its in-house chips, the Meta Training and Inference Accelerator (MTIA), designed in collaboration with Broadcom. These chips, slated for deployment over the next two years, aim to enhance the company’s AI capabilities significantly. “We’ve developed a competitive strategy for MTIA by prioritizing rapid, iterative development,” Meta stated in its press release, highlighting an inference-first focus and seamless adoption through native support for industry standards.

The four models unveiled are the MTIA 300, 400, 450, and 500. The MTIA 300 is already in production for ranking and recommendations training, while the MTIA 400 is undergoing lab testing ahead of its data center deployment. The MTIA 450 and 500 target AI inference, with mass deployment expected in early and late 2027, respectively. Meta’s technical blog details the gains across the family, including a 4.5-fold increase in HBM bandwidth and a 25-fold increase in compute FLOPs from the MTIA 300 to the MTIA 500.

Meta asserts that the MTIA 450 doubles the HBM bandwidth of the MTIA 400 and claims that this bandwidth exceeds that of existing top-tier commercial products, including Nvidia’s H100 and H200. The MTIA 500 is expected to add another 50% of HBM bandwidth over the MTIA 450, along with up to 80% more HBM capacity. The emphasis matters because HBM bandwidth is the chief bottleneck during the decode phase of transformer inference: at small batch sizes, generating each token requires streaming the model’s weights and KV cache from memory, so throughput is limited by how fast bytes move rather than by raw compute. Mainstream GPUs, by contrast, are designed to maximize FLOPs for large-scale pre-training, which adds cost and power overhead that Meta argues is unnecessary for inference.
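Why bandwidth dominates decode can be seen from a back-of-the-envelope bound: if each generated token must read every weight from HBM once, tokens per second cannot exceed bandwidth divided by model size in bytes. The sketch below is illustrative only and is not Meta’s methodology. It assumes decimal terabytes, a single sequence with no batching, and no KV-cache or on-chip reuse; the function name and the 8-billion-parameter BF16 model are hypothetical.

```python
# Back-of-the-envelope upper bound on single-stream decode throughput.
# Assumptions (illustrative, not Meta's methodology):
#   * every generated token reads all model weights from HBM exactly once;
#   * KV-cache traffic, batching, and on-chip reuse are ignored;
#   * "TB/s" means decimal terabytes (1e12 bytes) per second.

# HBM bandwidth figures quoted in this article, in TB/s.
HBM_BANDWIDTH_TBPS = {
    "MTIA 300": 6.1,
    "MTIA 400": 9.2,
    "MTIA 450": 18.4,
    "MTIA 500": 27.6,
}


def max_decode_tokens_per_s(bandwidth_tbps: float,
                            params_billion: float,
                            bytes_per_param: float) -> float:
    """Tokens/s ceiling when decode is purely memory-bandwidth bound."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tbps * 1e12 / weight_bytes


if __name__ == "__main__":
    # Hypothetical 8-billion-parameter model stored in BF16 (2 bytes/param).
    for chip, bw in HBM_BANDWIDTH_TBPS.items():
        bound = max_decode_tokens_per_s(bw, params_billion=8, bytes_per_param=2)
        print(f"{chip}: <= {bound:,.0f} tokens/s")
```

Under these assumptions the ceiling rises from roughly 381 tokens/s on the MTIA 300 to 1,725 tokens/s on the MTIA 500. Batching amortizes each weight read across many sequences, so real serving throughput can exceed this single-stream figure; the point is only that the bound scales linearly with HBM bandwidth, not with FLOPs.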

Power and memory bandwidth climb steadily across the family. The MTIA 300, built for ranking and recommendations, has a thermal design power (TDP) of 800 W and an HBM bandwidth of 6.1 TB/s. The MTIA 400 has a TDP of 1,200 W and 9.2 TB/s of HBM bandwidth, while the MTIA 450 and 500 are rated at 1,400 W and 1,700 W, respectively, with HBM bandwidths of 18.4 TB/s and 27.6 TB/s. Peak compute scales as well, reaching up to 30 PFLOPS on the MTIA 500.
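One way to sanity-check the quoted figures is to tabulate them and derive the generation-over-generation bandwidth ratios. The sketch below uses only the numbers stated in this article; the `MtiaSpec` type, the helper names, and the bandwidth-per-kilowatt metric are hypothetical illustrations, not figures or tools Meta published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MtiaSpec:
    name: str
    tdp_w: int         # thermal design power, watts
    hbm_tbps: float    # HBM bandwidth, TB/s


# Figures as quoted in this article.
SPECS = [
    MtiaSpec("MTIA 300", 800, 6.1),
    MtiaSpec("MTIA 400", 1200, 9.2),
    MtiaSpec("MTIA 450", 1400, 18.4),
    MtiaSpec("MTIA 500", 1700, 27.6),
]


def bandwidth_per_kw(spec: MtiaSpec) -> float:
    """HBM bandwidth delivered per kilowatt of TDP (TB/s per kW)."""
    return spec.hbm_tbps / (spec.tdp_w / 1000)


def generation_ratios(specs: list[MtiaSpec]) -> list[tuple[str, str, float]]:
    """Bandwidth ratio of each chip to the one listed before it."""
    return [(new.name, old.name, new.hbm_tbps / old.hbm_tbps)
            for old, new in zip(specs, specs[1:])]


if __name__ == "__main__":
    for new, old, ratio in generation_ratios(SPECS):
        print(f"{new} vs {old}: {ratio:.2f}x HBM bandwidth")
    print(f"MTIA 500 vs MTIA 300: {SPECS[-1].hbm_tbps / SPECS[0].hbm_tbps:.2f}x")
    for spec in SPECS:
        print(f"{spec.name}: {bandwidth_per_kw(spec):.2f} TB/s per kW")
```

The 2x step from MTIA 400 to 450 and the 1.5x step from 450 to 500 match the doubling and the additional 50% Meta describes, and the end-to-end ratio of about 4.5x matches the figure from the technical blog. Because TDP grows more slowly than bandwidth, bandwidth per watt also improves with each inference-focused generation.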

Meta’s design also includes hardware acceleration for FlashAttention and for mixture-of-experts (MoE) feed-forward network computations, along with custom low-precision data types designed specifically for inference. The MTIA 450 supports the MX4 format, delivering six times as many FLOPs in MX4 as in FP16/BF16, and Meta says its approach reduces the software overhead associated with data type conversion.
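The announcement does not define MX4, so the sketch below assumes it refers to MXFP4 from the OCP Microscaling (MX) specification: blocks of 32 FP4 (E2M1) elements that share a single 8-bit power-of-two scale. It illustrates why such block-scaled formats are cheap to store and move, at about 4.25 bits per value, and is not a description of MTIA’s hardware. The function names are hypothetical, ties are resolved toward the smaller magnitude as a simplification, and the scale’s exponent range limits are not modeled.

```python
import math

# Assumption: "MX4" is taken to mean MXFP4 from the OCP Microscaling (MX)
# spec: blocks of 32 FP4 (E2M1) elements that share one 8-bit power-of-two
# (E8M0) scale. This illustrates the format, not MTIA's hardware.
FP4_E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
FP4_EMAX = 2         # exponent of the largest E2M1 value (6.0 = 1.5 * 2**2)
FP4_MAX = 6.0
BLOCK_SIZE = 32
SCALE_BITS = 8


def quantize_block(block: list[float]) -> tuple[int, list[float]]:
    """Return (shared exponent, FP4 element values) for one block."""
    amax = max(abs(x) for x in block)
    if amax == 0.0:
        return 0, [0.0] * len(block)
    # Shared scale: power of two that maps amax into E2M1's exponent range.
    shared_exp = math.floor(math.log2(amax)) - FP4_EMAX
    scale = 2.0 ** shared_exp
    elements = []
    for x in block:
        mag = min(abs(x) / scale, FP4_MAX)  # saturate at the FP4 maximum
        # Round to the nearest representable magnitude; ties resolve toward
        # the smaller magnitude here (a simplification).
        q = min(FP4_E2M1_MAGNITUDES, key=lambda v: abs(v - mag))
        elements.append(math.copysign(q, x))
    return shared_exp, elements


def dequantize_block(shared_exp: int, elements: list[float]) -> list[float]:
    """Multiply each FP4 element back by the block's shared scale."""
    scale = 2.0 ** shared_exp
    return [scale * e for e in elements]


def bits_per_element(block_size: int = BLOCK_SIZE) -> float:
    """Storage cost: 4 bits per element plus the amortized shared scale."""
    return (block_size * 4 + SCALE_BITS) / block_size


if __name__ == "__main__":
    exp, elems = quantize_block([12.0, 5.5] + [0.0] * 30)
    print(exp, dequantize_block(exp, elems)[:2])  # 1 [12.0, 6.0]
    print(bits_per_element())                     # 4.25
```

The example shows the trade-off: 12.0 survives exactly, while 5.5 rounds to 6.0 because every element in the block shares one scale. Hardware that consumes such blocks natively avoids converting them to a wider type in software first.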

For deployment, the MTIA 400, 450, and 500 will share a common chassis, rack, and network infrastructure. Because new generations can slot into the same racks, Meta can release chips on a cadence of roughly six months, faster than the industry’s typical one- to two-year cycle. The software stack supports popular frameworks such as PyTorch, vLLM, and Triton, so the same production models can be deployed on both GPUs and MTIA simultaneously without MTIA-specific changes.

Meta has already deployed hundreds of thousands of MTIA chips across its applications for inference on organic content and advertisements. The announcement comes shortly after the company revealed a long-term, $100 billion AI infrastructure partnership with AMD, which suggests a broader strategy of reducing reliance on Nvidia across Meta’s AI ecosystem while keeping MTIA as the foundation for its inference workloads.
