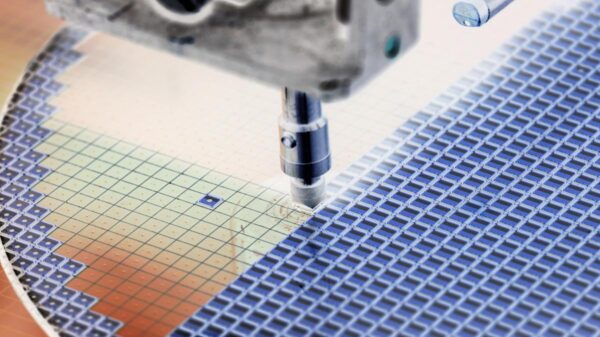

Major memory manufacturers are seeing a steep decline in their stock prices, a drop attributed largely to Google’s new algorithm, TurboQuant. The algorithm drastically reduces the memory that artificial intelligence (AI) models need in order to run effectively, which makes it a potential remedy for the current RAM shortage and pricing crisis.

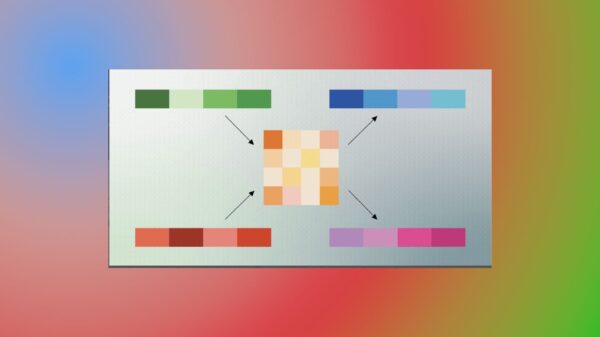

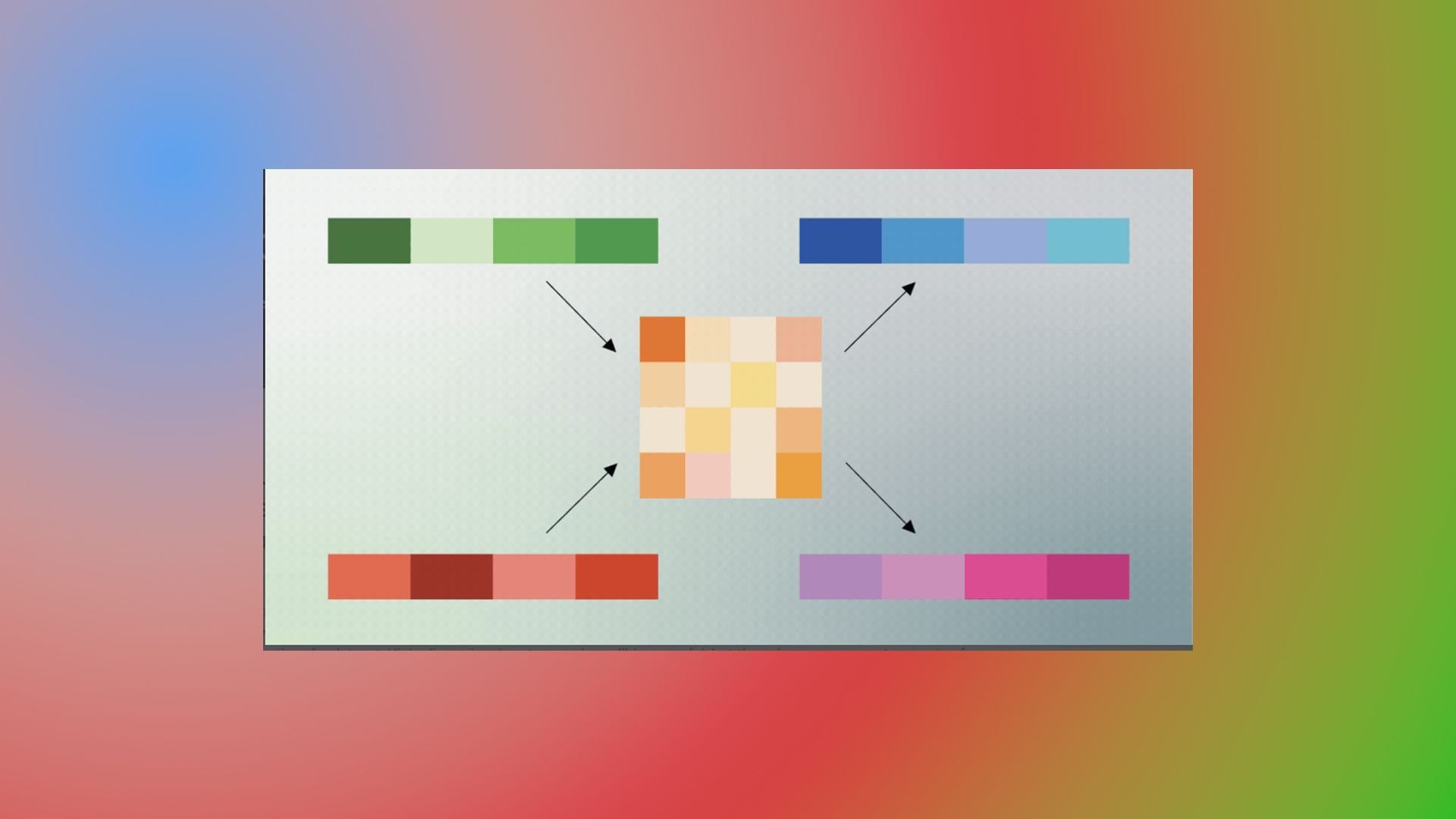

AI models traditionally consume substantial memory because they store their working data in large mathematical structures that occupy considerable space in RAM. TurboQuant introduces a compression mechanism that shrinks this data significantly, then applies a secondary step to correct the errors that such aggressive compression introduces, with the aim of keeping the model's accuracy intact. If TurboQuant scales across data centers as anticipated, it could ease some of the pressure on RAM prices and availability.

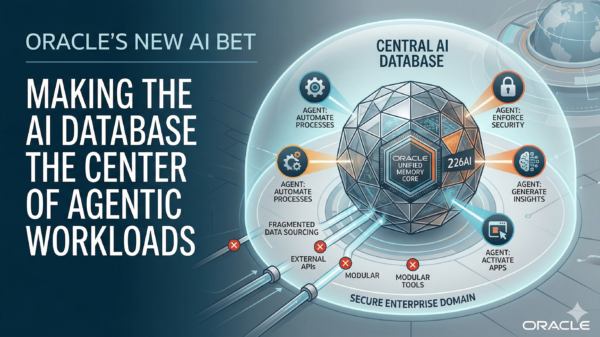

The underlying challenge is that AI models hold a working store of context, known as the KV (key-value) cache, in memory for the duration of an interaction. The cache grows with the length of the conversation, so long sessions suffer slower responses and weaker contextual awareness as available RAM runs out. Companies have therefore invested heavily in expensive memory such as HBM and DDR5, contributing to ongoing shortages and inflated prices.
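To make the scale of the problem concrete, the following is a rough back-of-the-envelope sketch of how KV cache size grows with conversation length. The model dimensions (32 layers, 8 key-value heads, head dimension 128) and the bit widths (16 bits uncompressed, 4 bits compressed) are hypothetical values chosen for illustration; they are not taken from the article or from any specific Google model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value=16, batch_size=1):
    """Estimate KV cache size in bytes for a transformer-style model.

    Each token stores one key vector and one value vector (hence the
    factor of 2) per layer and per key-value head.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value // 8


if __name__ == "__main__":
    # Hypothetical model shape, for illustration only.
    shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)
    for tokens in (1_000, 32_000, 128_000):
        full = kv_cache_bytes(seq_len=tokens, bits_per_value=16, **shape)
        small = kv_cache_bytes(seq_len=tokens, bits_per_value=4, **shape)
        print(f"{tokens:>7} tokens: {full / 2**30:.2f} GiB at 16 bits, "
              f"{small / 2**30:.2f} GiB at 4 bits")
```

The cache size is linear in the number of tokens, which is why long conversations are the ones that exhaust memory, and why lowering the bits stored per value reduces the footprint proportionally.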

TurboQuant changes this dynamic by letting AI systems run with less memory: rather than demanding large amounts of RAM, it compresses the temporary data into a much smaller footprint. Earlier methods pursued the same goal but often fell short because they had to store additional data alongside the compressed values to preserve response accuracy, which eroded the savings. TurboQuant instead pairs a more efficient compression technique with a lightweight error-correction step, so AI systems can function effectively with reduced memory requirements.
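The general idea of "compress first, then apply a cheap correction" can be illustrated with a small generic sketch. This is not TurboQuant's actual algorithm, whose details the article does not describe; it is a plain uniform scalar quantizer followed by a one-bit-per-value residual correction, and every function name here is hypothetical.

```python
import random


def quantize(values, bits):
    """Uniform scalar quantization to integer codes in [0, 2**bits - 1]."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Map integer codes back to approximate floating-point values."""
    return [lo + c * scale for c in codes]


def residual_correction(values, approx):
    """Keep the sign of each residual (1 bit) and one shared magnitude."""
    residuals = [v - a for v, a in zip(values, approx)]
    magnitude = sum(abs(r) for r in residuals) / len(residuals)
    signs = [1 if r >= 0 else -1 for r in residuals]
    return signs, magnitude


def apply_correction(approx, signs, magnitude):
    """Nudge each approximate value toward the original by the shared magnitude."""
    return [a + s * magnitude for a, s in zip(approx, signs)]


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    rng = random.Random(0)
    data = [rng.gauss(0.0, 1.0) for _ in range(1024)]  # stand-in for cached values

    codes, lo, scale = quantize(data, bits=3)
    approx = dequantize(codes, lo, scale)
    signs, magnitude = residual_correction(data, approx)
    corrected = apply_correction(approx, signs, magnitude)

    print("MSE after 3-bit quantization:", mse(data, approx))
    print("MSE after 1-bit correction:  ", mse(data, corrected))
```

In this toy version the correction costs one extra bit per value plus a single shared number, and it reduces the mean squared error by exactly the square of that shared magnitude, so it never makes the approximation worse. Production schemes are considerably more sophisticated, but the trade-off has the same shape: a small amount of extra information recovers part of the accuracy lost to aggressive compression.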

If TurboQuant succeeds, it could reshape the RAM market. With memory demand significantly reduced, companies may no longer need to buy such vast quantities of RAM, which could in turn push prices down. The stock market’s reaction offers an early indication of how investors expect the technology to affect the memory sector.

In summary, TurboQuant is a promising advance that targets the current pressure AI models place on RAM. Its long-term effectiveness will only become clear in the coming months, but its potential to change the economics of memory demand is a significant opportunity for the tech industry and consumers alike.
