Multiverse Computing has unveiled HyperNova 60B 2602, a 50% compressed version of OpenAI’s gpt-oss-120B, as part of its strategy to provide developers with efficient, high-performance models at no cost. The announcement was made on February 24, 2026, in Donostia, Spain, and the model is now available for free on Hugging Face. The release follows the initial HyperNova 60B debut in January and adds improvements in tool calling and agentic coding, underscoring Multiverse’s commitment to broadening access to advanced AI technologies.

As the demands on infrastructure grow, developers face increasing limitations in deploying large language models (LLMs) effectively. Multiverse aims to mitigate these challenges by creating efficient models that maintain advanced reasoning abilities while significantly reducing model size and resource requirements. The HyperNova series exemplifies this philosophy, delivering powerful AI tools without the typical trade-offs between performance and accessibility.

At the core of Multiverse’s approach is its proprietary technology, CompactifAI, which applies quantum-inspired mathematics to compress neural networks. The technique can reduce model size by up to 95% while keeping accuracy loss within 2-3%, compared with the 20-30% losses often seen with conventional compression methods. As a result, developers can use sophisticated models that demand significantly less computational power, memory, and energy.

Enrique Lizaso Olmos, CEO of Multiverse Computing, emphasized the iterative nature of model compression, stating, “The launch of HyperNova 60B 2602 demonstrates compression as an iterative process of improvement, not a one-time optimization.” He highlighted that each new generation of compressed models expands the boundaries of what is achievable with efficient AI. By continually refining their offerings and making them openly accessible, Multiverse empowers developers to explore and deploy AI solutions without incurring substantial infrastructure costs.

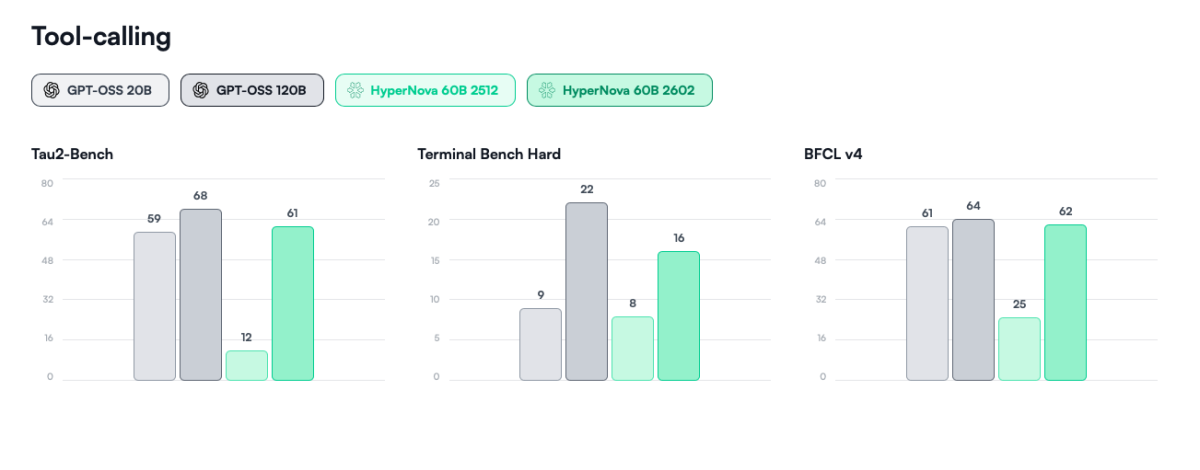

The latest model, HyperNova 60B 2602, arrives in response to positive feedback from users of its predecessor. It shows substantial improvements on key benchmarks, particularly in tool calling and agentic workflows: a fivefold increase in agentic tool usage as measured by Tau2-Bench, a twofold improvement in agentic coding and terminal use on Terminal Bench Hard, and a 1.5x improvement in function calling as measured by BFCL v4.

The new model nearly matches the tool-calling capabilities of the larger OpenAI gpt-oss-120B while cutting its size from 61GB to 32GB. This result supports the case for compression in production-level AI applications and makes the model easier to deploy across various sectors.
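As a quick check on the reported sizes, the 61GB and 32GB figures can be converted into a reduction percentage and a compression ratio. The sketch below is illustrative arithmetic only; the function names are hypothetical and are not part of any Multiverse tooling.

```python
def size_reduction(original_gb: float, compressed_gb: float) -> float:
    """Return the fraction of the original size removed by compression."""
    if original_gb <= 0:
        raise ValueError("original size must be positive")
    return (original_gb - compressed_gb) / original_gb


def compression_ratio(original_gb: float, compressed_gb: float) -> float:
    """Return how many times smaller the compressed model is."""
    if compressed_gb <= 0:
        raise ValueError("compressed size must be positive")
    return original_gb / compressed_gb


if __name__ == "__main__":
    # Sizes as reported in the announcement (gpt-oss-120B vs. HyperNova 60B 2602).
    original_gb, compressed_gb = 61.0, 32.0
    print(f"Size reduction: {size_reduction(original_gb, compressed_gb):.1%}")
    print(f"Compression ratio: {compression_ratio(original_gb, compressed_gb):.2f}x")
```

With these inputs the reduction works out to roughly 47.5% (a ratio of about 1.9x), which is consistent with the model being described as a 50% compressed version.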

With the release of HyperNova 60B 2602, Multiverse continues to broaden access to production-ready AI models suited for real-world applications in enterprise, research, and public sectors. The company plans to expand its portfolio by introducing more open-source models and updates throughout the year, catering to a variety of use cases from enterprise-level systems to applications on edge devices.

Multiverse Computing positions itself as a provider of sovereign AI solutions, and its open-source approach is aimed at the growing global community of developers and IT professionals evaluating AI technologies for commercial and internal use. Open access lets organizations assess performance, security, and operational suitability before large-scale deployment, which smooths integration and gives them more control.

All of Multiverse’s models, including HyperNova 60B 2602, are available on Hugging Face at https://huggingface.co/MultiverseComputingCAI. Each release is accompanied by technical documentation, benchmarks, and integration guides on the same platform. To learn more about the company’s work on compressed AI models, visit multiversecomputing.com.

About Multiverse Computing

Multiverse Computing is a pioneer in quantum-inspired AI model compression, leveraging expertise in quantum software to create its innovative CompactifAI technology. The company, headquartered in Donostia, Spain, has a global presence with offices in the United States, Canada, and across Europe, serving over 100 clients, including major corporations such as Iberdrola, Bosch, and the Bank of Canada.
