NVIDIA unveiled its latest AI supercomputing platform, Rubin, at CES 2026. The company describes it as a major step forward in high-performance computing built specifically for artificial intelligence (AI) workloads. The architecture targets the growing computational demands of modern AI applications, particularly large-scale model training and generative AI. Rubin combines six chips, designed to work together, into a single system, and NVIDIA positions it as a new benchmark for efficiency in AI supercomputing and a foundation for the next generation of AI technologies.

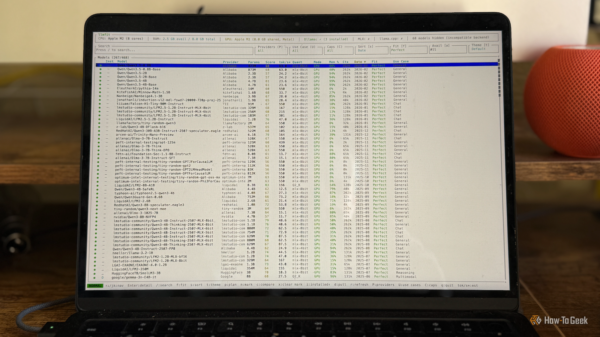

The six components of the Rubin platform are the Vera CPU, the Rubin GPU, the NVLink 6 switch, the ConnectX-9 SuperNIC, the BlueField-4 data processing unit (DPU), and the Spectrum-6 Ethernet switch. Together they cover compute, GPU-to-GPU interconnect, networking, and data processing. The goal is to improve scalability, speed up data transfer, and raise performance on AI workloads. NVIDIA expects the architecture to support larger and more complex machine learning models while shortening both training and inference times.

According to Precedence Research, the AI supercomputer market was valued at USD 3.42 billion in 2025. It is projected to grow from USD 4.30 billion in 2026 to approximately USD 33.95 billion by 2035, a compound annual growth rate (CAGR) of 25.80% from 2026 to 2035. AI supercomputers, which are built for workloads such as deep learning and large-scale data analysis, are becoming a pillar of high-performance computing.
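As a quick arithmetic check on the Precedence Research figures, the CAGR over the nine annual steps from 2026 to 2035 is (end / start)^(1/9) - 1. The short sketch below uses only the start and end values quoted above; the function name `cagr` is our own illustrative helper, not part of any report or library.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1

# USD 4.30B (2026) to USD 33.95B (2035) spans 9 annual growth periods.
rate = cagr(4.30, 33.95, 2035 - 2026)
print(f"{rate:.2%}")
```

The result comes out at roughly 25.8%, consistent with the CAGR quoted in the report.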

NVIDIA CEO Jensen Huang said the launch of the Rubin platform reflects rapidly growing global demand for AI computing infrastructure. The platform introduces several innovations, including the sixth generation of NVLink interconnect technology, advanced transformer engines, confidential (secure) computing capabilities, and features aimed at high system reliability. NVIDIA says these advances are meant to improve operational efficiency and help organizations control the costs of large-scale AI deployments.

Rubin succeeds Blackwell, NVIDIA's previous generation of AI computing architecture. The company claims that systems built on Rubin can substantially lower the cost of AI inference while delivering much higher computational performance. According to NVIDIA, certain configurations can reach up to 50 petaflops, enabling faster training and deployment of sophisticated machine learning models.

The Rubin platform is aimed primarily at hyperscale cloud providers, research laboratories, and enterprise data centers, where it is expected to support a wide range of AI applications. As organizations continue to expand their AI capabilities, Rubin is positioned to shape the next generation of AI-centric computing infrastructure and to support gains in productivity and innovation across many sectors.
