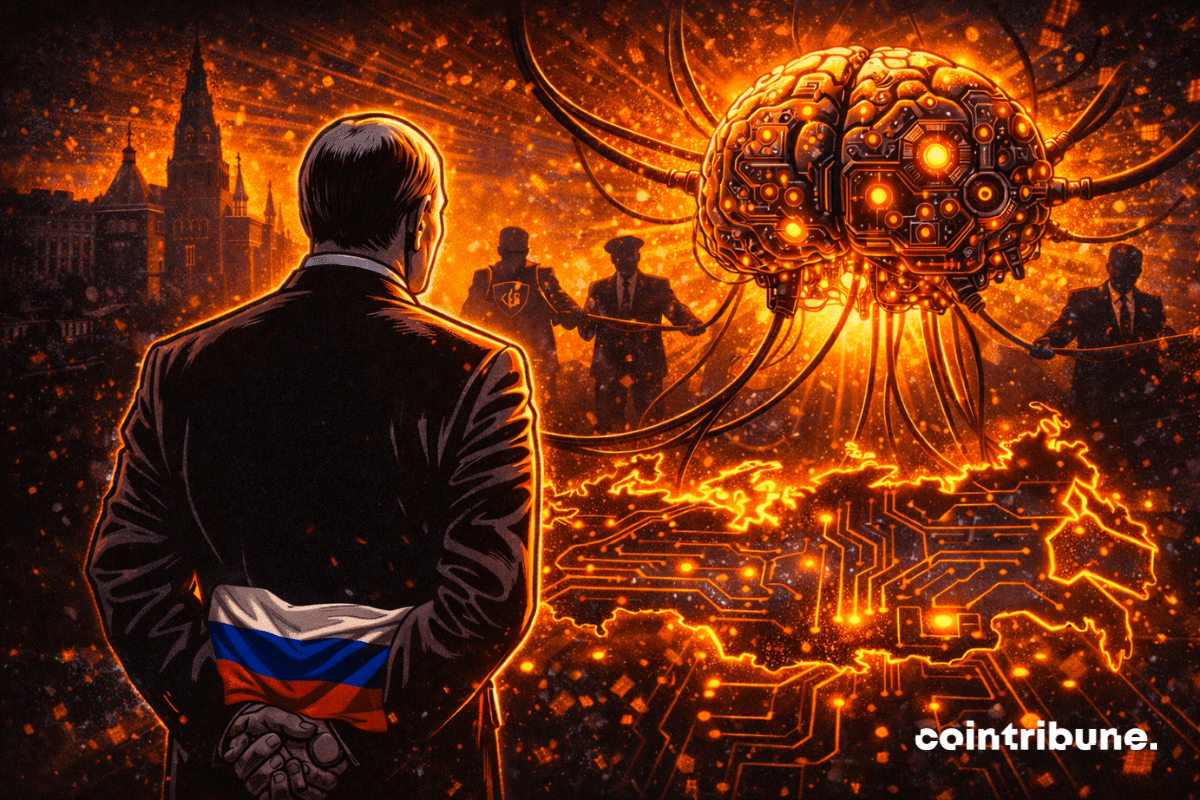

The global race for dominance in artificial intelligence (AI) is intensifying, with the United States, China, and various European countries vying for technological supremacy. In this context, Russian President Vladimir Putin has taken a different approach, aiming not only to participate in the AI landscape but to create a wholly self-sufficient Russian AI ecosystem. His recent legislative proposals classify AI models into three categories (sovereign, national, and trusted) designed to ensure that models developed within Russia are free from foreign influence. However, this ambition may be undermined by Russia’s continued dependence on Western technology.

Under the proposed rules, “sovereign” AI models must be developed exclusively by Russian citizens and trained on data generated within Russia, with no foreign components at all. The “national” category permits the use of open-source solutions, while the “trusted” classification is subject to oversight from the FSB, Russia’s federal security service. A presidential decree has established a commission led directly by Putin to oversee these initiatives, signaling that AI development will now be closely monitored by the state.

Despite these ambitious plans, experts in the Russian technology sector warn that achieving a fully sovereign AI system without leveraging foreign technologies would require hundreds of billions of rubles, a cost that would ultimately be borne by consumers. Furthermore, ongoing Western sanctions have severely restricted access to advanced semiconductors, essential for high-performance computing. A manager from a notable Russian tech company noted, “Totally sovereign platforms practically do not exist on the market today.” Even Sberbank, often touted as the spearhead of Russia’s patriotic AI efforts, relies on foreign open-source models like LLaMA and Mistral.

While the Kremlin enforces its digital sovereignty, Russian AI has also been employed in disinformation campaigns aimed at destabilizing public opinion in Europe. For instance, a deepfake video featuring King’s College London professor Alan Read was circulated, portraying him making derogatory comments about French President Emmanuel Macron. This incident is part of a broader disinformation initiative dubbed “Matryoshka,” which utilizes AI to create manipulative content for social media, targeting European audiences. Reports indicate that these videos often contain linguistic cues indicative of Russian syntax, marking them as products of Russian AI manipulation.

As the Kremlin pursues its vision of a self-reliant AI ecosystem, the gap between declared sovereignty and actual technological reliance raises questions about whether the project is feasible. The heavy investment required to build an independent AI infrastructure, combined with the constraints imposed by sanctions, suggests that the road ahead is fraught with challenges. While the state seeks to control and regulate AI development, the potential for misuse, particularly in disinformation, highlights the broader role of AI in geopolitics.

AI represents a critical frontier for nations striving for technological leadership. However, the operational realities facing Russia serve as a cautionary reminder of the complexities involved in achieving true independence in this rapidly evolving field. The consequences of this digital arms race extend beyond national borders, as the global community grapples with the implications of artificial intelligence on security, ethics, and societal trust.
