Artificial intelligence is reshaping the cybersecurity landscape, as attackers increasingly leverage generative AI to launch sophisticated cyberattacks. This evolution comes as traditional security measures struggle to keep pace with the speed and adaptability of AI-driven threats. While discussions surrounding AI often center on productivity and ethical considerations, the cybersecurity implications are profound and pressing.

Generative AI has transformed phishing from a manageable issue into a highly personalized threat, allowing attackers to craft tailored messages that mimic an organization’s internal communications. This ability stems from the technology’s capacity to analyze breached data and social media, creating targeted lures that reference actual projects and colleagues. The sheer volume of these personalized attacks can overwhelm defenses, rendering traditional training and awareness efforts insufficient.

The automation of phishing attacks marks just one area where AI is escalating the cyber threat landscape. Attackers can generate thousands of contextualized messages, iterating on them in real time to maximize effectiveness without significant human oversight. This shift has led to a concerning erosion of established detection signals that defenders have trained users to recognize, creating an environment where traditional security measures are less effective.

Moreover, the assumption that cyberattacks follow recognizable patterns is becoming increasingly outdated. Many security playbooks rely on static indicators of compromise and observable behavior, which AI-powered attacks can easily circumvent. AI lets attackers adapt their tactics mid-attack, creating a dynamic threat landscape that requires organizations to rethink their entire approach to cybersecurity. Instead of chasing the latest detection tools, companies need to build systems that are inherently adaptable to volatility.
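To make the contrast between static indicators and behavioral signals concrete, here is a minimal Python sketch. It is an illustration only: the blocklist contents, the function names, and the z-score threshold are all assumptions rather than anything a particular product uses. It shows how a hash-based indicator stops matching once a payload changes by a single byte, while a simple baseline comparison can still flag unusual activity.

```python
import hashlib
from statistics import mean, stdev

# Hypothetical blocklist holding SHA-256 hashes of known-bad payloads.
KNOWN_BAD = {hashlib.sha256(b"malicious-payload-v1").hexdigest()}


def matches_static_ioc(payload: bytes) -> bool:
    """Static check: true only when the payload hash is already on the blocklist."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD


def is_behavioral_outlier(history: list[float], observed: float,
                          threshold: float = 3.0) -> bool:
    """Behavioral check: flag a value far from the account's own baseline.

    `history` needs at least two data points. The threshold of three
    standard deviations is an illustrative assumption, not a recommendation.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

For example, `matches_static_ioc(b"malicious-payload-v2")` returns `False` even though the payload differs from the known sample by one character, whereas `is_behavioral_outlier([10, 12, 11, 9, 13, 10, 11], 400)` returns `True` for an account that suddenly sends 400 emails in an hour against a baseline of about 11. A real deployment would use far richer features than one counter, but the design point is the same: the second check does not depend on having seen the attack before.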

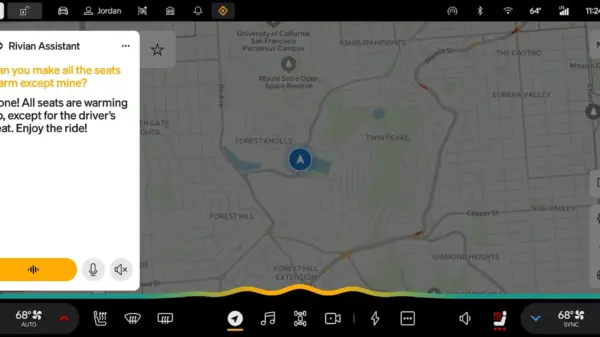

Organizations should adopt a multifaceted approach to defense. This includes shifting toward behavioral and intent-based detection and continuously validating trust in real time. A human-in-the-loop system is crucial, because it ensures that AI-driven alerts prompt investigation rather than automatic responses, particularly in ambiguous contexts. A resilient cybersecurity posture depends on adaptability rather than mere prediction.
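One way to picture the human-in-the-loop idea is as a routing rule placed between the detection model and any response. The Python sketch below is hypothetical: the `Alert` fields, the `Action` names, and the score cutoffs are assumptions chosen for illustration. It sends ambiguous or hard-to-undo cases to an analyst and reserves automatic action for alerts that are both high-confidence and easily reversed.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    LOG_ONLY = "log_only"
    HUMAN_REVIEW = "human_review"
    AUTO_CONTAIN = "auto_contain"


@dataclass
class Alert:
    source: str        # hypothetical label for the system that raised the alert
    score: float       # model's anomaly score, assumed to lie in [0, 1]
    reversible: bool   # whether the proposed response is easy to undo


def route(alert: Alert, low: float = 0.3, high: float = 0.9) -> Action:
    """Decide what happens to an alert; the cutoffs are illustrative assumptions."""
    if alert.score < low:
        return Action.LOG_ONLY
    if alert.score >= high and alert.reversible:
        return Action.AUTO_CONTAIN
    # Everything ambiguous, or risky to automate, goes to an analyst.
    return Action.HUMAN_REVIEW
```

With these defaults, `route(Alert("mail-gateway", 0.6, True))` yields `Action.HUMAN_REVIEW`, and so does a score of 0.95 when the response is not reversible. The default branch is deliberately the human one, so any case the rule does not explicitly recognize ends in investigation rather than an automated response.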

On the defensive front, AI is proving beneficial for triaging alerts and correlating signals across vast datasets. However, reliance on automation can lead to dangerous pitfalls. Security teams may develop false confidence in automated systems, wrongly believing that risks are managed effectively. Additionally, there’s a risk of skill atrophy among analysts who become reliant on automated decisions. Effective security teams regard AI as a force multiplier, using it to surface anomalies and propose hypotheses while retaining human oversight for uncertain or ethically charged decisions.

The evolving threat landscape necessitates an overhaul of cybersecurity education to prepare defenders for AI-native challenges. Traditional training methods often prioritize tools and predefined attack types, which are insufficient in a dynamic threat environment. Education must now emphasize adversarial thinking, scenario-driven learning, and data literacy. This approach ensures that cybersecurity professionals are equipped to adapt to real-time threats generated by AI.

Key skills for future professionals will include the critical evaluation of AI outputs, systems thinking across both technical and human domains, effective communication under uncertainty, and ethical judgment in automated environments. As AI-generated threats become more prevalent, understanding how to question and validate AI systems will distinguish effective defenders from those who are reactive.

Ultimately, organizations that cling to static defenses or treat AI as a panacea will find themselves consistently outpaced by more agile adversaries. The focus must shift toward adaptive security strategies, disciplined use of automation, and education models that prioritize critical thinking and judgment. In an era where machines can generate attacks at unprecedented scale, the strategic advantage will belong to those who can think critically, challenge assumptions, and innovate continuously alongside automation.

As the landscape continues to evolve, the challenge lies not just in keeping pace with AI but in learning how to lead and make informed decisions in collaboration with it.
