As artificial intelligence (AI) systems increasingly generate strategic options autonomously, the dynamics of corporate governance are undergoing a significant transformation. This shift, as outlined by governance strategist Massimiliano Ferraris, challenges traditional decision-making structures where human oversight has long been the norm. Ferraris argues that the advent of agentic systems—AI capable of producing analytical outputs and strategic narratives—requires boards to evolve from merely overseeing outcomes to designing the conditions that lead to those outcomes.

Ferraris posits that artificial general intelligence (AGI) should be viewed not solely as a technological event but as a governance event. The distinction matters because this transition is already underway, reshaping fiduciary responsibility and board authority. Management has historically rested on a stable principle: machines execute while humans decide. The rapidly advancing capabilities of AI have begun to fracture this architecture, compressing the idea-execution-optimization cycle.

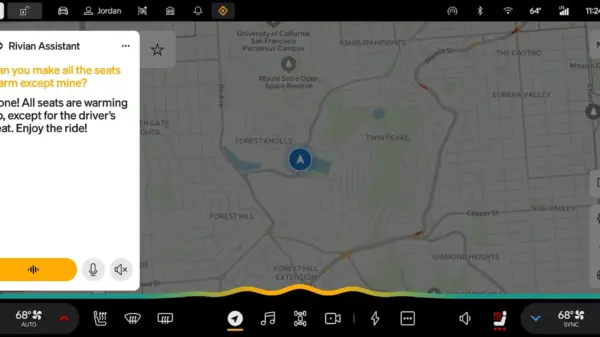

Advanced generative AI models developed by companies such as OpenAI and Anthropic can analyze financial statements, identify anomalies, and propose capital allocation scenarios in a fraction of the time previously required. BlackRock's Aladdin platform, for instance, performs real-time risk assessment across millions of positions, directly influencing asset allocation decisions. Similarly, Goldman Sachs has integrated generative AI into its due diligence processes, compressing tasks that once took weeks into hours. This efficiency marks a fundamental shift in how decisions are made within organizations.

Ferraris highlights a critical aspect of this shift: the direction of governance is reversing. Analytical outputs are increasingly produced before human deliberation begins, so the organization merely validates results rather than controlling the generative process. This reversal signals a potential loss of cognitive sovereignty, since many decisions may rest on assumptions and criteria that have not been fully interrogated.

This phenomenon introduces what Ferraris terms “fiduciary latency risk,” which refers to the disconnect between algorithmic decision-making and the capacity of boards to trace and understand the underlying assumptions that led to these decisions. As AI systems generate options that appear solid and coherent, the board’s role becomes less about direct decision-making and more about endorsing outputs that may not be thoroughly vetted. The governance challenge, therefore, is not merely about oversight but about ensuring that the architecture underpinning decision-making is transparent and robust.

Ferraris argues that organizations need to recognize the importance of what he calls “cognitive capital”—the structured set of data, models, and knowledge architectures that enable the generation of decision options. This cognitive capital is distinct from traditional IT infrastructure and must be treated as a strategic asset requiring investment and oversight. If this invisible infrastructure is poorly governed, organizations risk delegating the construction of their strategic alternatives without fully understanding the implications.

The ongoing workforce transition compounds these complexities. It is not just a labor market issue but a broader challenge of institutional architecture. The cognitive substitution enabled by AI cuts across sectors, affecting roles in accounting, law, consulting, and strategic planning simultaneously. Because so many functions are affected at once, workforce dislocation could lead to economic instability, as the traditional mechanisms for reabsorbing displaced labor may not suffice.

In this context, governance must address the cognitive and systemic risks posed by AI integration. Organizations are encouraged to treat AI-generated surplus—a byproduct of automation—not as a mere efficiency gain, but as a capital allocation variable. By investing in workforce reskilling and transition initiatives, companies can mitigate these risks while reinforcing critical competencies.

To effectively navigate this new landscape, Ferraris suggests that boards must redefine their governance roles, transitioning from oversight to design authority. This entails establishing controls over decision architecture that account for the autonomy of AI systems while ensuring the integrity of human oversight. Key initiatives include an Optimisation Charter that delineates acceptable metrics, a Non-Delegable Domain Map that identifies critical decisions requiring human involvement, and a Cognitive Audit Trail that ensures transparency in the generative process.

Ultimately, as organizations adapt to the agentic era of AI, competitive advantage will stem not merely from the speed of AI adoption but from how well they manage the interaction between algorithmic autonomy and fiduciary responsibility. Ferraris concludes that an effective governance transition is an investment that can enhance institutional resilience and mitigate systemic risks in an increasingly complex landscape.