Enterprise AI is moving from a phase of experimentation to one focused on economic proof, as organizations increasingly integrate conversational AI into customer-facing workflows. By 2026, the pivotal challenge for CEOs and CIOs is no longer whether the technology works effectively, but whether it can deliver reliable, defensible returns at scale.

Recent research from Gallup, together with wider industry commentary, points to a more pragmatic mood across the sector. Voice AI technology is advancing rapidly, but operational, regulatory, and data complexities are proving harder to manage than early enthusiasm suggested. For executives, the gap between technological capability and actual commercial value represents both a strategic risk and an opportunity.

Gallup’s latest initiative reflects this shift, as the firm tests AI-enabled phone interviews across multiple continents, conducting over half a million call attempts in seven languages. This effort aims not only to demonstrate technical feasibility but also to identify where the technology performs consistently and where it falls short.

The evolution is notable; modern voice AI systems are capable of interpreting open-ended speech, asking follow-up questions, and adapting conversations—capabilities that traditional Interactive Voice Response (IVR) systems lack. However, Gallup’s findings also reveal a critical reality often overlooked in boardrooms: these systems comprise multiple layers—speech recognition, orchestration, large language models, voice synthesis, and response coding—each introducing potential failure points.

Industry commentators have echoed these observations. Insights from techUK suggest that while conversational agents are becoming increasingly natural, operational success hinges on less visible engineering work, such as latency control and context retention. The technical frontier is moving, but operational reliability remains inconsistent.

Voice AI sits at the intersection of automation ambitions and customer realities, where cost-to-serve, regulatory risk, and brand experience must be balanced. In theory, AI voice agents offer 24/7 coverage and elastic capacity; in practice, many organizations are seeing slower-than-anticipated returns on investment. Surveys point to a wide gap between AI enthusiasm and tangible financial benefit: a Forrester survey found that only 15% of executives reported improved profit margins from AI, and BCG research found that just 5% of companies had realized widespread value across their organizations.

Forrester also projected that companies might defer as much as a quarter of planned AI spending until 2026 as expectations adjust. The changing outlook is reshaping executive strategy, moving it away from broad exploratory deployments and toward narrowly focused use cases tied to measurable outcomes. The upshot is that AI is increasingly treated as an exercise in capital discipline rather than merely an innovation initiative.

The risk profile in voice AI deployment is uneven. While high-volume service functions—such as customer support and routine account queries—face more immediate automation pressures, complex or emotionally sensitive interactions remain challenging. Several organizations have recalibrated their deployments after finding that full automation can degrade customer experience in critical situations.

Despite these challenges, the potential for voice AI remains compelling. In environments where workflows are structured and integration is robust, these systems can remove friction, shorten response times, and extend service capacity without a proportional increase in staffing. Over time, this could fundamentally alter cost structures in sectors that depend heavily on customer contact.

As deployments move from pilot to full-scale production, the associated risks become clearer. AI systems of this kind are inherently non-deterministic. Gallup notes that AI interviewers interpret open-ended speech rather than matching fixed inputs, so similar answers from different respondents may be handled inconsistently. For regulated sectors, that variability complicates audit trails and quality assurance.

Challenges related to data and context fragility have also surfaced. Real-world cases show that models can struggle with lengthy documents or nuanced rules, issues that are often missed in controlled demonstrations but surface quickly in live operations. Another emerging concern is execution dispersion, a widening gap between firms: while some are successfully industrializing voice AI, others remain stuck at the proof-of-concept stage because of integration complexity or unclear governance.

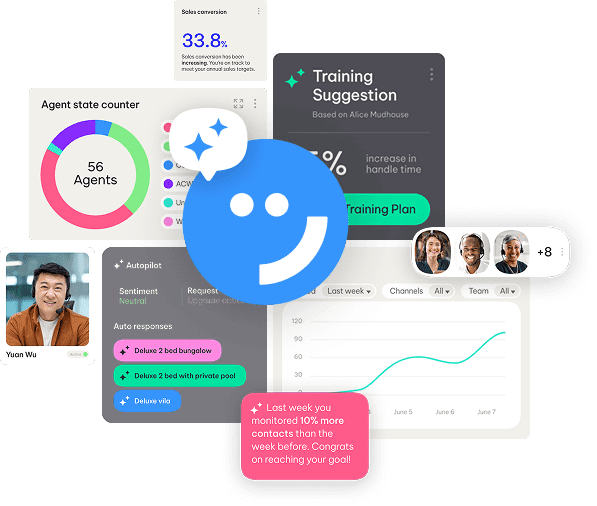

A clearer playbook is emerging among organizations that are making meaningful progress. Leading teams focus initial efforts on high-volume, rules-based workflows with clear success metrics, which improves both visibility into return on investment and organizational confidence. They also treat integration as the principal engineering obstacle, ensuring that voice agents can complete essential tasks, such as updating records or processing payments, rather than merely providing information.

Governance, meanwhile, is moving earlier in the design process: organizations are building compliance controls and audit logging into their voice AI frameworks from the outset rather than retrofitting them later. Finally, a hybrid operating model is taking shape, in which AI assists with structured interactions while human agents manage complex or sensitive cases, mirroring how successful automation technologies have typically scaled.

As conversational AI continues its rapid advancement, the narrative for enterprises in 2026 will increasingly focus on disciplined execution rather than breakthrough capabilities. The organizations poised to extract real value will not be those with the most ambitious pilots, but those that align technology, data, governance, and workflow with clearly defined economic outcomes. Gallup’s methodical, test-driven approach mirrors a growing market mindset where reliability and measurable return supersede novelty as the primary objectives.

For CEOs and CIOs, the imperative is clear: the window for low-cost experimentation is closing. The next competitive divide will separate organizations that can operationalize voice AI with appropriate controls from those still stuck in the gap between proof of concept and production.
