Check Point has warned that cybercriminals could misuse generative artificial intelligence (GenAI) tools as command-and-control (C2) infrastructure. According to the cybersecurity firm, malware can hide its traffic by encoding data into attacker-controlled URLs and asking an AI assistant to visit them. This lets the malware relay sensitive information through what looks like ordinary AI queries, without triggering security alerts.

In its latest research, Check Point highlighted platforms such as Microsoft Copilot and xAI Grok as particularly open to this kind of abuse. Deploying malware is only part of the equation; the harder problem for an attacker is directing that malware and relaying its results without being noticed. Blending malicious traffic with legitimate data is a hallmark of high-quality malware, and it now appears that AI assistants can facilitate that blending.

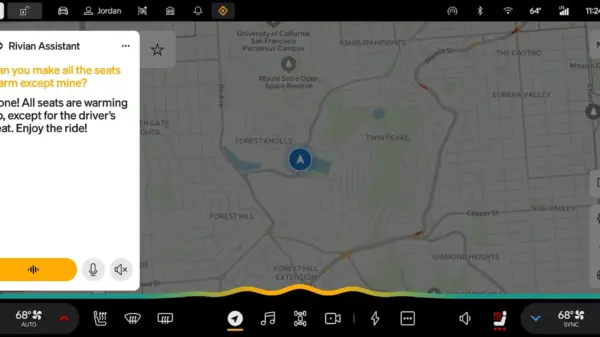

Once a device is compromised, the malware can harvest sensitive data and system information, encode it, and insert it into an attacker-controlled URL. For instance, a URL might look like http://malicious-site.com/report?data=12345678, where the “data=” segment carries the sensitive information. The malware then prompts the AI with a request such as “Summarize the contents of this website.” When the assistant visits the URL, the encoded data reaches the attacker's server, yet the request itself is legitimate AI traffic, which lets it evade detection by security solutions.
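From a defender's side, the URL pattern described above suggests one possible heuristic: inspect prompts sent to AI assistants, extract any URLs, and flag query parameters whose values are long and high in entropy, as encoded data tends to be. The following is a minimal sketch of that idea, not a method described by Check Point. The function names `shannon_entropy` and `suspicious_params`, and the thresholds of 32 characters and 3.5 bits per character, are assumptions chosen for illustration.

```python
import math
import re
from collections import Counter
from urllib.parse import parse_qsl, urlsplit

# Loose URL matcher for free-form prompt text (illustrative, not exhaustive).
URL_PATTERN = re.compile(r"https?://[^\s\"'<>]+")


def shannon_entropy(value: str) -> float:
    """Return the Shannon entropy of a string in bits per character."""
    if not value:
        return 0.0
    counts = Counter(value)
    total = len(value)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def suspicious_params(prompt: str, min_length: int = 32, min_entropy: float = 3.5):
    """Return (url, parameter_name) pairs whose values look like encoded data.

    Both thresholds are assumed starting points and would need tuning
    against real traffic.
    """
    findings = []
    for url in URL_PATTERN.findall(prompt):
        query = urlsplit(url).query
        for name, value in parse_qsl(query, keep_blank_values=True):
            if len(value) >= min_length and shannon_entropy(value) >= min_entropy:
                findings.append((url, name))
    return findings


if __name__ == "__main__":
    # Arbitrary base64-looking stand-in for encoded host data (hypothetical).
    blob = "aG9zdG5hbWU9RklOLVdTLTAxO3VzZXI9YWRtaW47ZG9tYWluPWNvcnAubG9jYWw"
    prompt = (
        "Summarize the contents of this website: "
        "http://malicious-site.com/report?data=" + blob
    )
    for url, name in suspicious_params(prompt):
        print(f"flagged parameter {name!r} in {url}")
```

A short value such as the `12345678` in the example URL above would fall under the length threshold and pass unflagged, so a heuristic like this is best treated as one signal among several, not as a complete detection.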

The situation becomes more dangerous when the malware queries the AI for further instructions based on the harvested data. For example, the malware can use the response to determine whether it is running in a high-value enterprise environment or merely in a sandbox set up for analysis. If it concludes that it is in a sandbox, it can go dormant to avoid detection; otherwise, it can launch the second stage of its operation.

Check Point concludes that once AI services can serve as a “stealthy transport layer,” the same interface can also carry prompts and model outputs. The AI could then act as an external decision engine, paving the way for AI-driven implants and C2 systems that automate triage, targeting, and operational decisions in real time. Threats built this way would be more adaptive and harder to detect.

Threats that abuse technologies like GenAI underscore the need for stronger cybersecurity measures. Organizations must stay vigilant to protect their systems against increasingly sophisticated attacks that leverage AI capabilities. As attackers continue to innovate, the challenge for cybersecurity professionals will be to stay ahead by developing tools and strategies that counter these emerging threats.
