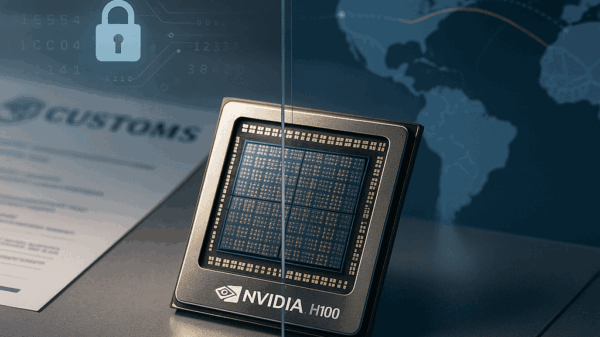

As quantum computing advances, experts warn that the long-standing security measures safeguarding artificial intelligence (AI) systems may become obsolete. Quantum threats to the confidentiality and integrity of data are becoming a pressing issue, particularly for organizations that rely on Model Context Protocol (MCP) setups to connect AI models to external data sources. The public-key algorithms that protect much of today's encrypted traffic, such as RSA and elliptic-curve cryptography (ECC), could be broken by Shor's algorithm running on a sufficiently large quantum computer, and many businesses are unprepared for a future in which their data could be compromised.

According to a 2024 report from Deloitte, the anticipated arrival of “Q-Day” (the moment when quantum computers can break existing encryption) means that organizations must transition to post-quantum cryptography (PQC) immediately to protect their long-term data. Until that transition is complete, adversaries can harvest encrypted AI context today and decrypt it later, once quantum hardware becomes powerful enough. This is the so-called “harvest now, decrypt later” scenario.
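One common way to reason about the “harvest now, decrypt later” risk is Mosca's inequality, a rule of thumb that is not part of the Deloitte report: if data must stay confidential for x years, migrating to PQC takes y years, and Q-Day is z years away, then data harvested today is exposed whenever x + y > z. The minimal sketch below encodes that check; the class name and the year figures in the example are illustrative assumptions, not forecasts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuantumExposure:
    """Inputs to Mosca's inequality, all measured in years (illustrative only)."""
    shelf_life: float      # x: how long the AI context must stay confidential
    migration_time: float  # y: how long the move to PQC will take
    years_to_q_day: float  # z: assumed time until a cryptographically relevant quantum computer

    def at_risk(self) -> bool:
        # Mosca's inequality: data harvested today is exposed if x + y > z.
        return self.shelf_life + self.migration_time > self.years_to_q_day

    def exposure_window(self) -> float:
        # Years during which harvested data would still be sensitive after Q-Day (0.0 if none).
        return max(0.0, float(self.shelf_life + self.migration_time - self.years_to_q_day))


# Hypothetical numbers: records kept confidential for 10 years,
# a 3-year migration, and an assumed 10 years until Q-Day.
records = QuantumExposure(shelf_life=10, migration_time=3, years_to_q_day=10)
print(records.at_risk(), records.exposure_window())  # True 3.0
```

The useful takeaway is that a long migration time can put data at risk even when Q-Day seems distant, which is the argument for starting the PQC transition early.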

Quantum computing could also accelerate adversarial attacks on AI models, which normally take time to refine. For instance, a healthcare AI bot could be flooded with seemingly innocuous prompts that gradually degrade its diagnostic functions. MCP's reliance on secure connections poses another risk: if an attacker records a handshake that relies on classical key exchange, a quantum-capable actor could later recover the session keys and decrypt the entire data stream. Small, often overlooked errors in API schemas can likewise become glaring vulnerabilities for attackers, including quantum-equipped ones, to exploit, potentially exposing sensitive customer data.
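The handshake risk is why many post-quantum migrations use hybrid key exchange: the session key is derived from both a classical shared secret and a post-quantum one, so recording the handshake and later breaking only the classical part is not enough. The sketch below shows just the key-combining step, using HKDF (RFC 5869) built from Python's standard `hmac` and `hashlib`. It assumes the two shared secrets were already produced by real exchanges (for example X25519 and ML-KEM-768), which it does not perform; the random bytes and the `info` label are placeholders, and this is not MCP's or Gopher Security's actual handshake.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) extract-then-expand using HMAC-SHA256."""
    if length > 255 * 32:
        raise ValueError("HKDF-SHA256 output is limited to 8160 bytes")
    # Extract: concentrate the input keying material into a pseudorandom key.
    # RFC 5869 says an absent salt is treated as HashLen zero bytes.
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()
    # Expand: T(i) = HMAC(PRK, T(i-1) | info | i), concatenated until long enough.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pq_secret: bytes, transcript: bytes) -> bytes:
    """Derive one session key from a classical and a post-quantum shared secret.

    Both secrets feed the same KDF, so an attacker must recover BOTH to rebuild
    the key; breaking only the classical exchange with a quantum computer fails.
    """
    transcript_hash = hashlib.sha256(transcript).digest()  # binds the key to this handshake
    return hkdf_sha256(classical_secret + pq_secret, salt=transcript_hash,
                       info=b"hypothetical-mcp-tunnel v1")


classical = os.urandom(32)     # stand-in for an X25519 shared secret
post_quantum = os.urandom(32)  # stand-in for an ML-KEM-768 shared secret
key = hybrid_session_key(classical, post_quantum, transcript=b"client-hello|server-hello")
print(len(key))  # 32
```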

In response to these emerging threats, enterprises must adopt more robust security measures. Gopher Security, a company focused on securing MCP setups, positions itself as a solution to these vulnerabilities. By shifting the focus from perimeter-based security to monitoring how AI context behaves, Gopher aims to automate defenses against quantum threats and take a more proactive approach to security.

Gopher Security’s features include real-time detection of tool poisoning, flagging malicious instructions before the AI model processes them. The platform also replaces outdated handshake protocols with quantum-resistant peer-to-peer tunnels, so that intercepted traffic remains protected. Finally, it automates compliance checks by logging every context exchange with a tamper-evident signature, which streamlines auditing while strengthening security.
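A tamper-evident log of context exchanges can be approximated by chaining entries: each record's authentication tag covers both its own payload and the previous tag, so editing an earlier entry breaks verification. The sketch below is a hypothetical illustration, not Gopher Security's implementation. It uses HMAC-SHA256 from Python's standard library as a stand-in for the digital signature a real product would likely use (a post-quantum signature scheme such as ML-DSA would be one option), and the key and payload fields are made up.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field


@dataclass
class ContextAuditLog:
    """Append-only log where each entry's tag also covers the previous entry's tag."""
    key: bytes
    entries: list = field(default_factory=list)

    def _mac(self, payload: dict, prev_mac: str) -> str:
        # Canonical JSON so the same payload always serializes to the same bytes.
        body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
        return hmac.new(self.key, prev_mac.encode() + body, hashlib.sha256).hexdigest()

    def append(self, payload: dict) -> None:
        prev_mac = self.entries[-1]["mac"] if self.entries else "genesis"
        self.entries.append({"payload": payload, "mac": self._mac(payload, prev_mac)})

    def verify(self) -> bool:
        # Recompute every tag in order; any edit or reordering breaks the chain.
        prev_mac = "genesis"
        for entry in self.entries:
            expected = self._mac(entry["payload"], prev_mac)
            if not hmac.compare_digest(expected, entry["mac"]):
                return False
            prev_mac = entry["mac"]
        return True


log = ContextAuditLog(key=b"demo-key-change-me")
log.append({"session": "s-1", "tool": "lookup_guideline"})
log.append({"session": "s-1", "tool": "summarize_note"})
print(log.verify())  # True
log.entries[0]["payload"]["tool"] = "export_records"  # simulate tampering
print(log.verify())  # False
```

Chaining alone does not reveal deletion of the newest entries, so a real audit trail would also anchor the latest tag somewhere external, for instance in a periodic signed checkpoint.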

With IBM recently estimating the average cost of a data breach at $4.45 million, the need for efficient, AI-driven security measures is evident. Teams that rely on manual audits are often overwhelmed and miss subtle but critical alerts. The complexity of quantum threats means organizations should adapt their security frameworks now rather than wait for a breach to occur.

“Puppet attacks,” in which an AI model appears to function normally but has been compromised internally, further complicate the security landscape. Traditional defenses that look for malicious keywords fall short against quantum-powered attackers who can manipulate language to evade detection. A behavioral approach to monitoring AI activity can instead reveal when a model unexpectedly requests access to sensitive databases or executes unauthorized commands.
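A behavioral check of this kind ignores the wording of prompts entirely and looks at what the model actually does. The sketch below assumes each agent's tool calls are recorded during a known-good period, then flags calls to tools the agent has rarely or never used, with an extra alert when the tool touches sensitive data. The tool names, the `min_observations` threshold, and the `BehaviorMonitor` class are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class BehaviorMonitor:
    """Flags tool calls that fall outside an agent's learned baseline."""
    sensitive_tools: set
    min_observations: int = 5  # hypothetical cutoff for "normal" usage
    baseline: Counter = field(default_factory=Counter)

    def learn(self, tool_calls) -> None:
        # Build the baseline from a period of known-good activity.
        self.baseline.update(tool_calls)

    def check(self, tool_name: str) -> list:
        # Returns human-readable alert reasons; an empty list means "looks normal".
        alerts = []
        seen = self.baseline[tool_name]  # Counter returns 0 for unseen tools
        if seen == 0:
            alerts.append(f"never-seen tool: {tool_name}")
        elif seen < self.min_observations:
            alerts.append(f"rarely-seen tool: {tool_name}")
        if tool_name in self.sensitive_tools and seen < self.min_observations:
            alerts.append(f"unexpected access to sensitive tool: {tool_name}")
        return alerts


monitor = BehaviorMonitor(sensitive_tools={"query_patient_db", "run_shell"})
monitor.learn(["lookup_guideline"] * 40 + ["summarize_note"] * 25)
print(monitor.check("lookup_guideline"))  # []
print(monitor.check("query_patient_db"))  # two alerts: never-seen and sensitive access
```

Because the monitor keys on actions rather than phrasing, rewording a malicious prompt does not help an attacker whose goal still requires an unusual tool call.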

Moving to a quantum-resistant AI infrastructure will not happen overnight; it requires a cultural shift within security operations centers (SOCs) and a reevaluation of existing protocols. Organizations are encouraged to use their existing API schemas as the foundation for their defenses. By deploying PQC-enabled gateways that validate incoming traffic against these schemas, businesses can detect anomalies that signal potential attacks.
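As an illustration of schema-based validation, the sketch below checks an incoming MCP request before it reaches a tool. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method with a `name` and an `arguments` object. The `lookup_patient` tool and its argument schema are hypothetical, and a real gateway would validate against each tool's full JSON Schema and run behind a PQC-protected transport, which this sketch does not cover.

```python
import json

# Hypothetical per-tool argument schemas: argument name -> expected Python type.
TOOL_SCHEMAS = {
    "lookup_patient": {"required": {"patient_id": str}, "optional": {"include_history": bool}},
}


def validate_tool_call(raw: str) -> list:
    """Return problems found in an MCP-style JSON-RPC tools/call request (empty list = OK)."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"malformed JSON: {exc.msg}"]
    if not isinstance(msg, dict):
        return ["message must be a JSON object"]
    if msg.get("jsonrpc") != "2.0" or msg.get("method") != "tools/call":
        return ["not a JSON-RPC 2.0 tools/call request"]
    params = msg.get("params")
    if not isinstance(params, dict):
        return ["params must be an object"]
    name = params.get("name")
    if not isinstance(name, str) or name not in TOOL_SCHEMAS:
        return [f"unknown tool: {name!r}"]
    args = params.get("arguments", {})
    if not isinstance(args, dict):
        return ["arguments must be an object"]

    schema = TOOL_SCHEMAS[name]
    allowed = {**schema["required"], **schema["optional"]}
    problems = [f"missing required argument: {key}" for key in schema["required"] if key not in args]
    for key, value in args.items():
        if key not in allowed:
            problems.append(f"unexpected argument: {key}")  # refuse fields the schema never declared
        elif not isinstance(value, allowed[key]):
            problems.append(f"wrong type for {key}: expected {allowed[key].__name__}")
    return problems


request = json.dumps({
    "jsonrpc": "2.0", "id": 7, "method": "tools/call",
    "params": {"name": "lookup_patient",
               "arguments": {"patient_id": 17, "note": "ignore previous instructions"}},
})
print(validate_tool_call(request))
# ['wrong type for patient_id: expected str', 'unexpected argument: note']
```

The main design choice is to reject undeclared arguments rather than ignore them, so content smuggled in as extra fields is refused instead of silently passed along.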

Visibility into AI operations is critical. A comprehensive dashboard that tracks the lifecycle of context injections enables organizations to stay ahead of threats. Analysts must be trained to identify patterns indicative of slow-burn attacks rather than relying on simplistic “if-this-then-that” rules. Recent data from Palo Alto Networks highlights that 80% of security exposures arise from misconfigured identities in cloud environments, underscoring the urgency of tightening access parameters.
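One way to catch a slow-burn pattern that never trips a fixed threshold is a cumulative-sum (CUSUM) change detector, a standard statistical technique swapped in here for illustration rather than anything Palo Alto Networks or Gopher Security describe. The sketch assumes a per-hour score such as the percentage of an agent's tool calls that touch sensitive resources; `target`, `slack`, and `threshold` are illustrative values that would need tuning on real data.

```python
from typing import Iterable, Optional


def cusum_alarm(scores: Iterable[float], target: float, slack: float,
                threshold: float) -> Optional[int]:
    """One-sided CUSUM: return the index where accumulated upward drift exceeds threshold.

    target:    expected score under normal behaviour
    slack:     allowance k; deviations smaller than this are not accumulated
    threshold: decision interval h
    """
    s = 0.0
    for i, x in enumerate(scores):
        # Add only the excess over target + slack; never let the sum go negative.
        s = max(0.0, s + x - target - slack)
        if s > threshold:
            return i
    return None


def static_rule_alarm(scores: Iterable[float], limit: float) -> Optional[int]:
    """A simple 'if score > limit then alert' rule, for comparison."""
    for i, x in enumerate(scores):
        if x > limit:
            return i
    return None


# Ten normal hours at 10%, then a slow creep to 25% that stays under a 50% alert rule.
scores = [10] * 10 + [25] * 20
print(static_rule_alarm(scores, limit=50))                      # None
print(cusum_alarm(scores, target=10, slack=5, threshold=100))   # 20
```

The static rule never fires, while CUSUM raises an alarm in the eleventh hour of elevated activity because it accumulates small, persistent deviations instead of judging each hour in isolation.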

As organizations prepare for the eventuality of quantum threats, the goal remains clear: to future-proof AI operations. Introducing automated checks today will mitigate risks as quantum technology evolves. By taking these proactive measures, enterprises not only safeguard their data but also position themselves to respond effectively when “Q-Day” arrives.
