At the 1 Billion Summit in Dubai this January, discussions centered on the transformative role of artificial intelligence (AI) in the financial sector, revealing both optimism and underlying concerns. AI’s influence extends beyond mere efficiency and cost reduction; it is reshaping the very foundations of financial services. Before customers even engage with advisers or institutions, AI systems are already shaping their perceptions and trust through personalized feeds, recommendation engines, and automated messaging, marking a significant shift in how financial choices are made.

The summit highlighted the urgency for institutions to address the extent of AI’s influence in financial services. While much emphasis was placed on the scale of reach and engagement, speakers cautioned that scale without governance is merely exposure to risk. The rise of financial creators, individuals who wield significant influence through podcasts, newsletters, and social media, illustrates how AI-driven persuasion has become systemic within the industry. These creators form relationships and garner trust long before consumers interact with traditional financial institutions.

The pressing question for finance leaders has shifted from whether AI-driven influence exists to how it is governed. Influence, which was once considered external to a company’s balance sheet, has now become integral to financial operations. As algorithms dictate reach and personalization enhances relevance, the speed of engagement often outpaces institutional responses, potentially leading to significant risks.

The role of financial creators highlights this paradigm shift. They do more than promote products; they foster direct relationships and community trust. AI amplifies their impact by optimizing content and facilitating rapid monetization, effectively turning marketing into a form of economic leverage. Financial institutions can no longer afford to overlook this influence; it demands the same rigorous governance applied to capital, compliance, and risk management.

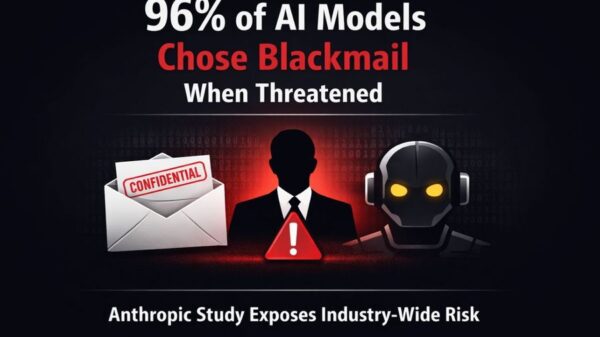

However, one of the most significant risks in this evolving landscape is symmetry: the same systems amplify harm as readily as benefit. AI does not differentiate between good intentions and negative outcomes; it prioritizes engagement over suitability. Consequently, the systems that enhance credibility can also exacerbate misalignment, and failures can be severe when trust erodes rapidly.

As regulation struggles to keep pace with these developments, the potential for blurred disclosures and obscured incentives increases. Customers may realize the implications of monetization only after their behavior has shifted, and the resulting erosion of trust stems less from malice than from a lack of transparency. AI does not introduce new ethical dilemmas in finance; rather, it magnifies existing challenges and reduces the room for ambiguity.

Personalization is often touted as a means of enhancing customer centricity, yet it can obscure the line between guidance and manipulation. AI systems that tailor messages based on behavioral signals and emotional cues risk becoming tools of silent persuasion. It becomes crucial for financial institutions to ensure that when influence is personalized, customers can comprehend the information they receive, understand the motives behind it, and discern whose interests are being prioritized.

The obligation to maintain fiduciary duty extends beyond the advice offered; it encompasses the entire environment in which decisions are made. Systems capable of influencing financial decisions must be transparent, governable, and interruptible by human oversight. Transparency is not merely a best practice; it forms the foundation of trust in financial interactions.

A prevalent misconception shared at the summit was that partnering with creators or third-party platforms mitigates risk. In reality, such partnerships can concentrate risk within institutions. The rapid pace of creator-driven messaging can collide with the slower, more cautious operational tempo of financial institutions, leading to compliance vulnerabilities and potential brand damage. When incidents arise, regulators look to the institution, not the algorithm or partner involved, emphasizing the need for robust governance surrounding AI systems.

Effective governance cannot be an afterthought. Institutions must recognize that vetting, contracts, disclosures, and training are essential elements of operating within an AI-influenced landscape. The most vital takeaway from the summit was not about the technology itself, but rather the discipline required to manage it responsibly.

Success will belong to those institutions that prioritize trust infrastructure alongside influence strategies. Such an approach necessitates treating creators as partners within a regulated framework, rather than viewing them as shortcuts to engagement. As the financial landscape evolves, the gap will likely widen between those that invest in trust as a systemic asset and those that continue to chase fleeting impressions. Institutions that embrace this shift will be better positioned to build lasting portfolios of influence, while others may face volatility and scrutiny in an increasingly complex environment.

AI is not diminishing the need for sound judgment in finance; it is making that need more visible. How institutions choose to govern their influence strategies today will have lasting implications for trust in the future.
