In an age where perception equates to capital, the integrity of online reputations has come under intense scrutiny. Events such as the 2023 Deepfake CEO Scam and recent AI-generated deepfake persona investment scams highlight the troubling ease with which trust can be undermined. These incidents echo the 2019 “WeWork Pre-IPO Hype,” where the power of strategic storytelling significantly distorted valuation metrics. Similarly, the infamous 2017 Fyre Festival exemplified how influencer-driven narratives can overshadow genuine infrastructure and planning.

The dilemma of trust in digital platforms is not new, as evidenced by the 2017 hoax of the fictitious restaurant The Shed at Dulwich. This non-existent establishment climbed to the top of TripAdvisor’s rankings, becoming the No. 1 restaurant in London based solely on fabricated reviews and images. The experiment, conducted by a journalist, exposed a troubling vulnerability in online rating systems and drew widespread media attention as a humorous take on influencer culture. However, the episode also served as a critical wake-up call regarding the economic and social implications of manipulated reputations.

Economics of the Digital Delusion

Digital platforms thrive on user-generated content: ratings, reviews, and comments that ostensibly serve as quality indicators. This feedback aims to reduce information asymmetry, a concept articulated by Nobel laureate George Akerlof to describe how markets fail when buyers lack reliable information about the quality of what sellers offer. Ideally, user-generated reviews restore credibility to the marketplace, but as the journalist behind The Shed at Dulwich discovered, these signals can be easily fabricated.

The algorithms that govern visibility on these platforms reward patterns rather than the truthfulness or quality of content. Once deceptive content passes a certain threshold of visibility, it becomes self-reinforcing: curiosity drives clicks, clicks raise visibility, and visibility lends perceived legitimacy. In this environment, reputation becomes a commodity that can be bought, sold, or manipulated, which makes deception an economically advantageous strategy. When algorithms dictate market success, manipulative practices can seem a rational business choice, distorting consumer decision-making and directing capital toward the most visible rather than the most deserving entities.

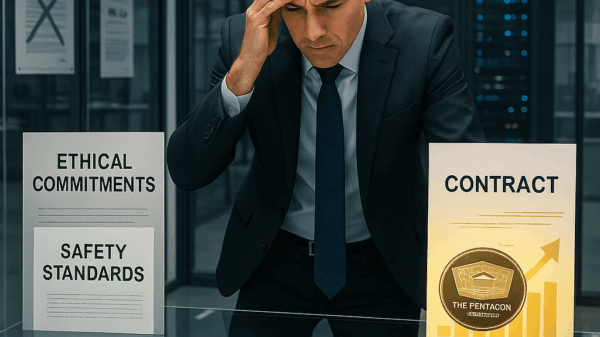

The crisis of trust extends beyond mere consumer deception; it raises questions about the structural integrity of platform capitalism itself. Major companies like Google, Amazon, and Yelp have emerged as arbiters of trust, controlling the visibility of businesses while simultaneously prioritizing engagement metrics that can be influenced by controversy and deceit. This creates a structural strain; platforms must present credibility while maximizing engagement, often leading to reactive measures against fake content rather than proactive solutions.

Reputational manipulation now permeates various sectors, affecting restaurants, e-commerce, and even educational technology. Sellers purchase counterfeit reviews to boost sales ranks, while online influencers cultivate followers for lucrative brand deals. In this landscape, perceived value often outweighs actual quality, creating long-term economic risks: weaker consumer trust and higher transaction costs. Once trust erodes, it is difficult to restore, posing a significant barrier to the growth of the digital economy.

Protection against Algorithmic Deception

Addressing digital deception requires comprehensive solutions. First, platform accountability must evolve beyond reactive moderation to a proactive framework that discourages systemic manipulation. Regulations should incentivize preventive measures rather than merely respond to scandals. Authentic reviews can be reinforced through verified digital identities linked to real transactions, which curbs the anonymity that often facilitates abuse while still respecting privacy protections.

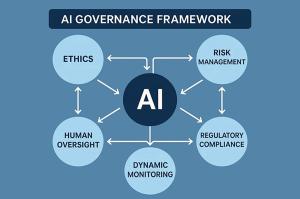

Transparency around algorithms is essential; while proprietary formulas may not be fully disclosed, providing insight into ranking factors can mitigate manipulation. Utilizing artificial intelligence defensively can also be effective—AI technologies can detect bot networks and flag synthetic media, exposing deception through the same tools that enable it. Furthermore, enhancing consumer internet literacy is crucial. Individuals need to learn how to navigate online cues, identify signs of inauthenticity, and challenge algorithm-driven narratives.

As the digital landscape evolves, the economic power of businesses will increasingly hinge on the trustworthiness of digital signals. The fallout from eroded trust can be both slow and costly to repair, indicating that no amount of venture capital or technological advancement can substitute for the foundational element of credibility. In a marketplace where consumers are rightfully skeptical, the challenge remains to foster and maintain trust in an environment rife with potential deception.
