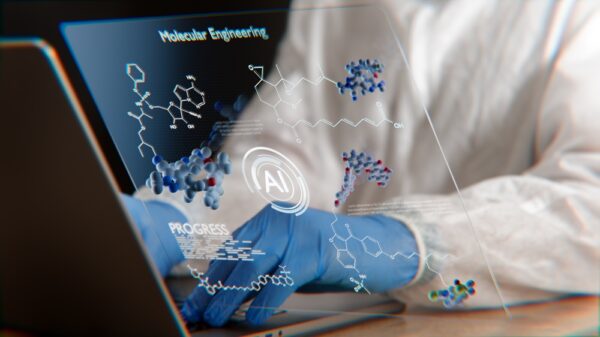

A national team of researchers, including faculty from the Wayne State University School of Medicine, has shown that generative artificial intelligence tools, such as OpenAI’s o3-mini-high and Gemini 2.0, can carry out complex data analyses more quickly and, in some cases, more effectively than traditional computer science teams. The finding could pave the way for improved diagnostic testing for preterm birth, a leading cause of newborn mortality and long-term developmental disabilities in children. Approximately 1,000 babies are born preterm in the U.S. each day.

The researchers used AI to predict preterm birth outcomes from data on more than 1,000 pregnant women. The study, titled “Benchmarking large language models for predictive modeling in biomedical research with a focus on reproductive health,” was published in Cell Reports Medicine. Dr. Adi Tarca, a professor in the Center for Molecular Medicine and Genetics at Wayne State and a co-senior author of the study, emphasized the potential of these AI tools.

The study also involved Dr. Marina Sirota, a professor of pediatrics at the University of California, San Francisco (UCSF), who had compiled microbiome data from approximately 1,200 pregnant women across nine different studies. Despite the large amount of data available, analysis proved challenging, prompting the team to crowdsource help from data scientists through a competition known as the DREAM (Dialogue on Reverse Engineering Assessment and Methods) Challenges.

Dr. Tarca has previously led two DREAM Challenges, aiming to assess the applicability of machine learning and AI in biological research. “We typically organize challenges where we ask a research question, provide genomics data, and then invite the community to generate models to address the problem at hand,” he stated. “We then evaluate the models using a blinded test set and invite the top performing teams to co-author a paper describing the research question and the best solution.”

The recent reproductive health project evaluated whether generative AI programs could replace human analysts in data challenges by generating, from a simple user prompt, the computer code needed to build predictive models from genomics data. The quality of these AI-generated models was compared against solutions developed by human participants in three prior DREAM Challenges.

Dr. Sirota expressed optimism over the findings: “These AI tools could relieve one of the biggest bottlenecks in data science: building our analysis pipelines. The speed-up couldn’t come sooner for patients who need help now,” she remarked. She also noted that the study’s success hinged on open data sharing, leveraging the combined experiences of numerous women and researchers.

The collaborative effort included faculty and students from various institutions, such as Huron High School in Ann Arbor, Michigan, New York University Grossman School of Medicine, NYU Langone Health, the University of Michigan, Michigan State University, and the Pregnancy Research Branch of the Eunice Kennedy Shriver National Institute of Child Health and Human Development (NICHD).

In total, the researchers tasked eight AI tools with developing algorithms to assess pregnancy outcomes using the same datasets as the previous DREAM Challenges, but without any human input. Of the eight large language models tested, four (o3-mini-high, GPT-4o, DeepSeek-R1, and Gemini 2.0) managed to accomplish at least one task. The study found that R code generation was more successful than Python code generation, with OpenAI’s o3-mini-high performing best, successfully completing seven of eight tasks.

The AI chatbots were directed with natural language prompts, akin to how ChatGPT operates, though these prompts were carefully structured to guide the AI in evaluating human health data similarly to how human DREAM teams performed. After executing the AI-generated code on the DREAM challenge datasets, four of the eight AI tools produced prediction models that matched or even surpassed the results generated by human teams.

While the authors acknowledged the potential for misleading results, they underscored that AI’s capabilities could enable researchers to navigate vast data sets more efficiently, allowing them to focus on deeper inquiries. “Thanks to generative AI, researchers with a limited background in data science won’t always need to form wide collaborations or spend hours debugging code,” Dr. Tarca noted. “They can focus on answering the right biomedical questions.”

This research was supported by the March of Dimes Prematurity Research Center at UCSF and by ImmPort, a publicly accessible data repository that provides immunology research data. The datasets used in this study were partially generated with the backing of the Pregnancy Research Branch of the NICHD.
