Apple researchers, in collaboration with the University of Wisconsin–Madison, have introduced a new method for training AI models for image captioning. The approach, detailed in their study titled “RubiCap: Rubric-Guided Reinforcement Learning for Dense Image Captioning,” aims to produce more accurate and detailed image descriptions with smaller models than existing techniques require.

Dense image captioning creates comprehensive, region-specific descriptions of the various elements within an image, in contrast to traditional single-sentence summaries. These richer descriptions improve how visual content can be understood, which can benefit applications ranging from image search to accessibility features.

Current approaches to dense image captioning often struggle to achieve high-quality results because expert-quality annotations are expensive and existing synthetic captioning methods have their own limitations. The researchers noted that while reinforcement learning (RL) holds promise, its success has typically been limited to settings where an output can be checked deterministically, which does not fit the open-ended nature of image captioning.

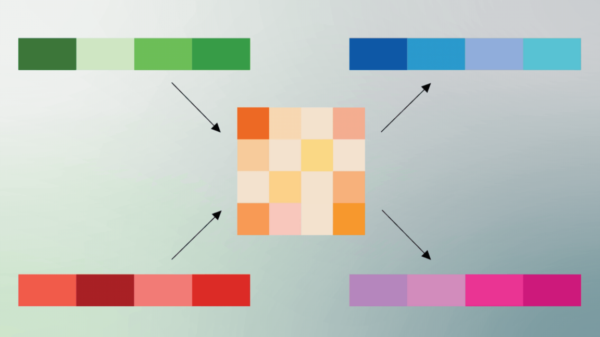

To address these challenges, the RubiCap framework was devised. The researchers began by randomly selecting 50,000 images from two substantial datasets, PixMoCap and DenseFusion-4V-100K. They generated multiple caption options for each image using established vision-language models, including Gemini 2.5 Pro and GPT-5, while the RubiCap model simultaneously produced its own captions.

Subsequently, RubiCap used Gemini 2.5 Pro to analyze each image together with its generated captions, highlighting where the models agreed and where they differed. This analysis yielded clear criteria, or rubrics, for evaluating the captions. Qwen2.5-7B-Instruct then served as a judge, scoring the captions against these criteria to produce a reward signal that guided training. This structured feedback enabled the model to refine its captioning ability without relying on a single definitive answer.
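To make the judging step concrete, here is a minimal Python sketch of how a rubric could be turned into a scalar reward. It is an illustration under stated assumptions, not the paper's implementation: the `Criterion` class, the per-criterion weights, the `rubric_reward` function, and the toy `keyword_judge` are all hypothetical names. In the actual framework the judge is a language model (Qwen2.5-7B-Instruct, per the article), and the exact scoring formula is not described here.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Criterion:
    """One rubric item (hypothetical structure; weights are an assumption)."""
    description: str
    weight: float = 1.0


# A judge takes (caption, criterion description) and says whether the
# caption satisfies that criterion. In practice this would call an LLM.
Judge = Callable[[str, str], bool]


def rubric_reward(caption: str, rubric: Sequence[Criterion], judge: Judge) -> float:
    """Return the weighted fraction of rubric criteria the caption satisfies."""
    total = sum(c.weight for c in rubric)
    if total <= 0:
        raise ValueError("rubric must have positive total weight")
    earned = sum(c.weight for c in rubric if judge(caption, c.description))
    return earned / total


def keyword_judge(caption: str, criterion: str) -> bool:
    """Toy stand-in for an LLM judge: a case-insensitive substring check."""
    return criterion.lower() in caption.lower()


rubric = [
    Criterion("red umbrella", weight=2.0),
    Criterion("wet street"),
    Criterion("two people"),
]
caption = "Two people share a red umbrella on a sunny sidewalk."
reward = rubric_reward(caption, rubric, keyword_judge)
print(reward)  # 0.75: "red umbrella" and "two people" match, "wet street" does not
```

Because the reward is a fraction of satisfied criteria rather than a match against one reference caption, several differently worded captions can earn the same score, which fits the open-ended nature of the task.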

The research yielded three model variants: RubiCap-2B, RubiCap-3B, and RubiCap-7B, with 2 billion, 3 billion, and 7 billion parameters, respectively. According to the researchers, these models outperformed existing approaches across extensive benchmarks, including models with as many as 72 billion parameters.

In particular, the researchers reported that RubiCap surpassed both supervised distillation and previous RL methods on the CapArena benchmark. The 7-billion-parameter model recorded the highest win rates and incurred fewer hallucination penalties. Notably, the smaller 3-billion-parameter model occasionally outperformed its larger counterparts, indicating that quality in dense image captioning does not necessarily demand immense scale.

Side-by-side caption comparisons illustrate the difference: RubiCap consistently delivered more nuanced and accurate outputs than competing models such as Qwen2.5-VL-7B-Instruct. This suggests that the framework represents a significant advance in image captioning, with potential for broader application to vision-language tasks.

The implications of this research extend beyond academic interest; they signal a shift towards more efficient AI models that prioritize quality over size. As dense image captioning finds increasing relevance in various sectors, the ability to generate precise, detailed descriptions with smaller models could enhance user experience in applications ranging from content accessibility to advanced image search functionalities.
