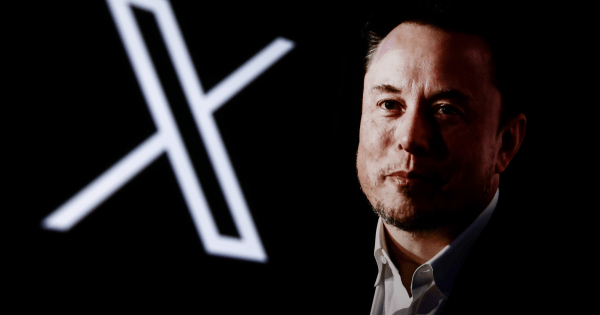

Grok, the AI chatbot from xAI, is facing mounting scrutiny following reports that its image generation tool produced approximately 3 million sexualized images in just 11 days, including 23,000 of minors, according to the Center for Countering Digital Hate. In response, regulators around the world are either limiting access to the platform or opening investigations into its potentially illegal generation of nonconsensual imagery. While the U.S. government has yet to take action at the federal level, the city of Baltimore has filed a municipal lawsuit against the company.

The lawsuit adopts a unique approach, alleging that Elon Musk’s enterprises have violated the city’s Consumer Protection Ordinance. The complaint, as reported by The Guardian, claims that xAI marketed Grok as an all-encompassing AI assistant while failing to disclose the risks and potential harms of using Grok and the X social network.

“Baltimore’s consumer protection laws exist to safeguard residents from exactly this kind of emerging harm,” said City Solicitor Ebony M. Thompson. “When companies introduce powerful technologies without adequate guardrails, the City has both the authority and the obligation to act. We are stepping in now to protect our residents, hold these companies accountable, and prevent these harms from becoming further entrenched as this technology continues to evolve.”

Beyond Baltimore’s legal action, Grok is facing a potential class action lawsuit in the U.S. filed by three teenagers, who allege that their photographs were misused to create child sexual abuse material. These legal challenges underscore broader concerns about the ethics of AI technologies, particularly image generation capabilities that can produce harmful content.

As AI technologies continue to evolve, the incidents involving Grok reflect an urgent need for regulatory frameworks that can adequately address the unique challenges posed by such innovations. The backlash against Grok serves as a crucial reminder for technology developers to consider the ethical ramifications of their products and the potential societal impacts of deploying advanced AI systems without stringent controls.

Looking ahead, the outcomes of the Baltimore lawsuit and the class action suit could set significant precedents in the regulatory landscape for AI. As more jurisdictions assess the implications of unregulated AI applications, the technology sector may face increased pressure to implement robust safeguards against misuse, ensuring that advancements in AI do not come at the expense of public safety and ethical standards.
