LinkedIn announced this week a significant overhaul of its Feed recommendation system. The new system uses large language models (LLMs) and GPU-based serving to rank content for more than 1.3 billion professionals. The engineering blog post, written by Hristo Danchev and published on March 12, 2026, gives the first detailed look at how the platform curates content. That matters because LinkedIn dominates B2B paid media: according to Dreamdata, it accounted for 41% of total B2B paid media budgets in 2025.

LinkedIn described the Feed’s previous architecture as “heterogeneous”: several separate systems operated independently. This fragmented approach produced varied content but was costly to maintain. Each system had its own infrastructure and optimization logic, which made it hard to tune the Feed as a whole. The new unified retrieval pipeline streamlines this by generating embeddings with an LLM. These embeddings capture the semantic proximity between posts and member profiles more accurately, which makes the content members see more relevant.
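To make the idea concrete, here is a minimal sketch of embedding-based retrieval from a single candidate pool. It assumes member and post embeddings already exist as plain Python lists. The post IDs, the 2-D vectors, and the linear scan are illustrative assumptions, not LinkedIn’s code. A production system would query an approximate nearest-neighbor index instead of scanning every post.

```python
import heapq
import math


def normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length so that a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def retrieve_top_k(
    member_emb: list[float], post_embs: dict[str, list[float]], k: int
) -> list[str]:
    """Return the IDs of the k posts most similar to the member embedding.

    All candidates live in one pool (the "unified" part); this linear scan is a
    stand-in for the approximate nearest-neighbor index a real system would use.
    """
    query = normalize(member_emb)
    scored = (
        (sum(q * p for q, p in zip(query, normalize(emb))), post_id)
        for post_id, emb in post_embs.items()
    )
    # nlargest keeps only the k best (score, post_id) pairs, highest score first.
    return [post_id for _, post_id in heapq.nlargest(k, scored)]


# Hypothetical 2-D embeddings; real LLM embeddings have hundreds of dimensions.
posts = {
    "solar-grid-post": [0.9, 0.5],
    "kubernetes-post": [0.0, 1.0],
    "power-electronics-post": [1.0, 0.1],
}
print(retrieve_top_k([1.0, 0.0], posts, k=2))  # ['power-electronics-post', 'solar-grid-post']
```

Because every candidate is scored in the same embedding space, only one similarity function needs tuning. Under the old design, each separate system carried its own optimization logic.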

One key improvement highlighted in the announcement is the handling of “cold-start” scenarios, in which new members join the platform with little activity data. Traditional methods could only pick up basic correlations from profile information. The newly deployed model, trained on a large body of existing data, can infer deeper interests. For example, it might infer that an electrical engineer has a latent interest in renewable energy even without any past engagement on the topic.

LinkedIn’s engineers also struggled with raw engagement counts. Numerical features were passed to the model as ordinary text tokens, so it treated them as arbitrary strings, and item popularity showed little correlation with embedding similarity. The fix was percentile bucketing, which converts raw counts into percentile ranks. Those ranks give a clearer signal of how much engagement a post has received relative to other posts. This change produced a 30-fold increase in the correlation between popularity and embedding similarity, making retrieved content noticeably more relevant.
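A minimal sketch of percentile bucketing follows. It assumes a reference sample of engagement counts and ten equal-width buckets. The `make_bucketizer` helper, the bucket count, and the `engagement_percentile_bucket=` text format are all hypothetical. The announcement does not describe LinkedIn’s actual prompt schema.

```python
import bisect


def make_bucketizer(reference_counts: list[int], num_buckets: int = 10):
    """Return a function that maps a raw engagement count to a percentile-bucket string.

    reference_counts is a hypothetical sample of counts across many posts; it is
    used to estimate where any new count falls in the overall distribution.
    """
    if not reference_counts:
        raise ValueError("need at least one reference count")
    sorted_counts = sorted(reference_counts)
    n = len(sorted_counts)

    def bucketize(count: int) -> str:
        # Fraction of reference posts with a strictly smaller count, in [0.0, 1.0].
        percentile = bisect.bisect_left(sorted_counts, count) / n
        # Equal-width bins over the percentile; clamp so 1.0 lands in the top bin.
        bucket = min(int(percentile * num_buckets), num_buckets - 1)
        # Hypothetical prompt format: a small, ordered vocabulary instead of raw digits.
        return f"engagement_percentile_bucket={bucket}"

    return bucketize


bucketize = make_bucketizer(list(range(100)))
print(bucketize(0), bucketize(50), bucketize(10_000))
```

As raw text, 1,200 and 12,000 differ by a single digit, and nothing in the string says where either count sits in the distribution. After bucketing, each count becomes one of a handful of ordered labels that encode its relative popularity directly.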

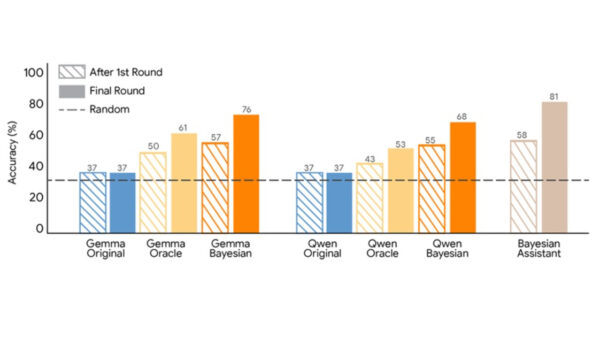

The retrieval system uses a dual encoder architecture. A single shared LLM encodes both member prompts and item prompts into embeddings, and candidates are scored by the cosine similarity between those embeddings. Training used both easy and hard negative examples, which teaches the model to distinguish content that is merely close to relevant from content that is genuinely valuable. This approach improved recall. The announcement also underlines the importance of incorporating a member’s interaction history to improve performance.
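The training objective can be sketched as a softmax contrastive loss (InfoNCE-style) over cosine similarities. This is an assumption: the announcement mentions easy and hard negatives but not the exact loss. The temperature value below is also arbitrary. In the sketch, easy negatives stand in for random posts, and hard negatives for posts that look relevant but that the member did not engage with.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def contrastive_loss(
    member: list[float],
    positive: list[float],
    easy_negatives: list[list[float]],
    hard_negatives: list[list[float]],
    temperature: float = 0.05,
) -> float:
    """Softmax cross-entropy that rewards ranking the positive above every negative.

    All vectors are assumed to come from the same shared encoder.
    Easy negatives: e.g. random posts. Hard negatives: plausible posts the member skipped.
    """
    candidates = [positive] + easy_negatives + hard_negatives
    logits = [cosine(member, c) / temperature for c in candidates]
    # Numerically stable log-sum-exp: subtract the max logit before exponentiating.
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    # Negative log-probability of the positive, which sits at index 0.
    return log_sum_exp - logits[0]


member = [1.0, 0.0]
positive = [1.0, 0.1]
easy = [[0.0, 1.0]]  # clearly unrelated
hard = [[1.0, 0.3]]  # close to the positive, but not engaged with

loss_easy_only = contrastive_loss(member, positive, easy, [])
loss_with_hard = contrastive_loss(member, positive, easy, hard)
print(loss_easy_only < loss_with_hard)  # True
```

The easy negative barely moves the loss because it is already far from the member embedding. The hard negative sits close to the positive and raises the loss. That pressure pushes the encoder to separate “nearly relevant” from “genuinely valuable.”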

At the ranking stage, LinkedIn’s Generative Recommender (GR) model processes more than 1,000 of a member’s historical interactions using a transformer with causal attention. Causal attention lets each interaction attend only to earlier ones. This respects the temporal order of engagement, so the model can recognize and adapt to shifts in a member’s interests over time. A technique called late fusion brings in static context features at a later stage rather than adding them to the long interaction sequence, so they do not inflate computational costs.
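The toy sketch below illustrates two ideas in plain Python: causal masking and late fusion. It is a simplification under stated assumptions. It uses one attention head and no learned projections, so the inputs act as queries, keys, and values. The scoring layer is hypothetical. LinkedIn’s GR model is far larger, and the announcement does not describe its exact fusion design.

```python
import math


def causal_self_attention(seq: list[list[float]]) -> list[list[float]]:
    """Single-head attention with no learned projections (inputs act as Q, K and V).

    Position i may attend only to positions 0..i, so the representation of an
    interaction never depends on interactions that happened after it.
    """
    d = len(seq[0])
    outputs = []
    for i, query in enumerate(seq):
        # Causal mask: score only the current and earlier positions.
        scores = [
            sum(q * k for q, k in zip(query, seq[j])) / math.sqrt(d)
            for j in range(i + 1)
        ]
        peak = max(scores)
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        # Softmax-weighted average of the visible value vectors.
        outputs.append(
            [sum(w * seq[j][c] for j, w in enumerate(weights)) / total for c in range(d)]
        )
    return outputs


def late_fusion_score(
    history: list[list[float]],
    context_features: list[float],
    weights: list[float],
) -> float:
    """Linear score over [final attention output] + [static context features].

    The context features (hypothetical examples: member seniority, device type) are
    appended only after attention, so they do not lengthen the attended sequence.
    """
    member_state = causal_self_attention(history)[-1]
    fused = member_state + context_features  # list concatenation
    if len(weights) != len(fused):
        raise ValueError("weights must match the fused feature length")
    return sum(w * x for w, x in zip(weights, fused))


history = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
altered = [[1.0, 0.0], [0.0, 1.0], [5.0, -3.0]]
# Changing the most recent interaction leaves earlier positions untouched.
print(causal_self_attention(history)[:2] == causal_self_attention(altered)[:2])  # True
```

Attention cost here grows with the square of the history length. The static context features are concatenated once, after attention, so adding more of them costs only a few extra multiply-adds in the final layer.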

Serving this model at scale poses distinct engineering challenges. Traditional ranking models ran on CPUs, but the LLM-based architecture needs the high-bandwidth memory that GPUs provide. LinkedIn’s solutions include a disaggregated architecture that handles feature processing and model inference separately. They also include a custom Flash Attention variant that further improves performance. The resulting system achieves retrieval latency under 50 ms across millions of indexed posts.

The implications extend beyond organic reach. The same ranking logic that governs organic content also influences LinkedIn’s sponsored placements, a channel that delivered a 121% return on ad spend in 2025. Marketers may find the new system more receptive to content aimed at professionals in adjacent or emerging fields, since the model’s understanding of latent interests could open new opportunities for engagement.

LinkedIn’s role in B2B media continues to grow, and it now accounts for the largest share of budget allocations. That makes it crucial for marketers to understand how the Feed prioritizes content. The shift toward LLM-based reasoning marks a departure from traditional keyword competition. A post about “data security” can now connect with broader themes such as regulatory compliance and operational risk. This wider competitive landscape will require content strategists to adapt their approaches significantly.

LinkedIn also emphasizes responsible AI practices. The platform says it audits its models regularly to ensure fair competition among creators. The system is also designed to exclude demographic attributes, relying instead on professional signals and engagement patterns. This transparency matters as the platform continues to evolve and shape B2B marketing strategies.
