Researchers from MIT and other institutions have developed a technique that significantly speeds up the training of reasoning large language models (LLMs), which tackle complex problems by breaking them down step by step. The work, which will be presented at the upcoming ACM International Conference on Architectural Support for Programming Languages and Operating Systems, could make it far more efficient to train these models for applications such as financial forecasting and risk detection in power grids.

The new method addresses the considerable computational and energy cost of training reasoning models, a process that wastes much of the available hardware. While some high-powered processors work through long, intricate queries, others sit idle. The team's technique uses this downtime to train a smaller, faster model that predicts the outputs of the larger reasoning LLM, which then verifies those predictions. This reduces the work the reasoning model must do and accelerates training, and it can double training speed without sacrificing accuracy.

“People want models that can handle more complex tasks. But if that is the goal of model development, then we need to prioritize efficiency,” said Qinghao Hu, an MIT postdoc and co-lead author of the paper detailing the technique. Joining Hu on the paper are co-lead author Shang Yang, along with Junxian Guo, and senior author Song Han, an associate professor at MIT and distinguished scientist at NVIDIA. The research team includes members from ETH Zurich, the MIT-IBM Watson AI Lab, and the University of Massachusetts at Amherst.

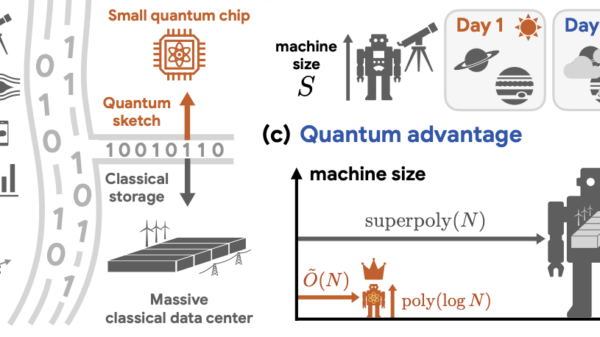

The training bottleneck in reasoning LLMs arises when developers use reinforcement learning (RL) to enable these models to identify and rectify mistakes in their reasoning processes. The RL technique involves generating multiple potential answers to a query and rewarding the best option, updating the model based on these top answers. However, researchers found that the rollout process—generating multiple potential answers—can consume as much as 85 percent of the execution time in RL training. “Updating the model—which is the actual ‘training’ part—consumes very little time by comparison,” Hu noted.
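To see why the rollout share matters, the following is a back-of-the-envelope sketch using Amdahl's law. It takes the 85 percent figure at face value and is not a calculation from the paper. The function name `overall_speedup` and its parameters are hypothetical, introduced only for illustration.

```python
def overall_speedup(rollout_fraction: float, rollout_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only the rollout phase gets faster.

    Both parameters are illustrative assumptions, not values from the paper:
    rollout_fraction is the share of step time spent generating answers,
    rollout_speedup is how many times faster that phase becomes.
    """
    if not 0.0 <= rollout_fraction <= 1.0:
        raise ValueError("rollout_fraction must be between 0 and 1")
    if rollout_speedup <= 0:
        raise ValueError("rollout_speedup must be positive")
    # Time that is left untouched (for example, the model update itself).
    untouched = 1.0 - rollout_fraction
    # New total time, normalised so that the original step takes 1.0.
    new_total = untouched + rollout_fraction / rollout_speedup
    return 1.0 / new_total


if __name__ == "__main__":
    for factor in (1.5, 2.0, 4.0):
        print(f"rollout {factor}x faster -> step {overall_speedup(0.85, factor):.2f}x faster")
```

Under these assumptions, making the rollout twice as fast shortens the whole training step by a factor of roughly 1.74, and no rollout speedup, however large, can push the step past about 6.67x, because the remaining 15 percent is untouched.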

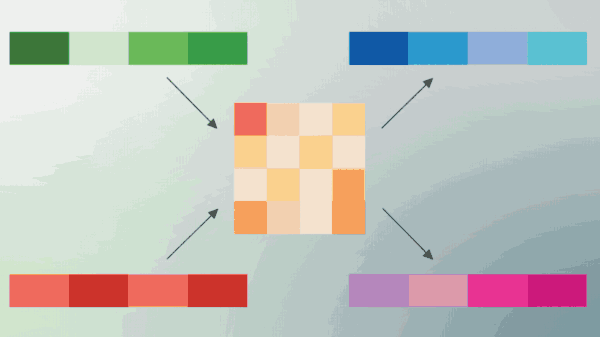

This bottleneck occurs because all processors in the training pool must finish their responses before the system can advance to the next step. Consequently, processors generating shorter responses sit idle while they wait for others to complete longer ones. The team sought to mitigate this issue using a technique known as speculative decoding, in which a smaller model, called a drafter, rapidly predicts future outputs of the larger model. The larger model then verifies the drafter's predictions. Because it can check a batch of drafted tokens in a single pass rather than generating each token one at a time, the rollout finishes faster.
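The draft-then-verify loop can be illustrated with a minimal greedy sketch. It is not the researchers' implementation: the models are toy functions over integers, every name (`speculative_decode`, `toy_target`, `toy_drafter`, `draft_len`) is hypothetical, and verification is simulated with one target call per position where a real system would score all drafted tokens in a single batched forward pass.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]  # maps a context to the next token


def speculative_decode(target: NextToken, drafter: NextToken,
                       prompt: List[Token], max_new_tokens: int,
                       draft_len: int = 4) -> Tuple[List[Token], int]:
    """Greedy speculative decoding; returns (new tokens, verification passes)."""
    tokens = list(prompt)
    limit = len(prompt) + max_new_tokens
    passes = 0
    while len(tokens) < limit:
        # 1. The cheap drafter guesses several tokens ahead.
        draft: List[Token] = []
        for _ in range(min(draft_len, limit - len(tokens))):
            draft.append(drafter(tokens + draft))
        # 2. The target checks the guesses. Counted as ONE pass, because a
        #    real target model would verify all positions in parallel.
        passes += 1
        for guess in draft:
            expected = target(tokens)
            tokens.append(expected)  # always keep the target's own token
            if guess != expected:
                break  # first mismatch: discard the rest of the draft
        else:
            # Every guess was accepted; the same pass yields one bonus token.
            if len(tokens) < limit:
                tokens.append(target(tokens))
    return tokens[len(prompt):], passes


# Toy stand-ins (assumptions for illustration only).
def toy_target(context: List[Token]) -> Token:
    return (3 * context[-1] + 1) % 7


def toy_drafter(context: List[Token]) -> Token:
    # Agrees with the target except after token 5, to force some rejections.
    return 0 if context[-1] == 5 else toy_target(context)


if __name__ == "__main__":
    out, passes = speculative_decode(toy_target, toy_drafter, [1], 12)
    print(out, "in", passes, "target passes")
```

Because the target's own token is kept at every position, the output is identical to what the target would have produced alone; the drafter only changes how many target passes are needed. That also suggests why a drafter that drifts away from the target becomes less useful: more guesses are rejected and fewer tokens are accepted per pass.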

Traditionally, drafter models are trained only once and remain static, which is impractical for reinforcement learning where models are updated frequently. To address this limitation, the researchers developed a flexible system termed “Taming the Long Tail” (TLT). This system includes an adaptive drafter trainer that utilizes idle processor time to train the drafter model on the fly, maintaining alignment with the target model without incurring extra computational costs. The second part of TLT, an adaptive rollout engine, optimizes the speculative decoding strategy based on the features of the training workload.

The drafter model is lightweight by design, so it can be trained quickly. TLT also reuses components from the reasoning model training process, further boosting performance. “As soon as some processors finish their short queries and become idle, we immediately switch them to do drafter model training using the same data they are using for the rollout process,” Hu explained.
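The idea of filling idle time can be sketched with a small planning function. This is a simplified illustration under stated assumptions, not TLT's scheduler: it assumes every worker waits at a barrier until the slowest rollout finishes and that one drafter training step has a fixed cost. The names `WorkerPlan`, `plan_idle_training`, and `drafter_step_seconds` are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkerPlan:
    worker_id: int
    rollout_seconds: float  # time this worker spends generating responses
    idle_seconds: float     # time it would otherwise wait at the barrier
    drafter_steps: int      # drafter training steps that fit in the idle time


def plan_idle_training(rollout_seconds: List[float],
                       drafter_step_seconds: float) -> List[WorkerPlan]:
    """Estimate how many drafter training steps fit into each worker's wait.

    Assumption: the step ends when the slowest worker finishes, and each
    drafter training step costs a fixed drafter_step_seconds.
    """
    if drafter_step_seconds <= 0:
        raise ValueError("drafter_step_seconds must be positive")
    if not rollout_seconds:
        return []
    barrier = max(rollout_seconds)  # the longest response sets the pace
    plans = []
    for worker_id, busy in enumerate(rollout_seconds):
        idle = barrier - busy
        steps = int(idle // drafter_step_seconds)
        plans.append(WorkerPlan(worker_id, busy, idle, steps))
    return plans


if __name__ == "__main__":
    for plan in plan_idle_training([10.0, 40.0, 25.0, 100.0], 5.0):
        print(plan)
```

In this toy setting the worker with the longest response gets no drafter work at all, while workers that finish early absorb the training. This mirrors the article's point that the drafter is kept up to date using time that would otherwise be wasted.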

When they tested TLT across several reasoning LLMs, the researchers reported training speed improvements ranging from 70 to 210 percent while maintaining accuracy. As an added benefit, the trained drafter model could itself be used for efficient deployment.

Looking ahead, the team aims to integrate TLT into a broader array of training and inference frameworks while exploring new RL applications that could benefit from this accelerated approach. “As reasoning continues to become the major workload driving the demand for inference, Qinghao’s TLT is great work to cope with the computation bottleneck of training these reasoning models. I think this method will be very helpful in the context of efficient AI computing,” Han remarked.

This research is funded by the MIT-IBM Watson AI Lab, the MIT AI Hardware Program, the MIT Amazon Science Hub, Hyundai Motor Company, and the National Science Foundation.
