As universities across the globe integrate generative AI tools like ChatGPT into their curricula, a critical conversation is emerging regarding the impact of such technologies on student learning. While many instructors assume students are already using these tools, some have started mandating their use in coursework. However, this shift raises an important question: Are educators inadvertently hindering students’ critical thinking skills by requiring reliance on machines?

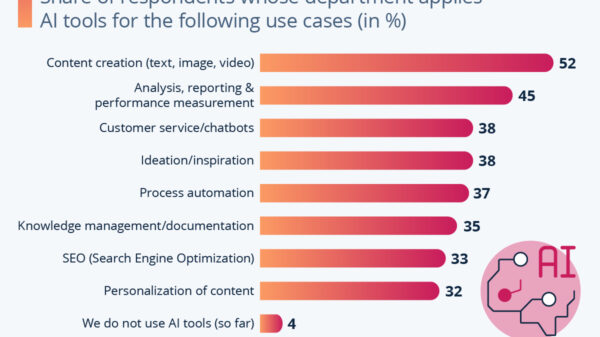

A growing body of research indicates potential harm. A 2025 study by Michael Gerlich found a significant negative correlation between frequent use of generative AI and critical thinking abilities, particularly among younger users who may lack foundational reasoning skills. The adverse effects of AI dependency were notably less pronounced among participants with more advanced education, suggesting that those still developing their cognitive capabilities are particularly vulnerable.

Professional organizations are echoing these concerns. The Institute of Electrical and Electronics Engineers (IEEE) Computer Society has highlighted the risks associated with AI-driven cognitive offloading, the outsourcing of mental tasks to machines. This trend poses significant challenges for educators tasked with developing students’ analytical capabilities, as reliance on AI tools may prevent students from engaging in the very reasoning processes they need to learn.

Critical thinking is an active, not passive, endeavor. When students turn to generative AI for tasks like dissecting arguments or evaluating evidence, they potentially forfeit the opportunity to engage in independent reasoning. The risk lies in their tendency to equate AI-generated outputs with genuine understanding, leading to a form of cognitive borrowing that may dilute their learning experience.

This situation presents an ethical dilemma for educators. Professors have a duty of care to their students and are responsible for the foreseeable outcomes of their teaching methods. If emerging evidence suggests that mandating the use of generative AI tools undermines critical thinking—particularly among students in need of these skills—the requirement could inadvertently cause more harm than good.

Consider the analogy of a foreign language class, where requiring students to use Google Translate for assignments would defeat the purpose of learning the language itself. Similarly, AI chatbots function as translation engines for reasoning, converting prompts into arguments without the cognitive work that fosters true comprehension. When convenience is prioritized over cognitive engagement, students may lose out on the intellectual rigor necessary for developing logical reasoning.

Proponents of mandated AI use often argue it promotes equity, ensuring that all students become proficient with tools essential for the future workforce. However, this perspective overlooks a crucial reality: students who are still building their academic skills are at the greatest risk of becoming overly reliant on AI. Gerlich’s findings affirm this concern, suggesting that making generative AI compulsory could exacerbate existing disparities rather than equalize them. Students with weaker skills may be encouraged to delegate their thinking to chatbots instead of enhancing their own capabilities.

Beyond cognitive offloading, informed consent is another critical consideration. Students must understand that generative AI tools, designed to mimic human reasoning, can subtly alter their cognitive habits. If educators require the use of these systems, they owe it to their students to provide a comprehensive overview of associated risks.

Importantly, this is not a call for a blanket ban on generative AI. Students are likely to use these tools regardless of classroom policies. Instead, educators can create assignments that prioritize the process of reasoning, employing methods such as oral defenses, argument maps, and evidence-tracing tasks that make critical thinking visible and assessable.

Additionally, conducting “offloading audits” before assigning academic work could help identify potential pitfalls. Three questions can guide assignment design: whether the task requires traceable reasoning steps, whether AI-generated responses could pass for deeper understanding, and whether there are alternative pathways to demonstrate competence. If the answers suggest that a task invites offloading, educators should consider redesigning it.

Ultimately, professors must continually assess whether tasks necessitate independent student performance. In courses focused on critical thinking, the answer is often yes. Mandating AI use in these contexts may therefore be counterproductive. Just as individuals do not improve their physical strength by allowing machines to lift weights for them, students will not enhance their thinking skills by relying on chatbots for cognitive tasks.

The mission of higher education is not to chase after technological trends but to cultivate intellectual habits that endure beyond the lifespan of current tools. As evidence mounts suggesting that requiring generative AI use may do more harm than good, educators should embrace the guiding principle of responsible teaching: First, do no harm.

Moti Mizrahi, Ph.D., is a professor of Philosophy of Science and Technology at the Florida Institute of Technology in Melbourne, Florida.
