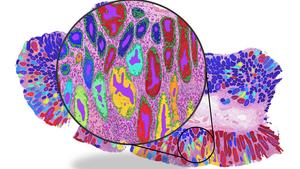

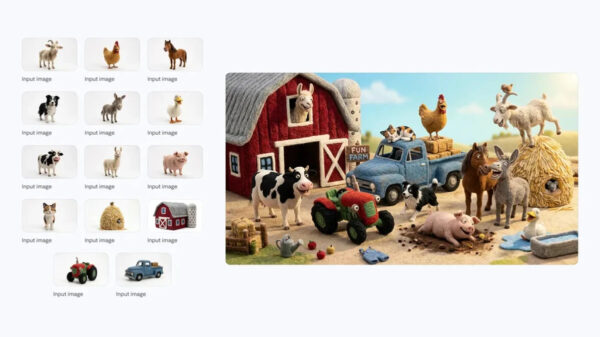

Starting March 1, 2026, Vietnam's 2025 Law on Artificial Intelligence, No. 134/2025/QH15, will impose strict transparency requirements on AI-generated content. The law requires any image or video created or altered by artificial intelligence to carry a clear, easily recognizable label that distinguishes it from authentic content.

Under Clause 4, Article 11 of the law, content from AI systems that simulates or impersonates real individuals, whether through audio, images, or video, must be labeled appropriately. For creative works such as films and artistic pieces, the labeling must be applied in a way that does not detract from the audience's experience. The objective is to keep the origin of the content clear and so reduce confusion over what is real and what is AI-generated.

The new regulations also mandate that all AI-generated materials must be marked in a machine-readable format as set forth by the government. This step aims to facilitate the identification of such content in digital environments, enhancing transparency across platforms. Specific guidelines regarding the forms of notification and labeling will be detailed by the government in forthcoming regulations, further outlining how these requirements will be enforced.
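Because the government has not yet published the required format, any concrete example is necessarily speculative. As a rough illustration of what a machine-readable mark could look like, the sketch below builds a small JSON label tied to the content by a SHA-256 hash. The field names (`ai_generated`, `generator`, `media_type`, `sha256`) and both helper functions are hypothetical and are not taken from the law or any official guidance.

```python
import hashlib
import json


def build_ai_label(content: bytes, generator: str, media_type: str) -> str:
    """Return a JSON label for a piece of AI-generated content.

    Every field name here is an assumption made for illustration;
    the official format has not been specified.
    """
    label = {
        "ai_generated": True,
        "generator": generator,    # hypothetical: name of the AI system used
        "media_type": media_type,  # e.g. "image/png" or "video/mp4"
        # The hash binds the label to these exact bytes.
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    return json.dumps(label, sort_keys=True)


def is_labeled_ai(label_json: str, content: bytes) -> bool:
    """Check that a label marks the content as AI-generated and matches its bytes."""
    label = json.loads(label_json)
    return (
        label.get("ai_generated") is True
        and label.get("sha256") == hashlib.sha256(content).hexdigest()
    )


if __name__ == "__main__":
    media = b"example image bytes"
    label = build_ai_label(media, "demo-model", "image/png")
    print(label)
    print(is_labeled_ai(label, media))
```

A platform could read such a label automatically when content is uploaded, which is the kind of detection the machine-readable requirement appears intended to support. A real deployment would follow whatever format the forthcoming regulations prescribe.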

Moreover, the law stipulates that if AI-generated content, whether text, audio, image, or video, could create confusion about the authenticity of events or individuals, the entity deploying that content must provide a clear notification when it is released. This measure is a safeguard against misinformation and reinforces accountability among creators and distributors of AI-generated media.

In addition to labeling requirements, the law places a significant emphasis on the responsibilities of developers, providers, deployers, and users of AI systems. They are required to ensure the safety, security, and reliability of their technologies, including timely detection and remediation of any incidents that could cause harm to individuals, property, or societal order.

The implementation of this law comes amid growing concerns surrounding the use of artificial intelligence in media and communication. As advancements in AI technology continue to blur the lines between reality and simulation, there is an increasing demand for regulations that protect the public from potential deception. The 2025 Law on Artificial Intelligence aims to address these concerns by establishing a framework that promotes transparency and accountability in AI-generated content.

As AI technologies evolve and become more integrated into daily life, the implications of this legislation will extend beyond mere compliance. It will likely influence industry standards and practices, shaping how creators approach content production in a landscape where authenticity is paramount. The law not only reflects the urgent need for transparency but also paves the way for a more informed public discourse surrounding artificial intelligence and its capabilities.
