As regulatory scrutiny surrounding artificial intelligence intensifies, companies are grappling with heightened compliance risks. A pivotal question is emerging in the business landscape: should organizations appoint an AI Compliance Officer, or can they rely on robust governance frameworks to navigate this evolving regulatory terrain?

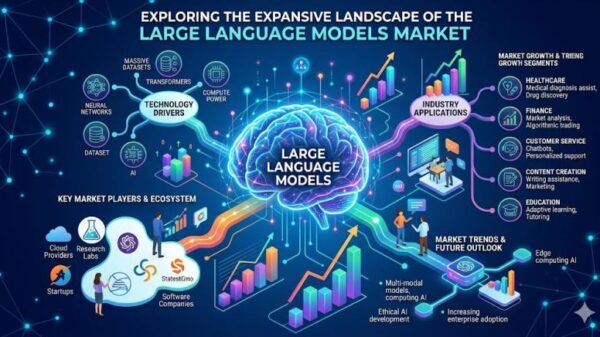

The demand for compliance measures has surged as governments worldwide seek to establish guidelines to manage the potential risks associated with AI technologies. With the European Union’s AI Act and various national initiatives gaining momentum, firms face a complex web of legal obligations that can vary significantly by jurisdiction. These regulations aim to mitigate risks ranging from algorithmic bias to data privacy violations, pushing companies to evaluate their internal structures closely.

In response, some organizations are considering the establishment of dedicated roles focused exclusively on AI compliance. An AI Compliance Officer could serve as a centralized figure responsible for ensuring adherence to regulations, developing protocols, and training staff on compliance matters. This approach could foster a culture of accountability and empower businesses to respond swiftly to regulatory changes.

However, the necessity of such a position is not universally accepted. Critics argue that strong governance frameworks coupled with existing compliance teams may suffice. By integrating AI compliance into broader risk management strategies, companies could leverage their current resources without incurring the additional costs associated with a dedicated officer. This perspective emphasizes the importance of cross-departmental collaboration, where legal, IT, and operational teams work together to address compliance challenges.

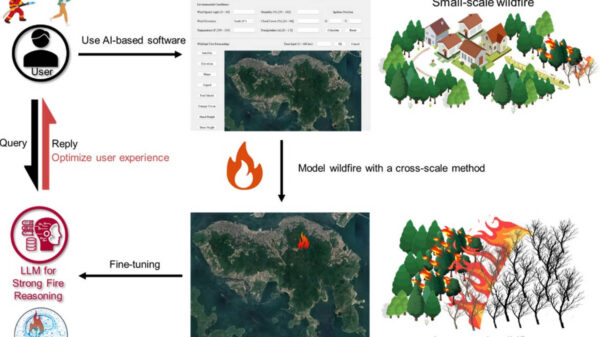

Proponents of a dedicated role contend that the rapid evolution of AI technology warrants specialized oversight. Given the unique challenges posed by AI, including its capacity for autonomous decision-making, a focused compliance officer could ensure that ethical standards and regulatory obligations are consistently met. This role could become increasingly vital as businesses scale their AI initiatives, particularly in sectors like finance, healthcare, and transportation, where the stakes are especially high.

The discussion around AI compliance roles is unfolding against a backdrop of increasing public concern regarding the ethical implications of AI. Incidents of biased algorithms and data breaches have heightened scrutiny from both regulators and consumers, prompting businesses to reassess their commitment to responsible AI deployment. Companies that fail to proactively address compliance risks may face not only legal repercussions but also reputational damage in an era where public perception significantly influences brand loyalty.

As organizations navigate this complex landscape, they must also consider the implications of their decisions on innovation. Striking a balance between compliance and creativity in AI development will be crucial as firms strive to harness the benefits of these technologies while adhering to regulatory standards. An AI Compliance Officer could help strike this balance, allowing companies to innovate responsibly.

Looking ahead, the discourse surrounding AI compliance is likely to evolve as regulatory frameworks mature and the technology continues to advance. Organizations will need to remain agile, adapting their compliance strategies in response to ongoing legal developments and societal expectations. Whether through a dedicated officer or an integrated governance approach, the pursuit of compliance in the realm of AI will be central to fostering a sustainable and ethical technology ecosystem.
