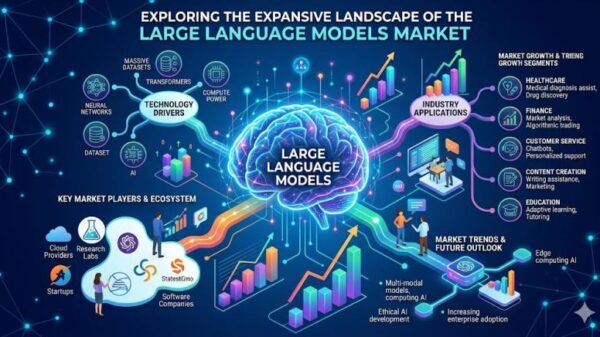

As artificial intelligence (AI) increasingly integrates into various sectors, from healthcare to finance, the question of liability in the event of AI-related harm has gained prominence. The intersection of technology and law is complex, particularly as AI systems are employed for high-stakes decision-making. With neural networks diagnosing diseases, approving loans, and even making employment decisions, the consequences of failures can be dire. This has raised critical inquiries about accountability: when AI causes harm, who is responsible?

In Ontario, Canada, a personal injury lawyer and Doctor of Business Administration candidate is frequently confronted with the issue of assigning blame when AI systems malfunction. Legal frameworks built on the principles of negligence, causation, and foreseeability struggle to adapt to the unique challenges posed by AI. As AI systems become more autonomous, the traditional understanding of responsibility becomes muddled.

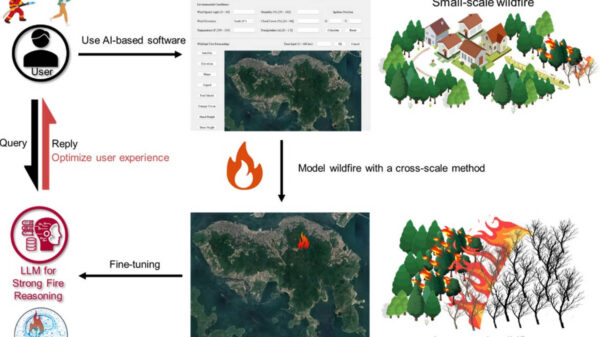

The role of AI has expanded far beyond simple automation, now penetrating vital areas like healthcare, transportation, and policing. Current models often rely on deep neural networks capable of detecting complex patterns in large datasets, but their inner workings remain largely opaque even to their developers. This opacity, and the unpredictability that comes with it, presents a significant challenge to legal doctrines that have historically relied on foreseeability and human intention, creating a tension between innovation and accountability.

Legal scholars have identified a “liability gap” in the governance of AI, complicating the established elements of tort law: a duty of care, a breach of that duty, causation, and damages. Developers may lack knowledge about how their models will behave once deployed, and many organizations utilize third-party machine learning systems. The end-user often faces an opaque decision-making process, making it difficult to pinpoint responsibility.

In personal injury cases, courts traditionally evaluate whether a defendant acted reasonably. However, how can reasonableness be assessed when decision-making is partially delegated to probabilistic models? The challenge lies in the fragmented nature of responsibility among various actors involved in the AI ecosystem, including data providers, software developers, and the organizations that utilize AI outputs.

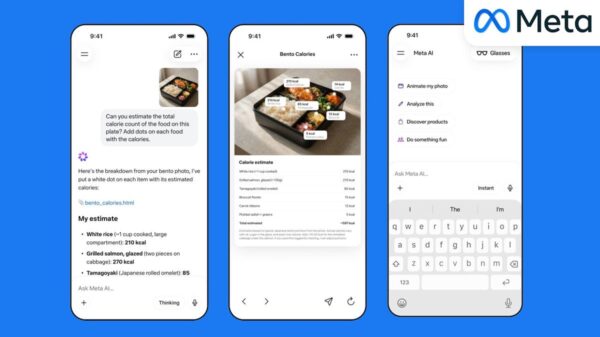

The issue of explainability further complicates matters. Deep learning systems often operate as “black boxes,” obscuring their internal decision-making processes. In cases where a medical AI misdiagnoses a condition, potential points of fault could include the training methods, the data used, and the reliance placed on automated outputs by healthcare professionals. Legal literature suggests a need to differentiate between causal responsibility, role responsibility, and liability responsibility to effectively attribute blame in such scenarios.

Litigation surrounding autonomous vehicles provides a case study in how courts may approach AI-related harm. Courts have begun to apply traditional principles of negligence and product liability to injuries caused by these technologies. Recent cases have seen juries apportioning liability between human drivers and technology companies, highlighting the difficulty of determining responsibility in the context of AI. Should liability rest with the vehicle manufacturer, the software developer, the human operator, or the supplier of the data used to train the algorithm?

There is an ongoing debate regarding whether traditional negligence frameworks or stricter liability regimes are more appropriate for AI-related cases. Some argue for strict liability, suggesting that injured parties should not bear the burden of proving fault when dealing with highly complex technological environments. In contrast, others believe that negligence law can adapt to reflect technological changes, emphasizing the need for a balance between innovation and accountability.

From a business standpoint, AI liability represents a significant risk management issue. Organizations deploying AI systems must proactively address questions surrounding contractual risk allocation, professional liability insurance, and regulatory compliance. The role of insurance will be crucial in defining accountability; some scholars suggest that a combination of insurance and tort law may be the most effective way to address AI-related harms.

Ethical considerations also play a critical role in the discussion of AI governance. Key principles such as fairness, transparency, accountability, and explainability are essential in shaping the legal frameworks surrounding AI. However, legal obligations do not always align with ethical responsibilities. Recent scholarship advocates for more comprehensive frameworks that connect design decisions with legal outcomes, ensuring that those harmed by AI technologies have recourse.

As AI systems evolve, the future of negligence law will likely adapt to a landscape where decision-making authority increasingly resides with machines. Courts are expected to continue applying existing legal doctrines to these new technologies, focusing on the actions of the individuals behind AI systems rather than the systems themselves. The question remains: who is the risk creator, and who can best prevent harm from occurring?

Until legislatures establish comprehensive regulatory frameworks, courts will have to navigate the complexities of AI liability by applying established legal principles to novel technologies. While neural networks may be a recent development, the fundamental principles of negligence remain deeply rooted in legal tradition.