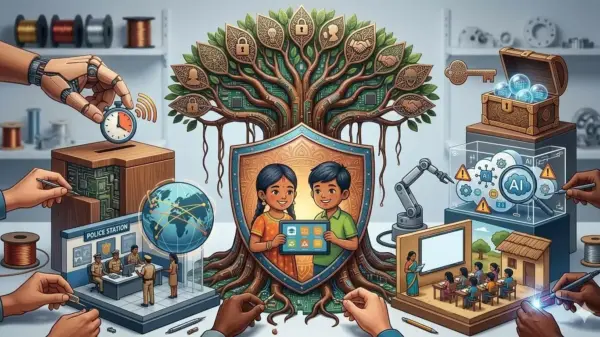

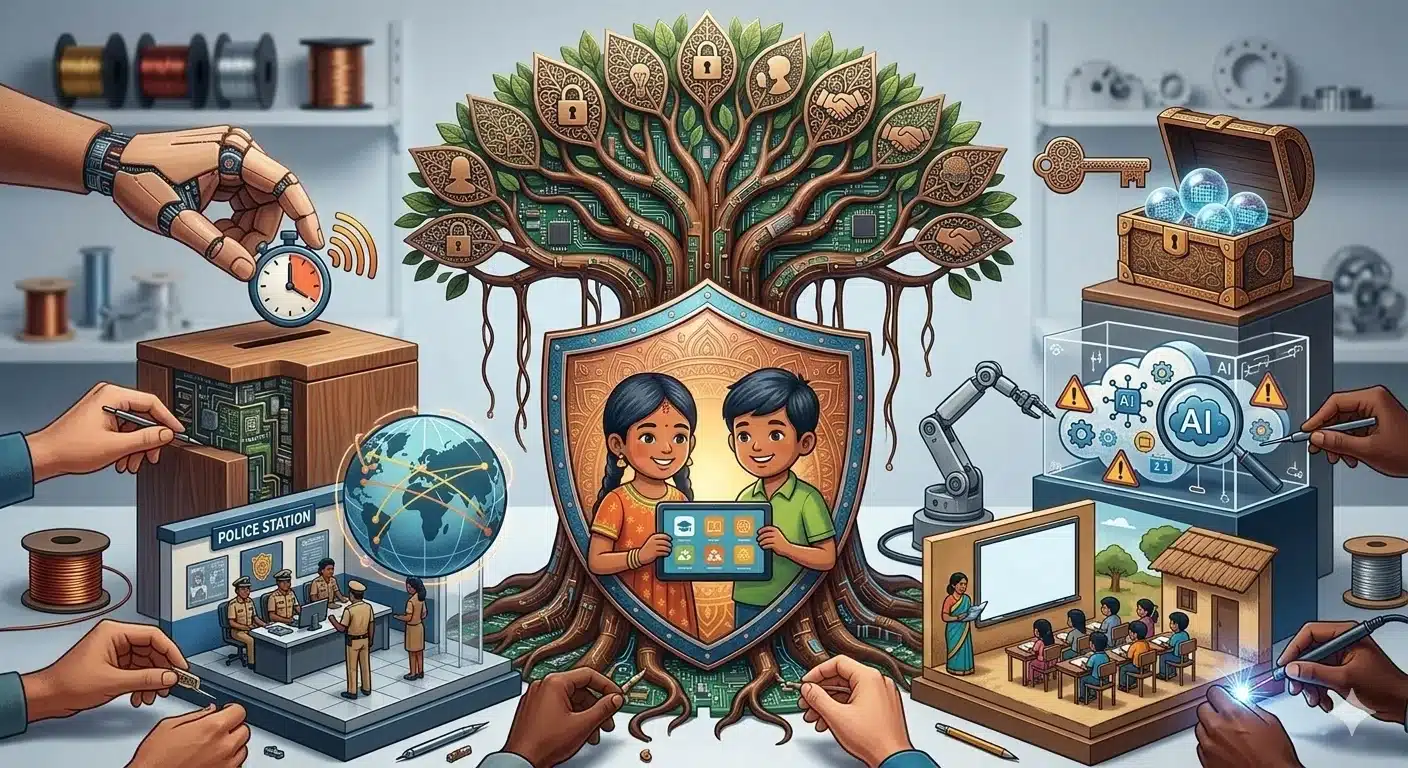

As artificial intelligence (AI) continues to transform interactions with technology, concerns about AI child safety in India are gaining prominence. Digital platforms, from AI-powered toys to social media algorithms, are now integral to children’s lives, offering opportunities for learning while also posing significant risks related to privacy, exploitation, and online harm. The Indian government acknowledges these challenges, with Union Minister for Electronics and IT, Ashwini Vaishnaw, recently outlining regulatory measures aimed at bolstering AI child safety during a statement in Parliament.

The emphasis from officials is clear: the advancement of AI technologies should not compromise the safety of children online. A foundational element supporting AI child safety in India is the Information Technology Act, 2000. This legislation mandates that online platforms prevent the dissemination of harmful content involving children, including sexually explicit materials or content promoting violence. Under this law, social media platforms must promptly remove illegal content upon receiving notifications from the government or courts. In cases of non-consensual intimate content, companies are required to take action within two hours.

These requirements are particularly pertinent in the AI landscape, where harmful content can rapidly proliferate or be generated through advanced technologies. The law also compels platforms to report specific offenses to authorities, bolstering the legal framework designed to protect minors online.

Complementing these regulations is the Digital Personal Data Protection Act, 2023, which introduces stringent rules on the collection and use of children’s personal data, particularly by emerging technologies such as AI-based toys and applications. Companies must obtain verifiable parental consent before processing any child’s personal data, and they face strict limitations on practices such as behavioral tracking and targeted advertising directed at children. These measures aim to prevent AI systems that engage with children from covertly collecting or exploiting their data without parental oversight.

In addition to existing laws, the Indian government has released AI Governance Guidelines to promote ethical AI development. These guidelines recognize children as a vulnerable group at risk of long-term harm from poorly designed AI systems. They propose risk assessment frameworks and monitoring mechanisms to help policymakers detect potential AI-related dangers early. This commitment to responsible development reflects India’s overarching AI strategy, which aims to foster innovation while safeguarding its citizens.

Enforcement mechanisms also play a significant role in strengthening AI child safety. The government has established the Indian Cyber Crime Coordination Centre and the National Cyber Crime Reporting Portal, which enable citizens to report cybercrimes, including those targeting children. Collaborations with internet service providers facilitate the blocking of websites hosting child sexual abuse material, using databases from organizations like the Internet Watch Foundation. Law enforcement agencies also benefit from training programs and cyber forensic capabilities funded through national cybercrime prevention initiatives.

However, legal frameworks alone are insufficient. Public awareness is equally essential if these measures are to be effective. Government-supported initiatives, such as the Information Security Education and Awareness (ISEA) program, have conducted extensive workshops across the country, reaching students, teachers, and law enforcement personnel, among others. Additionally, guidance from organizations like the National Commission for Protection of Child Rights helps shape cyber safety protocols for schools, parents, and educators.

India’s evolving set of laws, policies, and awareness programs signals a concerted effort to create a protective framework surrounding AI technologies. Yet, experts stress that without robust enforcement, platform accountability, and improved digital literacy, well-intentioned regulations may fall short of their objectives. As the nation aspires to lead in AI innovation, it faces the challenge of ensuring that its technological ambitions do not outstrip the protections necessary for its youngest digital citizens.
