Meta Platforms is preparing a significant push into artificial intelligence (AI) hardware by deploying four new in-house chips designed specifically for AI workloads. The move underscores Meta’s commitment to building its own hardware to power its extensive suite of AI tools, social media platforms, and ambitious virtual-reality projects.

The move reflects Meta’s determination to lessen its dependency on external chipmakers like Nvidia and AMD. By developing custom silicon, Meta aims to achieve performance gains that off-the-shelf parts cannot easily match, optimizing its systems for specific AI applications.

Several factors are driving Meta’s shift toward in-house silicon development. Modern AI workloads, ranging from large language models to real-time image and video processing, require immense computational power. General-purpose central processing units (CPUs) and graphics processing units (GPUs) are not always cost- or energy-efficient at the scale of these tasks. Custom AI chips would let Meta tune the chip architecture to its own workloads, reducing latency, increasing throughput, and improving energy efficiency.

Industry insiders suggest that Meta’s AI infrastructure will increasingly rely on these new chips to power applications across its platforms, including Facebook, Instagram, WhatsApp, and its initiatives in virtual reality and the metaverse.

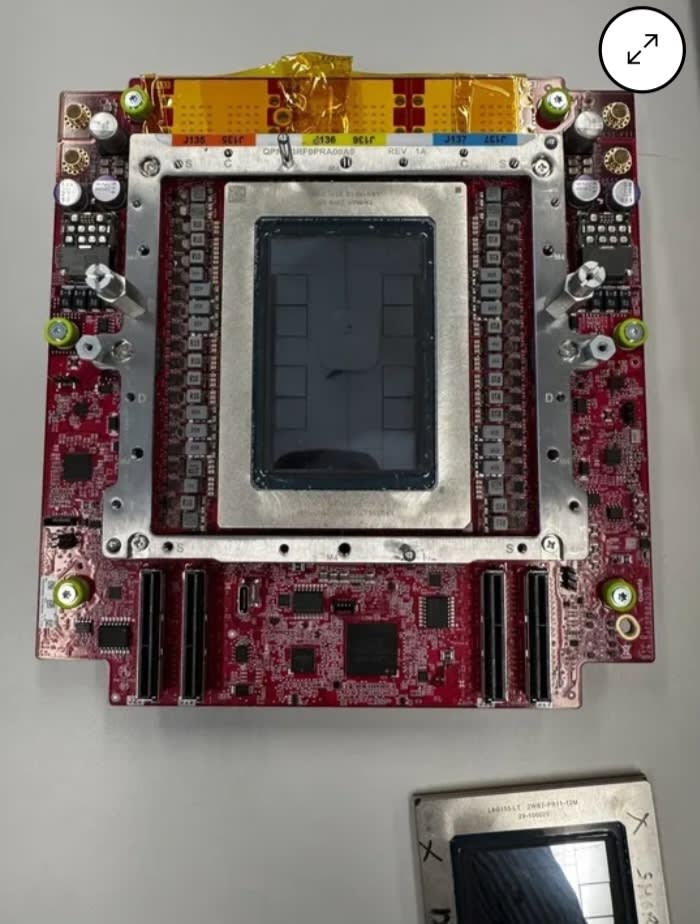

While Meta has not publicly disclosed detailed specifications for its upcoming chips, the suite is expected to consist of four distinct processors, each tailored for specific AI functions. The first is an AI Training Chip, designed to accelerate the training of large neural networks by focusing on tasks like matrix multiplication and high-volume data throughput. This chip will play a crucial role in training AI systems on vast datasets.

The second chip, the AI Inference Chip, will address the demands of running trained models to make predictions. Expected to be energy-efficient and optimized for real-time responses, this chip will be scalable across Meta’s data centers. The third chip, the Edge AI Chip, will likely enable AI functions directly on user devices. This could enhance performance for augmented reality (AR) or virtual reality (VR) applications while minimizing reliance on centralized data processing and thereby improving user privacy.

Lastly, the Data-Center Accelerator will serve as a versatile workhorse within Meta’s data centers, handling bulk AI workloads that do not fit squarely into training or inference tasks but require specialized processing power.
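To give a rough sense of why training hardware concentrates on matrix multiplication, the sketch below counts the floating-point operations in a single dense neural-network layer. It is an illustration only: the function names and layer sizes are hypothetical, nothing here describes Meta’s chips, and the three-times multiplier for a training step is a common rule of thumb rather than an exact figure.

```python
def dense_layer_flops(batch: int, in_features: int, out_features: int) -> int:
    """Approximate FLOPs for one forward pass of a dense layer (matmul only).

    Each of the batch * out_features outputs needs in_features multiplies
    and in_features adds, counted here as 2 operations per multiply-add.
    """
    return 2 * batch * in_features * out_features


def training_step_flops(batch: int, in_features: int, out_features: int) -> int:
    """Rule-of-thumb FLOPs for one training step of the same layer.

    Assumption: the backward pass costs roughly twice the forward pass,
    so a full step is about 3x the forward cost.
    """
    return 3 * dense_layer_flops(batch, in_features, out_features)


if __name__ == "__main__":
    # Hypothetical sizes chosen only for illustration.
    batch, d_in, d_out = 1024, 4096, 4096
    print("forward FLOPs:", dense_layer_flops(batch, d_in, d_out))
    print("training-step FLOPs:", training_step_flops(batch, d_in, d_out))
```

Even this single hypothetical layer requires tens of billions of operations per forward pass, which helps explain why training is expected to be the more compute-hungry of the two workloads and why inference chips can instead prioritize latency and energy use.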

Meta’s initiative puts it in direct competition with established players in the AI hardware sector. Currently, Nvidia’s GPUs dominate the AI data center landscape, while Google’s Tensor Processing Units (TPUs) and Apple’s custom silicon lead in their own segments. However, a tailored architecture could give Meta distinct advantages, such as better performance per watt, greater cost control through reduced exposure to market fluctuations, and strategic independence at a time when geopolitical issues influence chip supply.

Meta’s deployment plans point to a holistic approach to distributing AI workloads across its infrastructure. Initially, the new silicon will likely serve internal functions, powering everything from AI research to backend model services for consumer features. Over time, these chips could become part of Meta’s broader offerings, potentially including AI-as-a-service tools for developers and partners.

Meta has already invested heavily in AI research in areas such as generative AI and content understanding. The introduction of in-house chips is expected to accelerate product development and give the company greater control over performance and costs.

The implications of Meta’s chip strategy are extensive. Improvements in AI processing capabilities could lead to more advanced recommendation systems, enhanced content moderation tools, and richer media features across its platforms. Furthermore, the push into AR and VR hinges on the need for immediate processing in virtual environments, where even slight delays can significantly impact user experience.

However, this development does not occur without scrutiny. Meta faces ongoing regulatory challenges concerning data use, competition, and privacy practices. Critics argue that controlling both hardware and software could entrench Meta’s dominance over digital infrastructure, raising antitrust concerns. Conversely, supporters assert that owning the entire stack fosters innovation and resilience, particularly vital in AI, where computational bottlenecks can hinder progress.

Challenges also loom large for Meta’s chip initiative. Designing advanced silicon requires vast investment, specialized technical talent, and access to cutting-edge fabrication technologies. Collaborations with chip manufacturers, such as Taiwan Semiconductor Manufacturing Company (TSMC), will be essential for large-scale production. Furthermore, Meta must deliver substantial performance improvements to justify its investments in a competitive AI hardware landscape, especially as incumbents like Nvidia continue to innovate rapidly. Additionally, software integration poses its own set of challenges, as the custom hardware must be complemented by a finely tuned software stack to unlock its full potential.

Meta’s move reflects a broader industry trend in which leading technology companies increasingly design custom chips to power AI applications. As AI workloads expand across sectors, companies that deliver high performance at lower energy cost stand to gain a competitive edge. Meta’s strategy also highlights the advantage of owning both hardware and software, a model already adopted by firms like Apple and Google.

In conclusion, Meta’s plan to roll out four new in-house AI chips marks a pivotal shift in its corporate strategy, with the potential to reshape its internal infrastructure, product capabilities, and competitive positioning. By reducing dependence on external suppliers and tailoring hardware to specific AI needs, Meta is positioning itself in the high-stakes silicon race that underpins the future of computing. As these chips are deployed, the industry will closely monitor whether Meta’s hardware investment yields the expected performance gains and strategic benefits in an evolving AI landscape.
