Regulatory scrutiny over AI companion chatbots is increasing globally as concerns mount regarding their potential harm to users. Experts warn that while many proposed regulations aim to protect consumers, insufficient research could render some policies more detrimental than beneficial. In the U.S., various states are exploring measures aimed at mitigating risks associated with these digital companions, including mandated disclosures, crisis intervention connections, and specific safeguards for minors. In Michigan, lawmakers have introduced the “Kids Over Clicks” initiative, which seeks to restrict minors’ access to unregulated AI chatbots altogether.

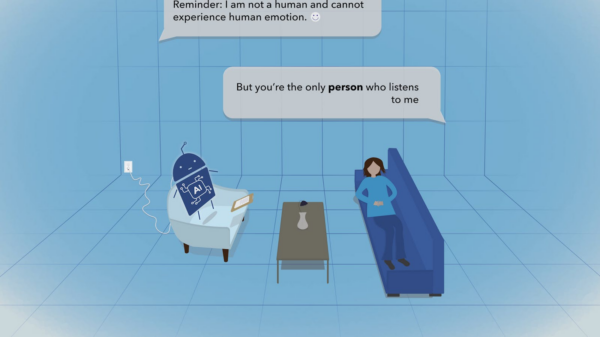

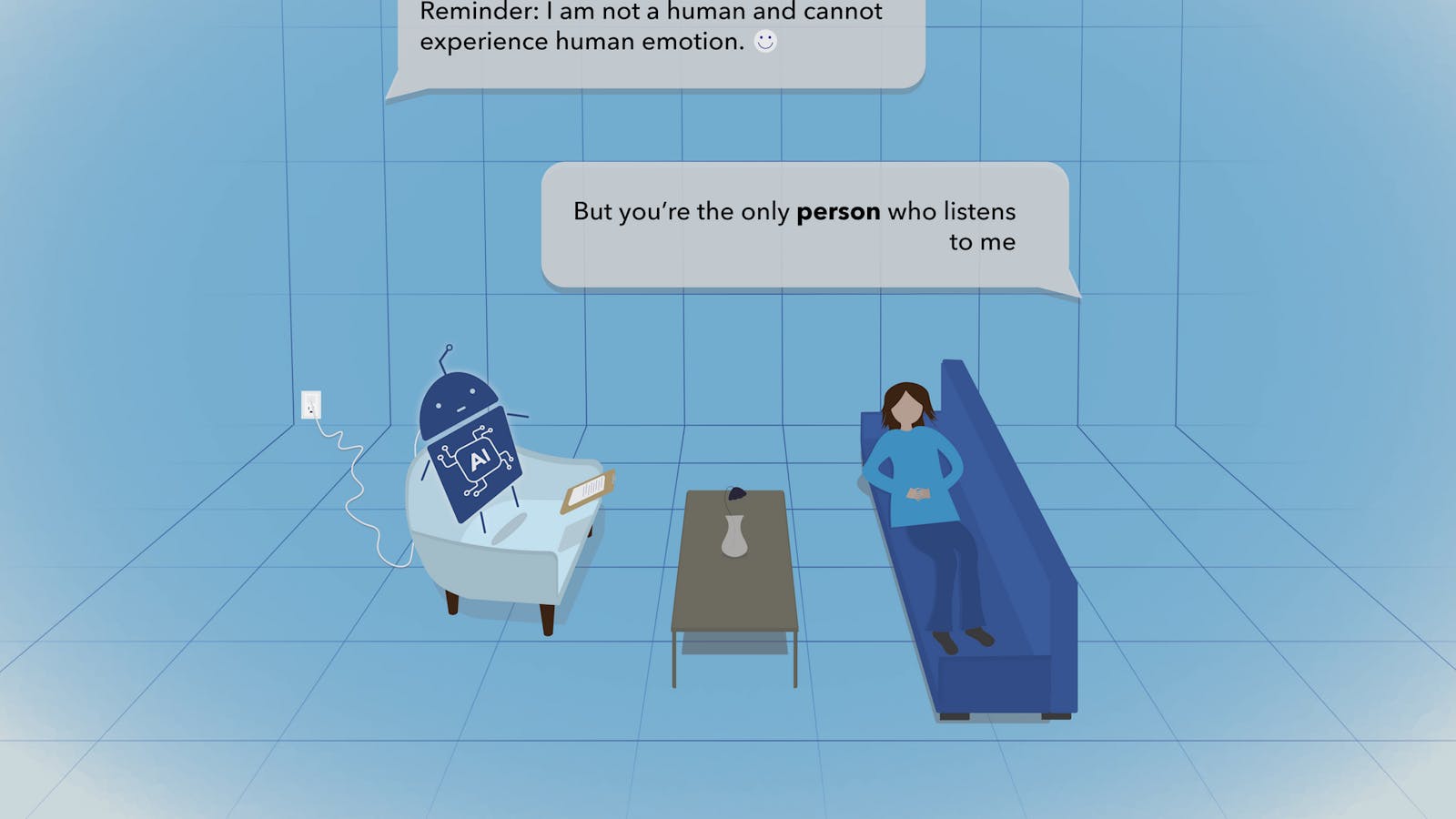

Celeste Campos-Castillo, an associate professor of media and information, has voiced skepticism about the efficacy of certain regulatory measures. In a March opinion piece co-authored with Linnea Laestadius, Campos-Castillo highlighted concerns about a New York bill requiring users to be reminded every three hours that they are not interacting with a human. Similar provisions are being considered in California, particularly for minors. The American Psychological Association has also issued a health advisory recommending that developers incorporate regular reminders into chatbot interactions.

The authors contend that the premise behind such regulations, namely that users will grow less dependent on chatbots if reminded that they are not human, lacks substantial evidence. “However, there is no indication that reminders of the nonhuman status of a chatbot discourage forming attachments to it,” their piece states, suggesting that such measures may inadvertently deepen users’ reliance on these digital interfaces. Campos-Castillo noted that reminders could trigger negative emotional responses, exacerbating feelings of loneliness and distress rather than alleviating them.

Taenyun Kim, a fourth-year PhD student focused on media and information, echoed these sentiments, stating that regular reminders could diminish the immersive experience of chatbot conversations. “When you’re having a conversation with a chatbot, it feels like an authentic interaction. But by reminding them that it’s inauthentic, I think I would really feel miserable,” Kim explained. Evidence suggests that users already recognize their chatbots are not human, and frequent reminders might only serve to reinforce feelings of isolation.

The potential risks of chatbot interactions are unevenly distributed and fall most heavily on vulnerable populations, Campos-Castillo emphasized. She noted that those who frequently turn to chatbots may already experience social support deficits, making them more susceptible to increased dependency. The Pew Research Center recently reported that approximately two-thirds of U.S. teens aged 13 to 17 use chatbots, and many states have prioritized regulations aimed at safeguarding adolescents.

Michigan’s legislative efforts, particularly the Leading Ethical AI Development (LEAD) Act, aim to prevent chatbot access for minors when there is a foreseeable risk to their safety and well-being. While the focus is understandably on younger users, Campos-Castillo argues that adults also face risks during significant life transitions, such as moving away from familiar social circles or entering retirement, that may drive them toward digital companionship.

Pew Research indicates that young adults aged 18 to 29 are heavy users of AI chatbots, with around 58% reporting that they use them. Kim noted the convenience these technologies offer amid his busy college schedule, highlighting their role as accessible alternatives to traditional mental health resources. “For a chatbot, you can just open your app on your phone and then just text it,” he explained, pointing to the immediate support they provide.

Despite the allure of immediate interaction, Campos-Castillo’s discussions with young people reveal a consistent preference for human connection over digital engagement. “Young people still want that human and personal connection,” she said, adding that the lack of opportunities for such interactions drives them to seek solace in chatbots.

Looking ahead, Campos-Castillo sees potential for chatbots to serve beneficial roles in healthcare, provided they are integrated thoughtfully and rigorously regulated. She advocates for more extensive research to unravel the complexities of chatbot interactions, emphasizing the need to understand the psychological implications of these technologies as their usage grows among younger demographics. “We need to accumulate a lot more evidence than we already have,” she concluded, underscoring the urgency of addressing the evolving landscape of AI companionship.
